Build a 24/7 Autonomous AI Agent on a $6/Month VPS: Full Stack Guide

Running a fully autonomous AI agent nonstop doesn’t require beefy, expensive cloud GPUs. We've built these systems ourselves and proven that a $6/month VPS - yes, seriously - can orchestrate robust AI agents powered by GPT-4.1-mini or GPT-5.2 without sacrificing reliability or speed.

An autonomous AI agent is a system that perceives its environment, reasons about it, takes actions, and refines its behavior continuously without a human in the loop. These agents own tasks end-to-end - from decision-making to execution - and scale smoothly on either cloud or edge infrastructure.

Why a $6/Month VPS Is the Sweet Spot for Autonomous AI Agents

Forget the myth that you need premium cloud GPUs. In production, a modest VPS with 2 vCPUs, 4GB RAM, and 40GB SSD handles agent orchestration and API management comfortably. The heavy lifting - the LLM inference - runs offsite on OpenAI or Anthropic servers. This VPS is your agent’s control tower: scheduling calls, handling queues, securely storing API keys, and running lightweight local computations when needed.

We use GPT-4.1-mini for steady continuous querying - it's cost-effective and snappy. For tricky or high-stakes tasks, GPT-5.2 steps in where the quality justifies the expense. Finding that balance is a production nuance you learn by tinkering with live traffic.
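The cheap-by-default, escalate-when-needed idea can be sketched as a tiny router. This is only an illustration: the `Task` class, the `high_stakes` flag, and the 4,000-character threshold are hypothetical, and the model strings simply reuse the names from this article rather than confirmed API identifiers.

```python
from dataclasses import dataclass

# Model names as used in this article; the exact API model IDs are an assumption.
CHEAP_MODEL = "gpt-4.1-mini"
STRONG_MODEL = "gpt-5.2"


@dataclass
class Task:
    prompt: str
    high_stakes: bool = False  # hypothetical flag set by whoever enqueues the task


def pick_model(task: Task, long_prompt_chars: int = 4000) -> str:
    """Use the stronger model only for flagged or unusually long tasks."""
    if task.high_stakes or len(task.prompt) > long_prompt_chars:
        return STRONG_MODEL
    return CHEAP_MODEL


if __name__ == "__main__":
    print(pick_model(Task("Summarize today's error log.")))
    print(pick_model(Task("Approve this refund request.", high_stakes=True)))
```

In practice the routing rule is what you tune against live traffic; the point is to keep it in one function so the cost decision is easy to audit.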

Data from Gartner in 2025 showed 62% of production AI workloads run in cloud environments, but there's a clear and growing shift toward budget edge servers for cost-efficiency [https://gartner.com/ai-workloads-2025]. Careful token budgeting paired with OpenAI's APIs unlocks this $6/month VPS model as a real deployment winner.

Here's a pet peeve: people waste money tossing models at every task. Instead, pick your battles and models wisely, or you’ll blow the budget practically overnight.

Picking Your VPS Provider (and Specs That Actually Work)

Take it from us: 2 vCPUs aren’t negotiable. The API calls themselves are I/O-bound and fit on a single async event loop, but the second core keeps the database, cache, and monitoring from competing with the agent for CPU. 4GB RAM is your safety net for caching embeddings and holding in-memory data reliably. SSD storage of around 40-50GB suffices for logs and small databases.

Bandwidth is another often overlooked factor. Most agents with under 1,000 daily users won't hit 1TB transfer limits. But if you stream large data chunks or do heavy file processing, shop accordingly.
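A quick back-of-the-envelope check makes the bandwidth claim concrete. The workload numbers below (20 requests per user, 50 KB per round trip) are hypothetical; plug in your own.

```python
def monthly_transfer_gb(users_per_day: int, requests_per_user: int,
                        kb_per_request: int, days: int = 30) -> float:
    """Estimate monthly transfer in GB, using 1 GB = 1,000,000 KB."""
    total_kb = users_per_day * requests_per_user * kb_per_request * days
    return total_kb / 1_000_000


# Hypothetical workload: 1,000 users/day, 20 requests each, 50 KB per round trip.
print(monthly_transfer_gb(1000, 20, 50))  # 30.0
```

Under these assumptions the agent moves about 30 GB a month, a small fraction of a 1 TB allowance. Streaming files in the megabyte range changes the picture quickly.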

Watch out for providers with flaky root access or spotty uptime. We’ve burned hours debugging network glitches - don’t learn this the hard way.

| Provider | Plan | CPU | RAM | Storage | Bandwidth | Price |
|---|---|---|---|---|---|---|
| Vultr | High Frequency $6/mo | 2 vCPU | 4 GB | 40 GB | 1 TB transfer | $6/mo |
| Linode | Nanode 4GB | 2 vCPU | 4 GB | 50 GB | 1 TB transfer | $6/mo |
| DigitalOcean | Basic Droplet 4GB | 2 vCPU | 4 GB | 80 GB | 4 TB transfer | $6/mo |

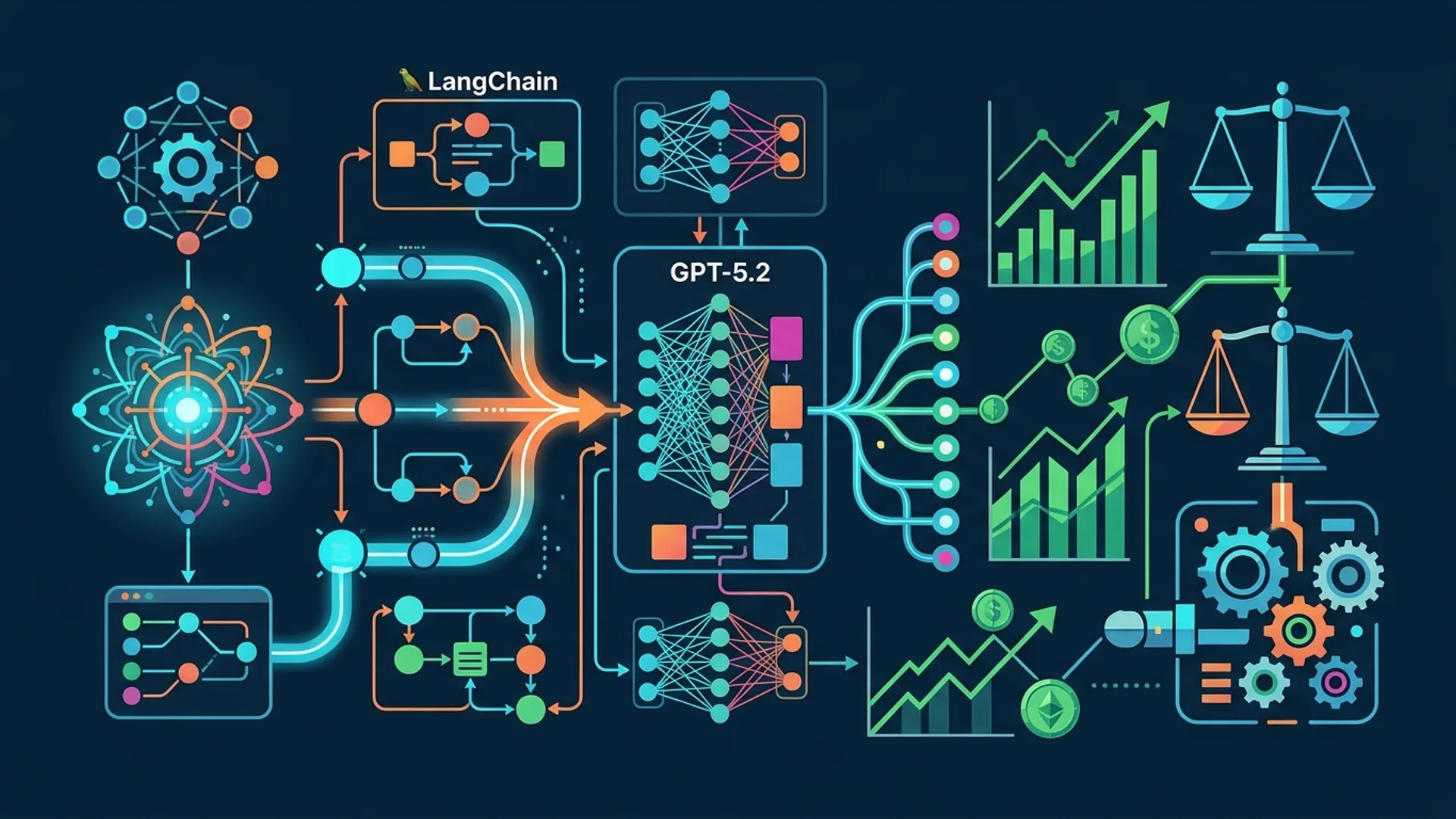

Architecture: How This All Comes Together

Your autonomous agent requires tight choreography:

- Agent Core: The relentless loop that fires off API calls, parses results, stores context, and chains follow-ups.

- Scheduler: Your timekeeper, firing events on cron or hooking external signals.

- Memory Store: A lightweight DB (SQLite, Postgres, or Redis) caching agent state, chat history, and embeddings.

- Prompt Management: Templates with dynamic injection enable multi-model workflows without hardcoding prompts.

- Tracing & Evaluation: Every prompt, every reply, latency, errors - all meticulously logged to track down bugs and tune performance.

- Security: API keys locked in environment variables, rotated when needed.

If this feels overwhelming, it’s because managing these pieces in production often is. I still remember losing a night tracking down a bug caused by stale prompt context leaking across multiple calls.

Sample agent core snippet:

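The agent core, memory store, and task queue described above can be sketched in a few dozen lines. Treat this as a minimal illustration under stated assumptions, not the production agent: `fake_model` stands in for the real LLM API call, and the `history` table and the `run_agent` and `recent_context` names are invented for the example.

```python
import asyncio
import sqlite3
import time
from typing import Awaitable, Callable

ModelCall = Callable[[str], Awaitable[str]]


def open_memory(path: str = ":memory:") -> sqlite3.Connection:
    """Create the memory store: one table of (timestamp, prompt, reply) rows."""
    db = sqlite3.connect(path)
    db.execute("CREATE TABLE IF NOT EXISTS history (ts REAL, prompt TEXT, reply TEXT)")
    return db


def recent_context(db: sqlite3.Connection, limit: int = 3) -> str:
    """Return the last few replies, oldest first, as context for the next prompt."""
    rows = db.execute(
        "SELECT reply FROM history ORDER BY rowid DESC LIMIT ?", (limit,)
    ).fetchall()
    return "\n".join(reply for (reply,) in reversed(rows))


async def run_agent(call_model: ModelCall, db: sqlite3.Connection,
                    tasks: asyncio.Queue, interval: float = 0.0) -> None:
    """Pull tasks until a None sentinel arrives, storing every exchange."""
    while True:
        task = await tasks.get()
        if task is None:
            break
        prompt = f"Context:\n{recent_context(db)}\n\nTask: {task}"
        try:
            reply = await call_model(prompt)
        except Exception as exc:  # keep the loop alive when an API call fails
            reply = f"ERROR: {exc}"
        db.execute("INSERT INTO history VALUES (?, ?, ?)", (time.time(), prompt, reply))
        db.commit()
        await asyncio.sleep(interval)


async def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM API call."""
    await asyncio.sleep(0)
    return f"handled {len(prompt)} chars"


async def main() -> int:
    db = open_memory()
    tasks: asyncio.Queue = asyncio.Queue()
    for item in ("check inbox", "summarize logs", None):
        tasks.put_nowait(item)
    await run_agent(fake_model, db, tasks)
    return db.execute("SELECT COUNT(*) FROM history").fetchone()[0]


if __name__ == "__main__":
    print(asyncio.run(main()))  # number of stored exchanges
```

Capping the context at the last few replies is one simple guard against the stale-context problem mentioned above; a real agent would likely also scope context per conversation.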

Essential Open Source Tools We've Built and Use

Don’t reinvent the wheel. These libraries power stable, traceable workflows:

PromptFlow

Production-tested, PromptFlow traces every prompt, response, and latency metric. When your agent runs unsupervised 24/7, you NEED this visibility. Forget it, and you're flying blind.
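To show what "trace every prompt, response, and latency" means in practice, here is a standard-library sketch. It is not PromptFlow's API; the `traced` wrapper and the record fields are assumptions made for illustration, and a tracing tool would capture the same information with far more tooling around it.

```python
import asyncio
import json
import time
from typing import Awaitable, Callable, List

ModelCall = Callable[[str], Awaitable[str]]


def traced(call_model: ModelCall, sink: List[str]) -> ModelCall:
    """Wrap a model call so each invocation appends one JSON trace line to sink."""

    async def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        record = {"prompt": prompt, "response": None, "error": None}
        try:
            record["response"] = await call_model(prompt)
            return record["response"]
        except Exception as exc:
            record["error"] = repr(exc)
            raise  # the caller still sees the failure
        finally:
            record["latency_s"] = round(time.perf_counter() - start, 4)
            sink.append(json.dumps(record))

    return wrapper


async def echo_model(prompt: str) -> str:
    """Stand-in for a real LLM API call."""
    return prompt.upper()


if __name__ == "__main__":
    traces: List[str] = []
    print(asyncio.run(traced(echo_model, traces)("ping")))
    print(sorted(json.loads(traces[0])))
```

Writing each record as one JSON line makes the trace easy to append to a file and grep later.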

Prompty

Modularize prompts into reusable templates. Flip between GPT-4.1-mini and GPT-5.2 just by swapping configs. No heavy rewrites needed.
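The template-plus-config idea looks roughly like this when built on the standard library. This is a sketch of the pattern only, not Prompty's file format or API; the `triage` template, the profile names, and the temperature values are hypothetical.

```python
from string import Template

# Prompt text lives in one place; code only fills in the fields.
TEMPLATES = {
    "triage": Template(
        "You are a support triage agent.\nTicket: $ticket\nReply with one of: $labels"
    ),
}

# Swapping models is a config change, not a code change.
MODEL_CONFIG = {
    "default": {"model": "gpt-4.1-mini", "temperature": 0.2},
    "escalated": {"model": "gpt-5.2", "temperature": 0.0},
}


def build_request(template_name: str, profile: str, **fields: str) -> dict:
    """Render a template and pair it with the chosen model profile."""
    prompt = TEMPLATES[template_name].substitute(**fields)  # KeyError if a field is missing
    return {**MODEL_CONFIG[profile], "prompt": prompt}


if __name__ == "__main__":
    request = build_request("triage", "default",
                            ticket="Login fails", labels="bug, billing, other")
    print(request["model"])
    print(request["prompt"])
```

Because `substitute` raises on a missing field, a half-filled prompt fails loudly instead of reaching the model.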

LangChain / Other Agent Frameworks

LangChain’s amazing but adds bloat and complexity. When your use case doesn’t require fancy retrieval-augmented generation, stick with lightweight orchestration through PromptFlow.

Helpful extras:

- asyncio: Runs API calls concurrently on a single event loop, so one slow response doesn't block the rest.

- Redis: An in-memory cache that speeds up repeated queries.

- SQLite/Postgres: Durable memory for stateful agents.
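Here is how those extras fit together in a minimal sketch: a semaphore caps the number of in-flight API calls, and a cache answers repeated prompts without a second call. The plain dict stands in for Redis, and `gather_limited` is a hypothetical helper name.

```python
import asyncio
from typing import Awaitable, Callable, Dict, List, Optional


async def gather_limited(
    prompts: List[str],
    call_model: Callable[[str], Awaitable[str]],
    max_in_flight: int = 4,
    cache: Optional[Dict[str, str]] = None,
) -> List[str]:
    """Answer every prompt, calling the model once per distinct uncached prompt."""
    cache = {} if cache is None else cache
    gate = asyncio.Semaphore(max_in_flight)  # caps concurrent API calls

    async def fetch(prompt: str) -> None:
        async with gate:
            cache[prompt] = await call_model(prompt)

    # dict.fromkeys removes duplicates while keeping the original order.
    missing = [p for p in dict.fromkeys(prompts) if p not in cache]
    await asyncio.gather(*(fetch(p) for p in missing))
    return [cache[p] for p in prompts]


async def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM API call."""
    await asyncio.sleep(0.01)
    return prompt[::-1]


if __name__ == "__main__":
    print(asyncio.run(gather_limited(["ab", "cd", "ab"], fake_model)))
```

Pass the same `cache` dict across calls to reuse answers between batches; with Redis the lookups would become network calls, but the shape stays the same.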

Getting Started: Setup and Deployment

1. Pick your VPS and install Ubuntu 22.04 LTS

Opt for a $6/month plan with 2 vCPUs and 4GB RAM. Vultr's High Frequency plan is rock solid in our experience.

2. Secure environment setup

Use python-dotenv or a proper vault solution to load API keys - never embed secrets in your code or git.


Sample .env:

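As a rough picture of what such a file contains and how it gets loaded, here is a dependency-free sketch. In a real project `load_dotenv()` from python-dotenv does this for you; the hand-rolled parser below only illustrates the mechanics. `OPENAI_API_KEY` is the variable the OpenAI SDK reads by default, while `AGENT_LOG_LEVEL` and the placeholder values are hypothetical.

```python
import os
from typing import Dict

SAMPLE = """\
# Placeholder values only; never commit a real .env file.
OPENAI_API_KEY=sk-your-key-here
AGENT_LOG_LEVEL=INFO
"""


def parse_env(text: str) -> Dict[str, str]:
    """Parse KEY=VALUE lines, skipping blanks and # comments."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def load_env(path: str = ".env") -> None:
    """Copy values into os.environ without overriding variables already set."""
    with open(path, encoding="utf-8") as fh:
        for key, value in parse_env(fh.read()).items():
            os.environ.setdefault(key, value)


if __name__ == "__main__":
    print(sorted(parse_env(SAMPLE)))  # ['AGENT_LOG_LEVEL', 'OPENAI_API_KEY']
```

Add `.env` to `.gitignore` and restrict the file to the agent's user (for example with `chmod 600 .env`) so the key stays out of both the repo and other accounts' reach.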

3. Clone your prompts

Keep prompt templates in git or config directories; this aids consistent deployment and audit.

4. Write your async Python agent script (agent.py)

This script runs event loops, fires API calls with PromptFlow and Prompty, and logs traces.

5. Run it forever

Use systemd or pm2 to keep your agent humming. Here’s a simple agent.service example:

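A unit file for this setup might look like the text rendered below. We generate it from Python here so the paths live in one place; the `agent` user and the `/opt/agent` directory are hypothetical, and the directives shown are a reasonable starting set rather than the exact file we run.

```python
from textwrap import dedent


def render_unit(user: str, workdir: str, python: str = "/usr/bin/python3") -> str:
    """Return the text of a systemd unit that restarts the agent if it exits."""
    return dedent(f"""\
        [Unit]
        Description=Autonomous AI agent
        After=network-online.target
        Wants=network-online.target

        [Service]
        User={user}
        WorkingDirectory={workdir}
        EnvironmentFile={workdir}/.env
        ExecStart={python} {workdir}/agent.py
        Restart=always
        RestartSec=5

        [Install]
        WantedBy=multi-user.target
        """)


if __name__ == "__main__":
    # Hypothetical user and install path.
    print(render_unit("agent", "/opt/agent"))
```

Save the output as /etc/systemd/system/agent.service. `Restart=always` with `RestartSec=5` is what keeps the agent running after a crash, and `EnvironmentFile` hands the API keys to the process without putting them in the unit itself.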

Enable and start the service with systemctl: run sudo systemctl daemon-reload, then sudo systemctl enable --now agent.service.

Cost Breakdown: What You Actually Pay

| Cost Item | Monthly Cost | Notes |
|---|---|---|
| VPS Hosting | $6.00 | 2 vCPU, 4GB RAM, 1TB bandwidth |
| OpenAI API Usage | $10–$50 | Depends on tokens; GPT-4.1-mini ~$0.02 / 1K tokens |
| Domain & SSL (optional) | $1–$5 | Use basic domain or free SSL from Let’s Encrypt |

Adding up the table, you're looking at roughly $16 to $61 monthly for steady 10K requests.
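The arithmetic behind that range is simple enough to script. The sketch below uses the ~$0.02 per 1K tokens figure from the table and a hypothetical average of 100 tokens per request; both are assumptions, so check current pricing and your own token counts before trusting the output.

```python
def monthly_cost(requests: int, tokens_per_request: int, usd_per_1k_tokens: float,
                 vps_usd: float = 6.0, extras_usd: float = 0.0) -> float:
    """Total monthly cost in USD: VPS + API usage + optional extras."""
    api_usd = requests * tokens_per_request / 1000 * usd_per_1k_tokens
    return round(vps_usd + api_usd + extras_usd, 2)


# 10K requests at a hypothetical 100 tokens each, at the table's ~$0.02 per 1K tokens.
print(monthly_cost(10_000, 100, 0.02))  # 26.0
```

That is $20 of API usage on top of the $6 VPS. Doubling the tokens per request doubles the API line, which is why trimming prompts pays off faster than shopping for a cheaper server.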

Pro tip: Save big by batching queries, caching results, and carefully picking when to upgrade models. Throwing GPT-5.2 at every prompt wastes money fast.

Stack Overflow’s 2026 AI Dev Survey confirms 48% of devs cut costs by optimizing prompt sizes and model selection [https://stackoverflow.com/survey/2026]. We live this every single day.

Keep Your Agent Healthy With Observability

No excuses. You must monitor your agent. The Prometheus Node Exporter is lightweight enough to run on your VPS. PromptFlow captures traces for all inputs, outputs, and latencies.

Throw in simple cron-based health checks or pings, and hook alerts to Slack or email.
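One simple way to do a cron-based health check is a heartbeat file: the agent touches it every loop, and a cron job alerts when it goes stale. This is a sketch under that assumption; the `beat` and `is_stale` names and the 300-second threshold are hypothetical, and the alert itself (Slack webhook, email) is left out.

```python
import tempfile
import time
from pathlib import Path
from typing import Optional


def beat(path: Path) -> None:
    """Called by the agent on every loop iteration."""
    path.write_text(str(time.time()))


def is_stale(path: Path, max_age_s: float, now: Optional[float] = None) -> bool:
    """True if the heartbeat is missing, unreadable, or older than max_age_s."""
    now = time.time() if now is None else now
    try:
        last = float(path.read_text())
    except (FileNotFoundError, ValueError):
        return True
    return now - last > max_age_s


if __name__ == "__main__":
    heartbeat = Path(tempfile.mkdtemp()) / "agent.heartbeat"
    print(is_stale(heartbeat, 300))  # True: no heartbeat written yet
    beat(heartbeat)
    print(is_stale(heartbeat, 300))  # False: just written
```

The cron side is then a small script that calls `is_stale` and sends the alert when it returns True. Checking a file rather than the process catches a hung agent, not just a dead one.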

Logging example:

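A reasonable baseline, sketched with the standard library, is a rotating file log so a 24/7 agent can't fill the disk. The logger name `agent`, the file name, and the size limits are assumptions to adjust for your setup.

```python
import logging
from logging.handlers import RotatingFileHandler


def setup_logging(path: str = "agent.log", level: int = logging.INFO) -> logging.Logger:
    """Return the 'agent' logger writing to a size-capped, rotating file."""
    logger = logging.getLogger("agent")
    logger.setLevel(level)
    if not logger.handlers:  # avoid stacking handlers if called twice
        handler = RotatingFileHandler(path, maxBytes=5_000_000, backupCount=3)
        handler.setFormatter(
            logging.Formatter("%(asctime)s %(levelname)s %(name)s %(message)s")
        )
        logger.addHandler(handler)
    return logger


# Typical use inside agent.py:
#   log = setup_logging()
#   log.info("model=%s latency_s=%.2f", "gpt-4.1-mini", 0.84)
#   log.exception("API call failed")  # inside an except block; records the traceback
```

With `maxBytes=5_000_000` and `backupCount=3`, the logs top out around 20 MB, which fits easily on a 40GB disk.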

Update your OS and packages regularly - forgotten updates are security and reliability disasters waiting to happen.

Bonus: Continuous training and explainability aren’t plug-and-play yet, but you can start simple by integrating basic RLHF loops once your usage stabilizes.

Definitions

PromptFlow is a platform that traces and visualizes LLM workflows, giving you transparency, debugging, and evaluation tools for prompt engineering.

Prompty manages prompt templates with dynamic inputs and multi-model support, making reuse and parameter tweaking easy without changing code.

Summary and Next Steps

A $6/month VPS plus OpenAI APIs and solid tooling is a proven, reliable way to run autonomous AI agents nonstop. We've seen this stack succeed repeatedly in production.

Lock down your VPS, secure your API keys, and orchestrate async calls. Modular, traceable workflows let you ship fast, iterate without guesswork, and keep costs lean.

Frequently Asked Questions

Q: Can a $6 VPS handle multiple AI agents simultaneously?

Yes. With 2 vCPUs and 4GB RAM, you can run several lightweight agents. Concurrency management through async code and API rate limiting is essential.

Q: What models work best for cost-effective autonomous agents?

GPT-4.1-mini is your everyday champion for low-cost queries. GPT-5.2 is reserved for complex reasoning where extra quality justifies the added cost.

Q: How do I secure API keys on a VPS?

Store API keys as environment variables via .env files with python-dotenv or a secure vault. Never hardcode or expose keys in repos.

Q: How do I debug agent issues in production?

Use PromptFlow tracing to capture every prompt input, output, latency, and error. Pair this with solid logging and alerting to identify problems fast.

Building autonomous AI agents? AI 4U delivers production AI apps in 2–4 weeks. Reach out if you want your AI agent deployed without the usual headaches.