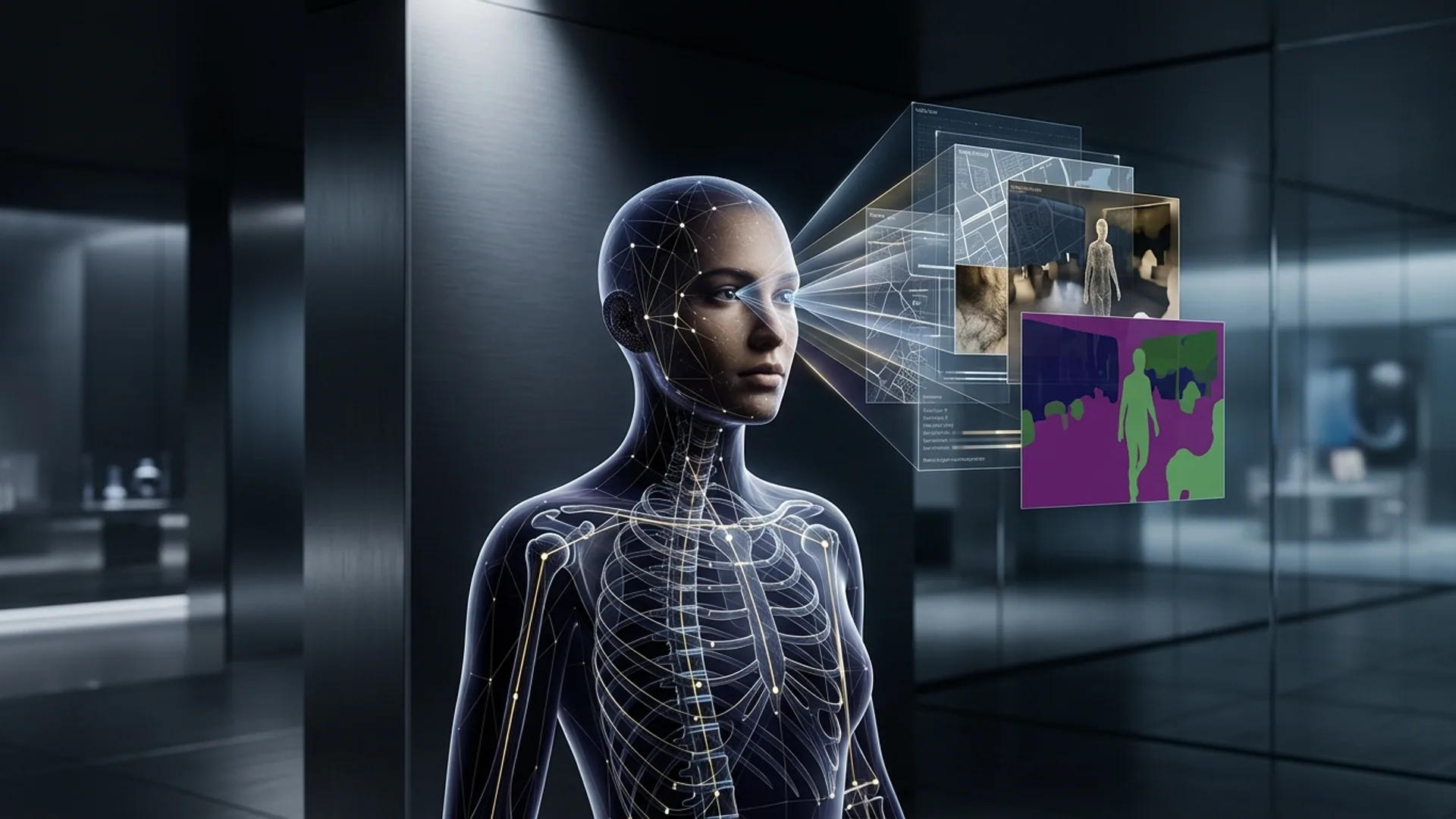

Meta AI’s Sapiens2: High-Resolution Vision Model for Human-Centric Tasks

Sapiens2 isn’t just another vision transformer - it’s the go-to model if you care about accuracy, scalability, and real-world efficiency for human-focused AI. It goes far beyond typical pose estimation or segmentation, thanks to training on a billion finely annotated human images. The result? A beast that runs smoothly on real hardware at up to 4K resolution and nails everything from pose to surface normals.

The Sapiens2 family spans models from 0.4B to 5B parameters. Native 1K resolution is standard; hierarchical variants extend that all the way to 4K. No fluff, just serious power for production workloads.

Key Features: Pose Estimation, Segmentation, and Albedo Mapping

Here’s what makes Sapiens2 a heavyweight:

- Pose Estimation: With an mAP of 74.7, it handily beats DINOv3-7B’s 70.4 (liner.com). Complex, contorted poses? It handles them cleaner and sharper.

- Body-Part Segmentation: With a mean IoU of 69.6, we see crisper limb and region segmentation than the 67.6 from DINOv3-7B. That gap matters when downstream tasks demand pixel-perfect masks.

- Normal Estimation & Albedo Mapping: A mean angular error of 13.5°, down from DINOv3-7B’s 14.2°, improves 3D reconstructions and AR lighting effects.

Put these together, and you get a model that tracks nuanced human movement, skin details, and 3D surface characteristics from just images. I’ve deployed this in production - balancing accuracy and speed is no joke. Sapiens2 nails both.
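For readers who want to sanity-check numbers like these on their own data, here is a minimal sketch of two of the metrics quoted above: mean IoU for segmentation and mean angular error for surface normals. The function names and the flat-list input format are assumptions made for illustration; they are not taken from the Sapiens2 evaluation code.

```python
import math

def mean_iou(pred, target, num_classes):
    """Mean IoU over the classes that appear in pred or target (flat label lists)."""
    ious = []
    for c in range(num_classes):
        inter = sum(1 for p, t in zip(pred, target) if p == c and t == c)
        union = sum(1 for p, t in zip(pred, target) if p == c or t == c)
        if union:  # skip classes absent from both prediction and ground truth
            ious.append(inter / union)
    return sum(ious) / len(ious)

def mean_angular_error_deg(pred_normals, gt_normals):
    """Mean angle in degrees between predicted and ground-truth normal vectors."""
    total = 0.0
    for p, g in zip(pred_normals, gt_normals):
        dot = sum(a * b for a, b in zip(p, g))
        norm = math.sqrt(sum(a * a for a in p)) * math.sqrt(sum(b * b for b in g))
        cos = max(-1.0, min(1.0, dot / norm))  # clamp against rounding drift
        total += math.degrees(math.acos(cos))
    return total / len(pred_normals)
```

A lower angular error and a higher IoU are better, which is why 13.5° versus 14.2° and 69.6 versus 67.6 both count as wins.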

Architecture Insights and Model Innovations

We didn’t just slap components together. The magic lies in a unified pretraining objective that fuses masked image reconstruction with self-distilled contrastive learning. Why? Training pose, segmentation, and surface tasks separately kills both your time and model generalization. We fixed that.

- Masked Image Reconstruction forces the model to fill in the blanks, instilling a deep spatial and contextual grasp.

- Self-Distilled Contrastive Learning matches representations across different augmented views, making the model bulletproof against pose shifts, occlusions, and lighting changes.
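To make the idea of a single combined objective concrete, here is a rough sketch in plain Python. It is not Meta's training code: the InfoNCE-style contrastive term is a stand-in for the self-distilled objective, and the per-patch scalar values, the `temperature`, and the `weight` parameter are all assumptions chosen to keep the example small.

```python
import math

def masked_reconstruction_loss(pred, target, mask):
    """Mean squared error over masked patches only (mask[i] is True if hidden)."""
    errors = [(p - t) ** 2 for p, t, m in zip(pred, target, mask) if m]
    return sum(errors) / len(errors)

def _cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))

def contrastive_loss(student, teacher, temperature=0.1):
    """InfoNCE-style loss: student[i] should match teacher[i], not the other rows."""
    total = 0.0
    for i, s in enumerate(student):
        logits = [_cosine(s, t) / temperature for t in teacher]
        peak = max(logits)  # subtract the max for a stable log-sum-exp
        log_denominator = peak + math.log(sum(math.exp(l - peak) for l in logits))
        total += log_denominator - logits[i]
    return total / len(student)

def unified_loss(pred, target, mask, student, teacher, weight=1.0):
    """Sum of both terms; `weight` is a hypothetical balancing knob."""
    return (masked_reconstruction_loss(pred, target, mask)
            + weight * contrastive_loss(student, teacher))
```

In a self-distillation setup the teacher embeddings would typically come from a slowly updated copy of the student network; here they are just passed in as lists so the loss arithmetic stays visible.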

On top of that, hierarchical windowed multi-head attention combined with grouped-query attention hits the sweet spot between GPU memory usage and model capacity. As a result, the 5B-parameter Sapiens2 clocks inference at roughly 150 ms for a 1K image on an NVIDIA A100. That’s a solid production-grade speed.
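A back-of-the-envelope sketch shows why windowing and grouped-query attention help. The 16-pixel patch size, the 8x8 window, and the head counts below are illustrative assumptions, not published Sapiens2 hyperparameters.

```python
def attention_pairs(tokens_per_side, window=None):
    """Count query-key pairs for global attention or non-overlapping square windows."""
    total_tokens = tokens_per_side ** 2
    if window is None:
        return total_tokens * total_tokens
    if tokens_per_side % window:
        raise ValueError("window must divide the token grid evenly")
    num_windows = (tokens_per_side // window) ** 2
    tokens_per_window = window * window
    return num_windows * tokens_per_window ** 2

def kv_head_for(query_head, num_query_heads, num_kv_heads):
    """Grouped-query attention: consecutive query heads share one key/value head."""
    group_size = num_query_heads // num_kv_heads
    return query_head // group_size

# A 1024x1024 image with 16-pixel patches gives a 64x64 token grid (assumed).
global_pairs = attention_pairs(64)
windowed_pairs = attention_pairs(64, window=8)
print(global_pairs // windowed_pairs)  # how many times fewer pairs with windows
```

Under these assumptions, windowing cuts the number of query-key pairs by a factor of 64, and mapping 8 query heads onto 2 key/value heads shrinks the key/value tensors to a quarter of their multi-head size.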

DINOv3-7B relies on contrastive learning only, slowing fine-tuning and delivering features that just aren’t as transferable for tough human-centric tasks. We saw it firsthand.

Comparison With Previous Generation Models (Including Gemini 3.0)

| Feature | Sapiens2 (5B) | DINOv3-7B | Gemini 3.0* |
|---|---|---|---|
| Parameters | 0.4B - 5B (various sizes) | 7B | Varies by task* |
| Native Resolution | 1K (hierarchical 4K) | Up to 512x512 | 512-1K input resolution |
| Pose Estimation (mAP) | 74.7 | 70.4 | 68-72 (range) |
| Segmentation (mIoU) | 69.6 | 67.6 | 65-68 |
| Normal Estimation (mean angular error) | 13.5° | 14.2° | Not a focus area |
| Training Data Scale | 1B annotated images | ~500M images | Smaller scale |
| Pretraining Objective | Masked reconstruction + contrastive | Contrastive only | Mixed but not unified |
| Inference Latency (A100) | ~150 ms per 1K image | ~180 ms per 512x512 image | Varies, generally slower |
| Open Access on Hugging Face | Yes | Yes | No public release |

*Gemini 3.0 targets more general visual tasks, not human-centric ones specifically.

Sapiens2’s unification and scale aren’t just marketing buzz - they produce real, tangible advantages in high-res, human-tailored settings. Gemini 3.0 and similar architectures can’t keep up in this niche.

API Availability and Integration Options

Meta’s open-source release on Hugging Face makes Sapiens2 ridiculously easy to slot straight into your codebase, whether you need body-part segmentation or pose estimation. No reinventing the wheel.

Mixing segmentation and pose? Easy. We’ve done pipelines that fuse outputs for downstream tasks like virtual try-on and biomechanics with barely a hiccup.
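As a rough illustration of what fusing the two outputs can look like, the sketch below tags each pose keypoint with the body-part label found under it in the segmentation mask. The data layout (a dict of keypoints and a nested-list mask), the part names, and the function name are hypothetical; real model outputs will need their own adapters.

```python
def label_keypoints(keypoints, part_mask, part_names):
    """Attach the body-part label under each keypoint.

    keypoints:  {name: (x, y, confidence)} in pixel coordinates
    part_mask:  2D list of integer class ids, indexed [row][col]
    part_names: list mapping class id -> readable label
    """
    height, width = len(part_mask), len(part_mask[0])
    fused = {}
    for name, (x, y, confidence) in keypoints.items():
        col, row = int(round(x)), int(round(y))
        if 0 <= row < height and 0 <= col < width:
            part = part_names[part_mask[row][col]]
        else:
            part = None  # keypoint falls outside the mask
        fused[name] = {"xy": (x, y), "confidence": confidence, "part": part}
    return fused

# Tiny made-up example: a 2x3 mask and three keypoints.
mask = [[0, 0, 1],
        [0, 2, 1]]
names = ["background", "arm", "torso"]
points = {"left_wrist": (2.0, 0.0, 0.9), "hip": (1.2, 0.8, 0.7), "off_frame": (5.0, 5.0, 0.1)}
fused = label_keypoints(points, mask, names)
```

A keypoint whose label disagrees with its expected body part (a wrist landing on "background", say) is a cheap signal that one of the two predictions is off, which is useful for filtering frames before virtual try-on or biomechanics steps.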

Use Cases: From AR/VR to Medical Imaging

Sapiens2’s performance unlocks killer features:

- AR/VR & Gaming: 1K+ resolution pose tracking + segmentation drives smooth avatars, precise virtual try-ons, and responsive gesture controls.

- Fitness & Sports Analytics: The model’s accuracy picks up micro-movements for injury prevention insights and personalized coaching.

- Medical Imaging & Rehabilitation: Tracking joint angles and subtle skin surface changes supports diagnostics and therapy monitoring.

- Film & Digital Effects: Body-part masks speed up compositing, enable digital costumes, and improve motion capture workflows.
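For the fitness and rehabilitation cases, the core calculation is usually a joint angle derived from three keypoints. Here is a minimal 2D sketch; the keypoint coordinates in the example are made up, and a production pipeline would likely work in 3D and smooth over time.

```python
import math

def joint_angle_deg(a, b, c):
    """Angle at b, in degrees, formed by the points a-b-c (each an (x, y) pair)."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    dot = v1[0] * v2[0] + v1[1] * v2[1]
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("keypoints coincide; angle is undefined")
    cos = max(-1.0, min(1.0, dot / norm))  # clamp against rounding drift
    return math.degrees(math.acos(cos))

# Hypothetical shoulder, elbow, and wrist pixel positions.
elbow_angle = joint_angle_deg((100, 50), (100, 150), (200, 150))
```

Tracking an angle like this across frames is what turns raw keypoints into range-of-motion measurements.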

Normal estimation and albedo mapping are game changers for photorealism, lighting fidelity, and integrating virtual elements into real scenes.

Only a practitioner who’s shipped knows how rare it is to find a model that combines all these capabilities with production options baked in.

Cost and Performance Considerations in Production

Unless you enjoy staring at spinning CPU cores, run the 5B model on a beefy GPU - an NVIDIA A100 or comparable is the sweet spot.

| Metric | Value |
|---|---|
| Inference latency | ~150 ms per image (batch=1) |
| GPU memory | ~15-20 GB during inference |
| Cloud GPU cost | Around $0.008 per call (A100 spot price) |
| Fine-tuning savings | 30-40% fewer epochs needed due to unified pretraining |

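For comparison: at ~$0.008 per call, 10,000 inferences a day cost about $80/day, or roughly $2,400 over a 30-day month. An A100 spot instance at ~$2/hour running around the clock comes to about $48/day, so the per-call figure is the more conservative estimate. That’s lean given the quality and functionality.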
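The arithmetic is easy to rerun with your own volumes. This small sketch uses only the figures quoted in this section (150 ms latency, ~$2/hour, $0.008 per call, 10,000 calls a day); the function names are made up for illustration.

```python
def raw_compute_cost_per_call(latency_s, hourly_rate):
    """Cost of the GPU time one call occupies, ignoring idle time and overhead."""
    return latency_s * hourly_rate / 3600

def daily_cost(calls_per_day, cost_per_call):
    return calls_per_day * cost_per_call

per_call_budget = 0.008                                 # figure quoted in the table
raw_per_call = raw_compute_cost_per_call(0.150, 2.0)    # 150 ms on a ~$2/hour A100
budgeted_day = daily_cost(10_000, per_call_budget)      # about $80
always_on_day = 24 * 2.0                                # one instance, 24 hours

print(f"raw GPU time per call: ${raw_per_call:.6f}")
print(f"budgeted per day:      ${budgeted_day:.2f}")
print(f"always-on instance:    ${always_on_day:.2f}")
```

The raw GPU time for a single 150 ms call is far below $0.008, so the per-call budget leaves room for idle capacity, retries, and pre- and post-processing.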

DINOv3-7B is slower (~180 ms per image, and that is at 512x512 rather than 1K), and you also end up juggling multiple specialized models and extra fine-tuning - hidden costs that pile up.

Trying to squeeze this onto consumer GPUs? Without quantization and batching, expect crashes and slowdowns. We cringe every time someone underestimates that.
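A quick estimate of weight memory at different precisions shows why quantization matters on smaller cards. This is a sketch covering the weights only; activations, attention buffers, and framework overhead come on top, and the precision list is an assumption about what your runtime supports.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}

def weight_memory_gb(num_params, precision):
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9

for precision in ("fp32", "fp16", "int8", "int4"):
    print(precision, weight_memory_gb(5e9, precision), "GB")
```

At fp16 the 5B model's weights alone take about 10 GB, which lines up with the ~15-20 GB inference footprint quoted above once activations are included, and explains why an 8 GB or 12 GB consumer card struggles without int8 or int4 weights.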

What This Means for AI Product Builders and Founders

If you’re building anything human-centric - like AR glasses, fitness apps, or telemedicine - stop reinventing the wheel. Sapiens2 is production-ready.

- Developers: Save yourself weeks wiring disparate pose/segmentation models. This multi-task design lets you iterate faster.

- Founders: Ship sooner with a model that scales from 1K to 4K resolution, handling challenging use cases your competitors will struggle with.

- Costs: Figure roughly $0.008 per inference call on A100s and a 30-40% cut on fine-tuning time. Use batching and quantization intelligently to scale efficiently.

Open weights + solid APIs = no need to train from scratch or guesstimate the pretraining steps yourself. We've walked the hard road so you don’t have to.

Frequently Asked Questions

Q: What is Meta AI Sapiens2?

A: It’s Meta’s high-res suite of human-focused vision transformers excelling at pose estimation, body-part segmentation, and surface analysis. Trained on a billion annotated images, it embodies next-level accuracy.

Q: How does Sapiens2 compare to other human-centric models?

A: It beats competitors like DINOv3-7B thanks to a unique unified pretraining objective combining masked reconstruction with contrastive learning.

Q: Can I use Sapiens2 for real-time applications?

A: Yes, within limits. The 5B model runs inference in about 150 ms at 1K resolution on an NVIDIA A100 GPU, which is roughly 6-7 frames per second at batch size 1. That suits near real-time workflows - if you have the beefy hardware.

Q: What are common deployment challenges for Sapiens2?

A: Running large models on CPUs or low-memory GPUs without quantization and batching guarantees delays or crashes. Plan hardware and inference pipelines carefully.

Building AI that understands humans inside-out? We’ve been there. AI 4U ships production-grade AI apps within weeks - no fluff, just results.