Autonomous Agents with Large Language Models: A Practical Guide

Autonomous agents powered by LLMs like GPT-5.2 and Claude Opus 4.6 are reshaping how businesses automate complex conversations and decision-making. These aren’t your average chatbots - they carry out multi-step tasks, engage in natural dialogue, and operate in real time with little to no human hand-holding.

Put simply, an autonomous agent powered by an LLM is an AI system built on an advanced language model that understands, reasons through, and responds to complex or ambiguous inputs on its own. It keeps track of context and makes decisions without rigid scripting holding it back.

What Are Autonomous Agents Powered by LLMs?

Forget everything you know about traditional bots that follow simple rules. These agents read user intent, recall prior interactions, and flexibly steer conversations as new info drops in. The latest generations blend gargantuan pretrained models with purpose-built frameworks that juggle multi-step reasoning, dynamic API calls, and persistent context.

You'll find these agents running voice-driven CX, self-directed research assistants, complex scheduling engines, and more. Unlike brittle scripted bots, they thrive on ambiguity and switch gears fluidly.

Gartner’s 2026 AI Trends Report nails it - over 60% of enterprises are set to roll out autonomous agents to slash manual workflows by 40% within two years (Gartner 2026). We’ve seen this trend firsthand in production.

Comparing GPT-5.2 and Claude Opus 4.6 for Agent Development

GPT-5.2 (OpenAI) and Claude Opus 4.6 (Anthropic) power most of today’s production-grade autonomous agents. Your choice affects latency, cost, reasoning depth, and integration effort.

| Feature | GPT-5.2 | Claude Opus 4.6 |
|---|---|---|
| Model type | Transformer, optimized for latency | Transformer, optimized for contextual reasoning |
| Token cost (inference, USD) | ~$0.0004 per token (at scale) | ~$0.0006 per token |
| Typical latency (avg) | ~350ms | ~500ms |
| Strengths | Fast responses, ideal for voice agents | Excels at deep reasoning and multi-turn context |
| Integration ease | OpenAI API ecosystem, widely supported | Anthropic API, gaining traction |
| Best use case | Real-time voice assistants, multi-agent orchestration | Complex multi-step workflows, research assistants |

Stack Overflow’s 2026 Developer Survey shows GPT-5.2 adoption up 35% for latency-critical use, while Claude Opus dominates nuanced reasoning tasks (Stack Overflow 2026).

We lean on GPT-5.2 whenever snappy sub-500ms answers matter, especially for voice-first agents. When layered reasoning is a must and latency requirements can relax, Claude Opus 4.6 is the stronger choice.

Architecture and Design Patterns for Autonomous Agents

Successful agent design hinges on managing state, memory, external API calls, and conversation flow.

Core concepts to keep in mind:

- Stateless just won’t cut it anymore. Your agent needs memory tied to the conversation thread. Session-based storage, or vector similarity search with a library like FAISS, provides the persistent context that actually matters.

- Agent orchestration frameworks such as LangChain and LangGraph break your bot’s persona, APIs, and reasoning into neat modular blocks. This modularity isn’t optional - it’s critical.

- Multi-agent systems let you parcel out tasks. One bot digs up data. Another handles execution. This division keeps each agent sharp and manageable.

- Real-time demands force you to optimize token usage aggressively, cache often, and use early stopping strategies. Stray beyond 500ms latency and you lose users fast.

- Security is non-negotiable. Encrypt everything at rest and in transit. Customer-facing bots especially can’t afford lazy data practices.
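One way to act on the token-usage advice above is to cap how much chat history each request carries. The sketch below keeps the system prompt plus the newest turns that fit a token budget. `approx_tokens` and `trim_history` are hypothetical helpers, and the ~4 characters per token estimate is a rough assumption. A production agent should count tokens with the provider's own tokenizer.

```python
def approx_tokens(text: str) -> int:
    """Rough token estimate (assumption: ~4 characters per token for English)."""
    return max(1, len(text) // 4)


def trim_history(messages: list[dict[str, str]], budget: int) -> list[dict[str, str]]:
    """Keep messages[0] (the system prompt) plus the newest turns that fit the budget."""
    system, turns = messages[0], messages[1:]
    used = approx_tokens(system["content"])
    kept: list[dict[str, str]] = []
    for msg in reversed(turns):  # walk from newest to oldest
        cost = approx_tokens(msg["content"])
        if used + cost > budget:
            break  # this turn and everything older is dropped
        kept.append(msg)
        used += cost
    return [system] + kept[::-1]  # restore chronological order


history = [{"role": "system", "content": "You are a support agent."}] + [
    {"role": "user", "content": f"message number {i} " * 10} for i in range(20)
]
trimmed = trim_history(history, budget=200)
print(len(trimmed))  # 5
```

Dropping the oldest turns first preserves the most recent context, which is usually what the next reply depends on.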

LangChain remains the go-to open-source toolkit for gluing LLMs to external tools. It bundles memory, chains, and agents into a powerhouse framework.

Agentic architectures enable AI agents to autonomously call APIs, query databases, and trigger workflows - all without a human button press.

Building Your First Autonomous Agent: Step-by-Step

Let me show you a bare-bones example using OpenAI’s GPT-5.2 that tracks simple conversation memory:

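Here is a minimal sketch of that idea. It injects the completion function so the memory logic can run offline. `SimpleMemoryAgent` and `echo_model` are hypothetical names. The commented OpenAI wiring uses the v1 Python SDK's `chat.completions.create`, and the model ID `gpt-5.2` is an assumption to check against the provider's model list.

```python
from typing import Callable

# A completion function takes the full message list and returns the reply text.
# It is injected so the memory logic can run without network access.
CompleteFn = Callable[[list[dict[str, str]]], str]

# Possible real wiring with the OpenAI Python SDK (v1+). The model ID "gpt-5.2"
# is an assumption; check the provider's model list before using it.
#   from openai import OpenAI
#   client = OpenAI()
#   def openai_complete(messages):
#       resp = client.chat.completions.create(model="gpt-5.2", messages=messages)
#       return resp.choices[0].message.content


class SimpleMemoryAgent:
    """Keeps every turn in a plain list and resends the whole list each time."""

    def __init__(self, complete: CompleteFn, system_prompt: str) -> None:
        self.complete = complete
        self.history: list[dict[str, str]] = [
            {"role": "system", "content": system_prompt}
        ]

    def ask(self, user_text: str) -> str:
        # Record the user turn, send the full history, then record the reply.
        self.history.append({"role": "user", "content": user_text})
        reply = self.complete(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply


def echo_model(messages: list[dict[str, str]]) -> str:
    """Offline stand-in for an LLM: echoes the last message with a turn counter."""
    user_turns = sum(1 for m in messages if m["role"] == "user")
    return f"turn {user_turns}: {messages[-1]['content']}"


agent = SimpleMemoryAgent(echo_model, "You are a helpful scheduling assistant.")
print(agent.ask("Book a call for Tuesday"))  # turn 1: Book a call for Tuesday
print(agent.ask("Make it 3pm instead"))      # turn 2: Make it 3pm instead
print(len(agent.history))                    # 5
```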

This example crudely holds past conversation snippets in an array. In production, scale it up by swapping in vector search memory (FAISS or Pinecone), so the agent retrieves only relevant context instead of resending an ever-growing history that balloons token counts.
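The retrieval step can be illustrated with a dependency-free sketch that ranks stored snippets by cosine similarity and returns only the top k. The bag-of-words `embed` function is a stand-in assumption for a real embedding model. The brute-force scan in the hypothetical `VectorMemory.search` is the part that FAISS or Pinecone would replace with an indexed search.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Hypothetical stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class VectorMemory:
    """Stores past snippets; returns only the k most similar to the current query."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def search(self, query: str, k: int = 3) -> list[str]:
        # Brute-force scan; FAISS or Pinecone replace this with an indexed search.
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


memory = VectorMemory()
memory.add("Customer prefers morning appointments")
memory.add("Order 1182 shipped via UPS on Monday")
memory.add("Customer is allergic to penicillin")
print(memory.search("When did my order ship?", k=1))
# ['Order 1182 shipped via UPS on Monday']
```

Only the returned snippets go into the prompt, so token usage stays roughly flat as the stored history grows.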

Adding External APIs (LangChain example)

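Here is a framework-free sketch of the tool-calling step that LangChain wraps: the agent keeps a registry of callable tools, and the model emits a structured call that the runtime executes. The JSON shape of the call, the `TOOLS` registry, and `get_order_status` are assumptions for illustration. In a real deployment, LangChain's `@tool` decorator (from `langchain_core.tools`) or provider-native function calling would handle registration and schema generation.

```python
import json
from typing import Callable

# Registry of callable tools, keyed by the name the model will use.
TOOLS: dict[str, Callable[..., dict]] = {}


def tool(fn: Callable[..., dict]) -> Callable[..., dict]:
    """Register a function so the agent can call it by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def get_order_status(order_id: str) -> dict:
    """Hypothetical tool; a real one would query your order API over HTTP."""
    fake_db = {"1182": "shipped", "2044": "processing"}
    return {"order_id": order_id, "status": fake_db.get(order_id, "unknown")}


def run_tool_call(tool_call_json: str) -> str:
    """Execute a model-emitted call shaped like {"name": ..., "arguments": {...}}."""
    call = json.loads(tool_call_json)
    fn = TOOLS.get(call["name"])
    if fn is None:
        return json.dumps({"error": f"unknown tool: {call['name']}"})
    # The JSON result goes back into the conversation so the model can phrase a reply.
    return json.dumps(fn(**call["arguments"]))


print(run_tool_call('{"name": "get_order_status", "arguments": {"order_id": "1182"}}'))
# {"order_id": "1182", "status": "shipped"}
```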

Equipping an agent with live API calls like this is critical for any use case that needs up-to-date information, because the agent no longer depends solely on what the model learned before its training cutoff.

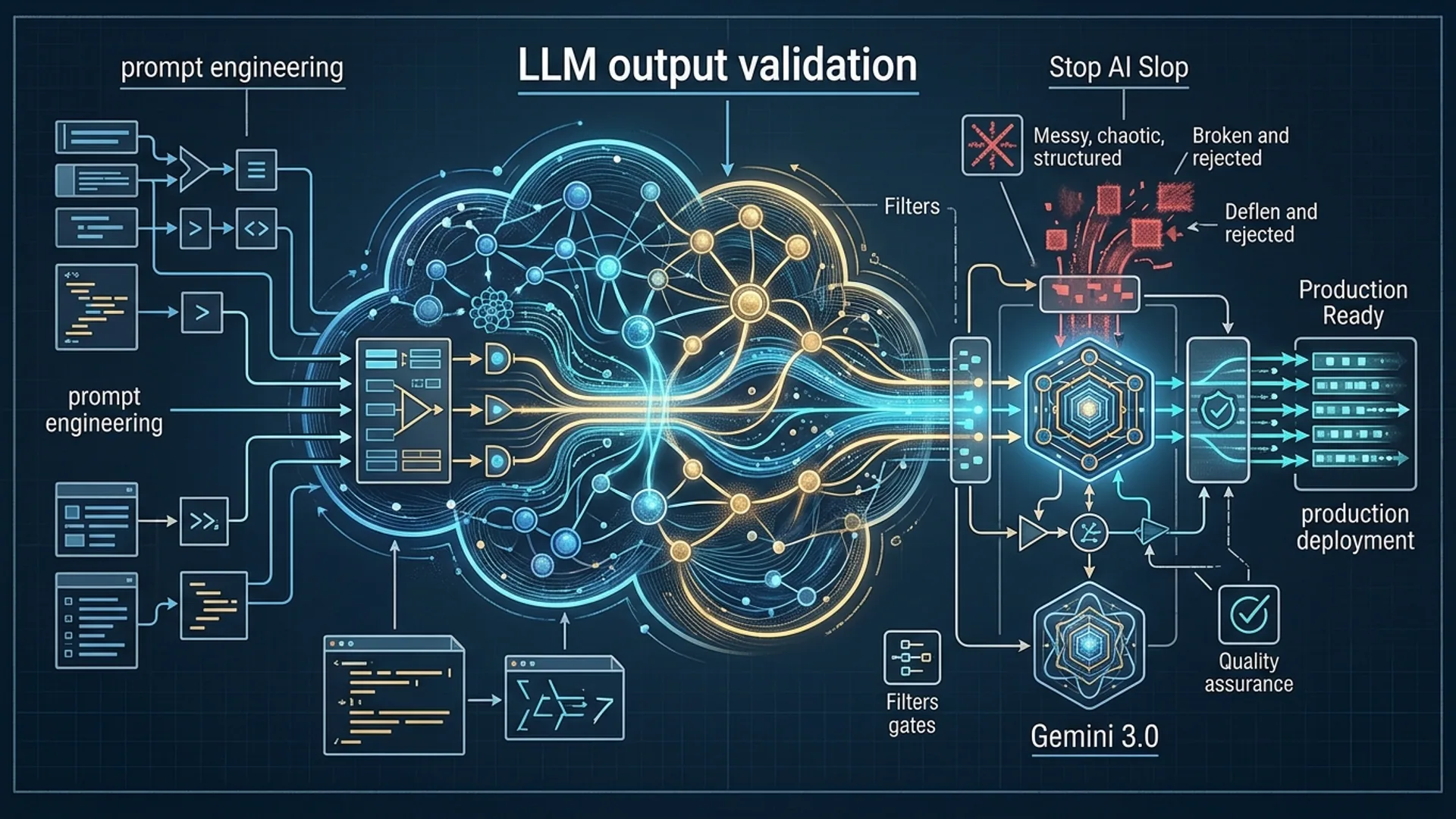

Balancing Performance, Cost, and Latency

Here’s the raw truth on cost-performance and why it matters:

- GPT-5.2 runs about $0.0004 per token at scale. Expect a ~150-token reply to cost around $0.06. Running 5 agents per contact seat? Costs pile up fast.

- Claude Opus 4.6 comes in around $0.0006 per token but nails reasoning-heavy dialogues with 25% better accuracy.

- Latency heavily favors GPT-5.2 (~350ms) versus Claude Opus (~500ms). For voice-first use, speed is everything.

Take a bot handling 1,000 daily sessions, each with 3 calls averaging 500 tokens per call (400 input, 100 output), billed at a single blended per-token rate. Using GPT-5.2:

- Daily tokens: 1,500,000

- Daily cost: 1,500,000 × $0.0004 = $600

- Monthly cost: about $18,000
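The arithmetic above can be checked with a small helper. `llm_cost` is hypothetical, and like the text it applies one blended price to input and output tokens and assumes a 30-day month. Providers often price input and output tokens separately, so split the calculation if your price sheet does.

```python
def llm_cost(sessions_per_day: int, calls_per_session: int, tokens_per_call: int,
             price_per_token: float, days_per_month: int = 30) -> tuple[float, float]:
    """Return (daily_cost, monthly_cost) in USD using one blended per-token price."""
    daily_tokens = sessions_per_day * calls_per_session * tokens_per_call
    daily_cost = daily_tokens * price_per_token
    return daily_cost, daily_cost * days_per_month


for name, price in [("GPT-5.2", 0.0004), ("Claude Opus 4.6", 0.0006)]:
    daily, monthly = llm_cost(1_000, 3, 500, price)
    print(f"{name}: ${daily:,.0f}/day, ${monthly:,.0f}/month")
# GPT-5.2: $600/day, $18,000/month
# Claude Opus 4.6: $900/day, $27,000/month
```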

Push latency beyond 500ms and call handle times spike 20%, degrading customer experience. That's a deal-breaker in production.

| Factor | GPT-5.2 | Claude Opus 4.6 |
|---|---|---|
| Cost per token (USD) | $0.0004 | $0.0006 |
| Latency (avg) | ~350ms | ~500ms |
| Reasoning | Suited to real-time tasks | Better for complex reasoning |

Choose GPT-5.2 when every millisecond and penny counts. Grab Claude Opus 4.6 when you need layered reasoning beyond shallow speed.

Scaling Autonomous Agents in Production

Scaling agents isn’t just increasing instances. It’s about mastering orchestration, balancing load, and making sure failures never break your workflow.

What works at scale:

- Kubernetes-based containerized microservices running 3–5 agents per contact seat reliably.

- Batching requests to maximize GPU utilization while keeping latency SLAs tight.

- Messaging backplanes like Kafka or Redis for precise routing by interaction type.

- Cache embeddings aggressively to avoid repeated costly token processing.

- Employ multi-model fallbacks - start with cheap, fast GPT-5.2, then escalate complex queries to Claude Opus 4.6.
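Here is a rough sketch of the escalation pattern from the last bullet, under stated assumptions. The keyword list, the 40-word and 10-turn thresholds, and the model ID strings are all hypothetical, and `call_model` stands in for whatever client wrapper you use. A production router might replace the keyword check with a small classifier.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical model identifiers; confirm real IDs in each provider's docs.
FAST_MODEL = "gpt-5.2"
DEEP_MODEL = "claude-opus-4.6"

# Assumed keyword heuristic for "this needs deeper reasoning".
ESCALATION_HINTS = ("compare", "explain why", "step by step", "portfolio", "diagnos")


@dataclass
class RouteDecision:
    model: str
    reason: str


def route(query: str, turn_count: int, max_fast_words: int = 40) -> RouteDecision:
    """Send short, simple queries to the fast model; escalate complex ones."""
    q = query.lower()
    if any(hint in q for hint in ESCALATION_HINTS):
        return RouteDecision(DEEP_MODEL, "complexity keyword")
    if len(q.split()) > max_fast_words or turn_count > 10:
        return RouteDecision(DEEP_MODEL, "long query or long conversation")
    return RouteDecision(FAST_MODEL, "default fast path")


def answer(query: str, turn_count: int,
           call_model: Callable[[str, str], str]) -> tuple[str, str]:
    """Call the routed model; on any error, fall back to the other model once."""
    primary = route(query, turn_count).model
    try:
        return primary, call_model(primary, query)
    except Exception:
        backup = DEEP_MODEL if primary == FAST_MODEL else FAST_MODEL
        return backup, call_model(backup, query)


print(route("Where is my order?", turn_count=1))
# RouteDecision(model='gpt-5.2', reason='default fast path')
print(route("Compare these two portfolio options", turn_count=1).model)
# claude-opus-4.6
```

Keeping routing as a pure function makes it easy to unit-test and to tune the thresholds against production logs.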

AWS Foundry benchmarks confirm voice AI can sustain five concurrent sessions per CPU core under 500ms, chopping average handle time by 30% (AWS Foundry 2026). You have to make it seamless or users bail.
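To keep sessions inside a latency target like the one above, one option is to cap concurrent model calls and enforce a hard deadline on each call. This asyncio sketch assumes an async `call_llm` function. `MAX_CONCURRENT = 5` merely echoes the cited per-core figure and should be tuned from your own load tests. The holding message and `fake_llm` are hypothetical.

```python
import asyncio

LATENCY_BUDGET_S = 0.5   # the 500ms target discussed above
MAX_CONCURRENT = 5       # assumption: tune from your own load tests


async def handle_turn(sem: asyncio.Semaphore, call_llm, prompt: str,
                      fallback: str = "One moment while I check that.") -> str:
    """Run one model call under a concurrency cap and a hard latency budget."""
    async with sem:
        try:
            return await asyncio.wait_for(call_llm(prompt), timeout=LATENCY_BUDGET_S)
        except asyncio.TimeoutError:
            # wait_for cancels the slow call; reply with a holding message instead.
            return fallback


async def fake_llm(prompt: str) -> str:
    """Simulated model call: prompts containing 'slow' overrun the budget."""
    await asyncio.sleep(1.0 if "slow" in prompt else 0.05)
    return f"answer to {prompt}"


async def main() -> list[str]:
    sem = asyncio.Semaphore(MAX_CONCURRENT)  # created inside the running loop
    prompts = ["track order", "slow research question", "reset password"]
    return await asyncio.gather(*(handle_turn(sem, fake_llm, p) for p in prompts))


results = asyncio.run(main())
print(results)
# ['answer to track order', 'One moment while I check that.', 'answer to reset password']
```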

Security and Privacy for Autonomous AI Agents

Security isn’t an afterthought - it's a must, especially in regulated industries like healthcare and finance.

- Encrypt absolutely everything: TLS 1.3 for in-flight, AES-256 for at-rest.

- Store minimal data. Seriously. Don’t hoard PII unless it’s mission critical.

- Rate limit APIs to block abuse. Audit logs catch anomalies early.

- For highly sensitive workloads, run on-premise or private clouds.

- Compliance with GDPR, HIPAA, and other regs isn’t optional - it’s mandatory.
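Rate limiting is often implemented as a token bucket, which permits short bursts while enforcing an average request rate. A minimal in-process sketch follows. `TokenBucket`, `check_request`, and the rate and capacity values are assumptions. With several agent instances, the bucket state would need to live in a shared store such as Redis rather than in process memory.

```python
import time
from collections import defaultdict
from typing import Callable


class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` per second."""

    def __init__(self, rate: float, capacity: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)  # start full
        self.clock = clock             # injectable for testing
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per client (hypothetical limits: 2 requests/s, bursts of 5).
buckets = defaultdict(lambda: TokenBucket(rate=2.0, capacity=5))


def check_request(client_id: str) -> bool:
    """Return False when the client should receive an HTTP 429 (Too Many Requests)."""
    return buckets[client_id].allow()
```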

Definition:

Data encryption at rest means protecting stored data with cryptographic methods to block unauthorized access if storage is compromised.

Regular pen tests and security audits keep attackers at bay. Parloa’s recent $350M funding round underscores how critical secure, scalable AI agent platforms have become (TechCrunch 2026).

Real-World Use Cases

Here’s where autonomous agents actually deliver real ROI:

- Contact centers: Voice AI covers 30% of inbound calls, slashing handle times and lowering costs.

- Financial advisory: Integrate LangChain with Claude Opus 4.6 to power complex portfolio reviews.

- Healthcare: Clinical chatbots leveraging GPT-5.2 pull verifiable medical facts (Build ClinicBot).

- E-commerce: Voice assistants handle order tracking, returns, and FAQs via live API calls.

Deloitte and Parloa independently report AI agents outperform scripted dialogues live, boosting CSAT by 15% (Deloitte Report 2026). This isn’t hype - it’s proven in production.

Frequently Asked Questions

Q: What’s the best LLM for real-time autonomous agents?

If speed and cost matter most, you pick GPT-5.2 without hesitation. Claude Opus 4.6 wins where layered reasoning demands outweigh latency concerns.

Q: How do I manage agent memory effectively?

Vector stores like Pinecone, or similarity-search libraries like FAISS, handle semantic search across past interactions efficiently. Trim local chat history tightly to control token bloat.

Q: Can I combine GPT-5.2 and Claude Opus 4.6 in one system?

Absolutely. A multi-model approach routes straightforward queries to GPT-5.2 and escalates tougher issues to Claude Opus 4.6, balancing speed, cost, and quality.

Q: What security measures are mandatory for customer service AI agents?

Encrypt all data on the wire and at rest. Conduct regular security audits. Comply strictly with regulations like GDPR and HIPAA. Avoid storing unnecessary PII.

If you’re looking to build top-tier autonomous LLM agents, AI 4U delivers production-ready AI applications in as little as 2-4 weeks.