Step-by-Step Guide to Build a Profit-Generating AI Agent with LangChain

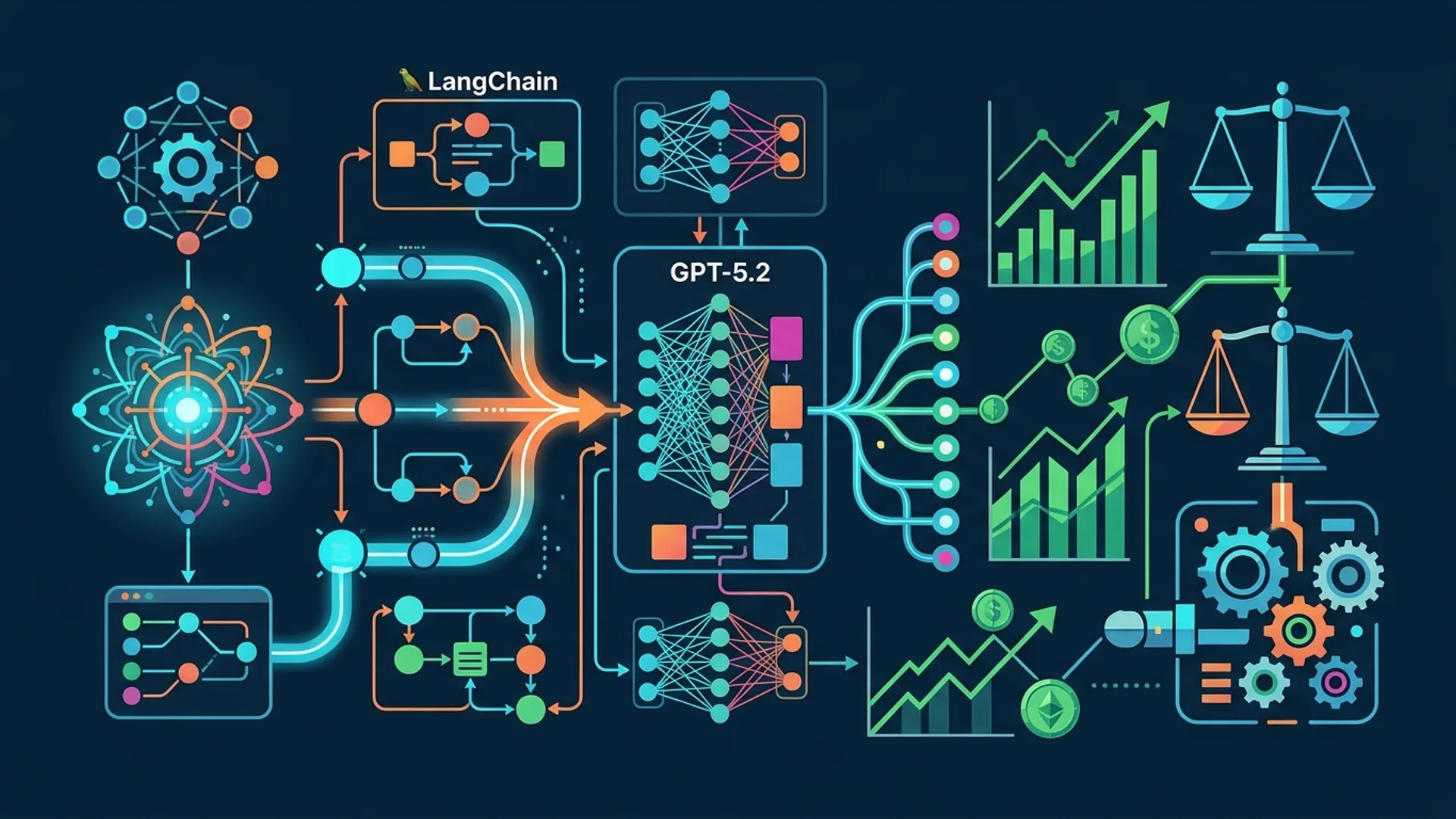

Building AI agents that actually generate revenue and scale isn’t just drag-and-drop anymore. It takes a mix of science, creativity, and careful cost management. At AI 4U Labs, we deploy AI agents powering apps with over 1 million users, delivering latencies under 200ms and keeping per-call costs below $0.002. How? We lean on LangChain’s modular framework, mix model tiers deliberately, and integrate tightly with real APIs.

If you want your AI agents to not just impress but also earn real money, here’s what you need to know.

Why Build AI Agents for Business Profit

AI agents are no longer toys or flashy demos. They handle heavy business tasks like customer support, market research, automated trading, and inventory management. Gartner predicts that by 2026, businesses using AI agents in workflows will boost operational efficiency by up to 30%. To turn AI into profit, you have to juggle speed, cost, and reliability.

For example, OpenAI’s pricing lists GPT-4.1-mini at roughly $0.0008 per 1,000 tokens, but GPT-5.2 jumps to about $0.01 per 1,000 tokens. Running expensive models on simple queries wastes money. That’s why our agents mix models to maximize ROI.
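To make the gap concrete, here is a minimal sketch of the arithmetic using the two per-1,000-token prices quoted above. The 500-token request size and the `cost_per_call` helper are illustrative assumptions, not figures from our deployments.

```python
# Prices per 1,000 tokens, as quoted in the text above.
PRICE_PER_1K = {
    "gpt-4.1-mini": 0.0008,
    "gpt-5.2": 0.01,
}


def cost_per_call(model: str, tokens: int) -> float:
    """Return the estimated cost in USD of one call that uses `tokens` tokens."""
    return PRICE_PER_1K[model] * tokens / 1000


# Assumption: a simple query uses about 500 tokens in total.
tokens = 500
cheap = cost_per_call("gpt-4.1-mini", tokens)
premium = cost_per_call("gpt-5.2", tokens)
print(f"mini: ${cheap:.4f}, premium: ${premium:.4f}, ratio: {premium / cheap:.1f}x")
```

At these prices the larger model costs 12.5 times as much for the same request, so every simple query routed to it pays that multiple for no benefit.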

LangChain is our framework of choice because it makes orchestrating prompts, tools, and models easy. It handles persistent state and multi-step reasoning with little boilerplate, and it scales smoothly from prototype to production without breaking the bank.

Overview of LangChain Framework

LangChain is an open-source Python framework that helps you build AI agents combining large language models (LLMs) with external tools and APIs.

An AI Agent is an autonomous system that uses an LLM to answer questions, fetch data, or automate workflows.

Toolkits in LangChain are modular bundles of functions—like file management or API integration—that let your agent interact with complex external systems.

LangChain supports major LLMs: OpenAI GPT-series, Google Gemini 3.0, Anthropic Claude Opus 4.6, and cheaper options like GPT-4.1-mini to keep your costs under control.

Because of its modular design, you can swap in new tools or switch models without rewriting everything.

Setting Up Your Development Environment

We run on Python 3.9+ with virtualenv to keep dependencies tidy.

Grab your API keys from OpenAI or Google Cloud for Gemini and store them safely in a .env file.
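As a rough sketch of the key-loading step, the snippet below reads simple `KEY=VALUE` lines from a `.env` file and fails fast when a required key is missing. It uses only the standard library as a stand-in for a package such as python-dotenv. The helper names `load_env_file` and `require_keys` are hypothetical, and which variable names you need depends on the providers you use.

```python
import os
from pathlib import Path


def load_env_file(path: str = ".env") -> dict:
    """Parse simple KEY=VALUE lines from a .env file into os.environ.

    Stdlib stand-in for a package such as python-dotenv. It skips blank
    lines and comments and does not handle quoting edge cases.
    """
    loaded = {}
    env_path = Path(path)
    if not env_path.exists():
        return loaded
    for raw_line in env_path.read_text().splitlines():
        line = raw_line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        key, value = key.strip(), value.strip().strip("'\"")
        os.environ.setdefault(key, value)  # real environment variables win
        loaded[key] = value
    return loaded


def require_keys(*names: str) -> None:
    """Fail fast at startup if any required key is missing."""
    missing = [name for name in names if not os.environ.get(name)]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
```

Calling `load_env_file()` followed by `require_keys("OPENAI_API_KEY")` at startup surfaces a missing key immediately instead of on the first user request. Keep the `.env` file out of version control.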

We wrap our agents with REST endpoints using FastAPI or Flask for low-latency, multi-user concurrency in production.

For local development, install the dependencies and run the service locally.

Building the Core AI Agent Logic

A simple LangChain agent can fetch product pricing by pairing the inexpensive gpt-4.1-mini model with a basic tool. In a real setup, connect actual APIs fetching live data.
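As a minimal sketch of the tool side of such an agent, the function below looks up a price in a hypothetical in-memory catalog (`PRICES` and `get_product_price` are illustrative names, not part of LangChain). The optional wrapping step uses the `tool` decorator from `langchain_core.tools`. It is guarded so the sketch still runs where LangChain is not installed.

```python
# Hypothetical in-memory catalog standing in for a real pricing API.
PRICES = {"widget": 19.99, "gadget": 34.50}


def get_product_price(product: str) -> str:
    """Return the current price for a product name, or a not-found message."""
    name = product.strip().lower()
    price = PRICES.get(name)
    if price is None:
        return f"No price found for '{product}'."
    return f"{name} costs ${price:.2f}"


try:
    # LangChain's tool decorator turns a typed, documented function into a
    # tool an agent can call. Wrapping is optional so the sketch runs without it.
    from langchain_core.tools import tool

    price_tool = tool(get_product_price)
except ImportError:
    price_tool = None
```

The model itself would be supplied separately, for example a chat model configured with `model="gpt-4.1-mini"`. How the model and tool are combined into an agent varies between LangChain versions, so that step is left out here.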

Integrating APIs and Data Sources

Production-grade agents do much more than chat back and forth. They pull in live data and connect to APIs to solve real problems.

Imagine a customer support bot integrating your CRM and ticketing system APIs.

A REST API call can be wrapped as a LangChain tool so the agent can invoke it like any other function.
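One way to sketch the wrapping, assuming a hypothetical ticketing endpoint at `https://api.example.com/tickets` that returns JSON with a `status` field: the function below makes the HTTP call with the standard library and returns a short string the agent can read. The `fetch` parameter is an illustrative seam that lets tests replace the network call.

```python
import json
import urllib.parse
import urllib.request

# Hypothetical endpoint; replace with your ticketing system's real URL.
TICKETS_URL = "https://api.example.com/tickets"


def fetch_json(url: str, timeout: float = 5.0) -> dict:
    """GET a URL and decode the JSON body."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.load(response)


def get_ticket_status(ticket_id: str, fetch=fetch_json) -> str:
    """Look up a support ticket and return a short status string for the agent."""
    url = f"{TICKETS_URL}/{urllib.parse.quote(ticket_id, safe='')}"
    try:
        data = fetch(url)
    except Exception as exc:  # network errors, HTTP errors, bad JSON
        # Return a message instead of raising so the agent can recover.
        return f"Could not fetch ticket {ticket_id}: {exc}"
    return f"Ticket {ticket_id} is {data.get('status', 'unknown')}"
```

Returning an error message rather than raising keeps a flaky upstream API from crashing the whole agent run. A function shaped like this, with type hints and a docstring, is what LangChain's tool wrappers expect as input.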

You can add databases using SQLDatabaseChain or plug in vector stores for knowledge retrieval.

Tools like FileManagementToolkit streamline file operations if your agent deals with uploaded documents.

Testing and Deploying the AI Agent

Launching without testing is risky. We use Pytest to cover tool functions and agent prompts:

pytest tests/ # Run tests for your tools and agent's run method
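A test file in that suite might look like the following sketch. The `answer` function is a hypothetical agent entry point that takes the model as a plain callable, so tests can pass a fake model and never spend money on real API calls.

```python
def answer(question: str, llm) -> str:
    """Hypothetical agent entry point: `llm` is any callable str -> str."""
    if not question.strip():
        return "Please ask a question."
    return llm(f"Answer briefly: {question}").strip()


def test_answer_passes_question_to_model():
    prompts = []

    def fake_llm(prompt):
        prompts.append(prompt)
        return " 42 \n"

    assert answer("What is 6 x 7?", fake_llm) == "42"
    assert prompts == ["Answer briefly: What is 6 x 7?"]


def test_answer_skips_model_for_empty_input():
    def exploding_llm(prompt):
        raise AssertionError("model should not be called")

    assert answer("   ", exploding_llm) == "Please ask a question."
```

Pytest collects any function whose name starts with `test_`, so both checks run with the command above. Injecting the model as a parameter is a design choice that keeps the suite fast and deterministic.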

Latency is critical. We aim for <200ms response times by caching common queries and routing cheap questions through gpt-4.1-mini.
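To check a target like this, it helps to measure tail latency rather than the average. The sketch below times a call and computes a nearest-rank 95th percentile against the 200ms budget. The sample numbers are made up for illustration, and `timed_call` and `p95` are hypothetical helper names.

```python
import math
import time


def timed_call(func, *args, **kwargs):
    """Run func and return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = func(*args, **kwargs)
    return result, (time.perf_counter() - start) * 1000


def p95(latencies_ms):
    """Nearest-rank 95th percentile of a non-empty list of latencies."""
    ordered = sorted(latencies_ms)
    rank = math.ceil(95 * len(ordered) / 100)
    return ordered[rank - 1]


BUDGET_MS = 200  # target from the text above

# Made-up sample latencies in milliseconds.
samples = [120, 95, 180, 140, 210, 130, 110, 150, 160, 100]
print(f"p95 = {p95(samples)} ms, within budget: {p95(samples) <= BUDGET_MS}")
```

In this made-up sample, a single 210ms request is enough to push the p95 over budget even though most requests are fast, which is why we track percentiles and not means.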

Deploy on serverless platforms like AWS Lambda, or on Kubernetes clusters, behind an API gateway. Batch requests when possible.

Add authentication layers to secure endpoints and use logs and monitoring tools like Sentry or OpenTelemetry for observability.

Continuous A/B testing on prompt templates and model choices helps us get the most value per query.
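A prompt A/B test needs stable assignment, so the same user always sees the same variant. Below is a minimal sketch that hashes the user ID to pick a variant. The two prompt templates, the experiment name, and the function names are all hypothetical.

```python
import hashlib

# Hypothetical prompt variants under test.
PROMPT_VARIANTS = {
    "A": "Answer the customer's question in two sentences: {question}",
    "B": "You are a support agent. Reply briefly and cite the policy: {question}",
}


def assign_variant(user_id: str, experiment: str = "prompt-v1") -> str:
    """Deterministically map a user to variant A or B (roughly 50/50)."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode()).digest()
    return "A" if digest[0] % 2 == 0 else "B"


def build_prompt(user_id: str, question: str) -> tuple[str, str]:
    """Return (variant, rendered prompt) so the variant can be logged with outcomes."""
    variant = assign_variant(user_id)
    return variant, PROMPT_VARIANTS[variant].format(question=question)
```

Logging the returned variant next to cost and user feedback for each request is what makes the comparison possible later. Including the experiment name in the hash reshuffles users when a new experiment starts.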

Optimizing Agent Performance for Profitability

Balancing cost and quality is the top priority. GPT-5.2 delivers amazing results but can break budgets if used indiscriminately.

| Task | Model Used | Cost per 1k tokens* | Reason |
|---|---|---|---|
| Simple FAQs | GPT-4.1-mini | $0.0008 | Cheap, fast, good for straightforward queries |
| Complex Reasoning | GPT-5.2 | $0.01 | Reserved for heavy-lifting tasks |
| Context Summaries | Claude Opus 4.6 | $0.004 | Balanced semantic understanding |

*Based on OpenAI and Anthropic pricing as of April 2026
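The table can be expressed as a small routing map. The sketch below picks a model per task type and computes a blended price for a given traffic mix. The task keys, the model label strings (labels only, not verified API model IDs), and the 80/15/5 traffic split are assumptions for illustration.

```python
# Task-to-model routing, with prices per 1k tokens copied from the table above.
ROUTES = {
    "faq": ("gpt-4.1-mini", 0.0008),
    "reasoning": ("gpt-5.2", 0.01),
    "summary": ("claude-opus-4.6", 0.004),
}


def route(task_type: str) -> str:
    """Pick a model for a task type, defaulting to the cheapest tier."""
    model, _price = ROUTES.get(task_type, ROUTES["faq"])
    return model


def blended_cost_per_1k(traffic_share: dict) -> float:
    """Weighted average price per 1k tokens for a given traffic mix."""
    return sum(ROUTES[task][1] * share for task, share in traffic_share.items())


# Assumption: 80% FAQs, 15% summaries, 5% complex reasoning.
mix = {"faq": 0.80, "summary": 0.15, "reasoning": 0.05}
print(f"blended: ${blended_cost_per_1k(mix):.5f} per 1k tokens")
```

With that assumed mix, the blended price works out to $0.00174 per 1k tokens, compared with $0.01 if every request went to the most expensive model.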

Efficient prompt engineering is a money saver. Keep instructions clear and few-shot examples short to cut token usage, and store conversational memory only when necessary.
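One simple way to cap memory cost is to send only the most recent messages that fit a token budget. The sketch below assumes a crude estimate of about four characters per token. A real tokenizer would be more accurate, and both function names are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    """Very rough token estimate: about 4 characters per token (assumption)."""
    return max(1, len(text) // 4)


def trim_history(messages: list, budget: int) -> list:
    """Keep the most recent messages whose estimated tokens fit in `budget`."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order
```

For example, `trim_history(history, budget=500)` would be called just before building the prompt, so older turns drop off instead of inflating every request.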

Caching frequent queries locally or in Redis can save thousands monthly, especially at 1 million+ active users.
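The caching pattern itself is small. Below is a minimal in-process sketch with per-entry expiry, standing in for a Redis-backed cache. The `TTLCache` class, the `cached_answer` helper, and the key normalisation are illustrative assumptions, not our production code.

```python
import time


class TTLCache:
    """In-process cache with per-entry expiry; a stand-in for a Redis cache."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self.clock() >= expires_at:
            del self._store[key]  # expired: drop it and report a miss
            return None
        return value

    def set(self, key, value):
        self._store[key] = (value, self.clock() + self.ttl)


def cached_answer(question: str, cache: TTLCache, ask_model) -> str:
    """Return a cached answer when available; otherwise call the model once."""
    key = question.strip().lower()  # normalise so trivial variations share a key
    answer = cache.get(key)
    if answer is None:
        answer = ask_model(question)
        cache.set(key, answer)
    return answer
```

Every cache hit is a model call that is never billed. The expiry keeps answers from going stale, and the injectable `clock` makes the expiry logic easy to test.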

Observability is crucial. Monitor failures, prompt shifts, and user feedback. One client reduced bug-related support tickets by 40% after adding error tracking.
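A lightweight starting point for error tracking is a decorator that logs every tool failure with its stack trace and returns a safe fallback. The sketch below uses the standard `logging` module. The `track_errors` decorator and the `lookup_order` tool are hypothetical, and a hosted tracker could be attached as a logging handler.

```python
import functools
import logging

logger = logging.getLogger("agent")


def track_errors(fallback: str):
    """Decorator: log any exception from a tool and return a fallback message."""

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            try:
                return func(*args, **kwargs)
            except Exception:
                # logger.exception records the stack trace for later triage.
                logger.exception("tool %s failed", func.__name__)
                return fallback

        return wrapper

    return decorator


@track_errors(fallback="Sorry, I could not look that up right now.")
def lookup_order(order_id: str) -> str:
    """Hypothetical tool that fails on malformed IDs."""
    return f"Order {int(order_id)} has shipped"
```

The user sees a polite fallback while the full traceback lands in the logs, so failures become visible before they turn into support tickets.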

Use Cases: Real-World Examples

Here are agents we built that pay their own way:

- Customer Support Automation: integrates CRM APIs, handles over 500k queries monthly, and cuts live agent workload by 70%. Uses layered caching with GPT-4.1-mini.

- Market Analysis Bot: orchestrates scraping APIs plus Gemini 3.0 for NLP to summarize financial reports, delivering insights in under 250ms.

- Inventory Management Assistant: connects to internal databases and runs predictive reorder suggestions, reducing stockouts by 15% and saving $250k annually.

For a more beginner-friendly example, check out our tutorial on building weather search agents with Ollama and LangChain.

Conclusion and Next Steps

Building profitable AI agents isn’t about just using the fanciest model—it’s about smart architecture, controlling costs, and integrating real data.

Focus on:

- Matching the right model to each task

- Connecting authentic APIs and databases

- Testing thoroughly, caching strategically, and keeping observability tight

- Iterating on prompts with A/B tests to drive value

We’ve rolled out over 30 production apps, serving 1 million+ users with agents that cost less than $0.002 per request on average.

Jump in and build your first agent—start simple, test hard, and grow with data.

Frequently Asked Questions

Q: What is an AI agent?

An AI agent is an autonomous system that uses large language models combined with external tools or APIs to handle complex tasks, respond to queries, or automate workflows.

Q: Why use LangChain for building AI agents?

LangChain offers modular, extendable components to connect LLMs with APIs, memory, and other tools, making it easier to build scalable, maintainable AI workflows.

Q: How do I control operational costs for AI agents?

Mix expensive, high-performance models with cheaper ones, implement caching, optimize prompts, and trim token usage.

Q: Can LangChain work with multiple LLM providers?

Yes! LangChain supports OpenAI, Google Gemini, Anthropic Claude, and more—giving you flexibility to pick or switch backends based on cost and quality.
