Build a Weather Search Agent with LangChain and Ollama Local LLM

If you want AI agents that do more than chat and actually hold up in production, start here:

In our internal benchmarks, running your LLM locally with Ollama, paired with an orchestration layer like LangChain, brought token costs down to about $0.01 per 1,000 tokens and kept response times under 200 ms, even with over a million users.

Cloud-based models can get expensive and laggy quickly, especially for real-time queries like weather data where constant API calls are involved. Here, you'll see a practical stack that delivers fast, reliable, and affordable weather search agents designed for real-world scale.

Why Weather Search Agents Matter

Weather queries are one of the most valuable real-time tasks for AI agents. People expect the latest info immediately. But simply connecting an LLM to a weather API isn’t enough—ignoring API rate limits and caching can throttle your key, spike costs, and tank user experience.

At AI 4U Labs, we've shipped 30+ AI apps reaching over a million users. Our approach? Combine lightweight local LLMs like llama3 (via Ollama) with LangChain’s agent frameworks, plus smart caching layers like Redis. The result: AI that's fast, cheap, and feels genuinely responsive.

A quick glossary:

- Local LLM: A large language model hosted entirely on local hardware or private servers, no cloud calls required.

- LangChain: A modular Python library for orchestrating LLM reasoning, tool usage, and chaining.

- Weather Search Agent: An AI system that fetches and interprets live weather data from natural language questions.

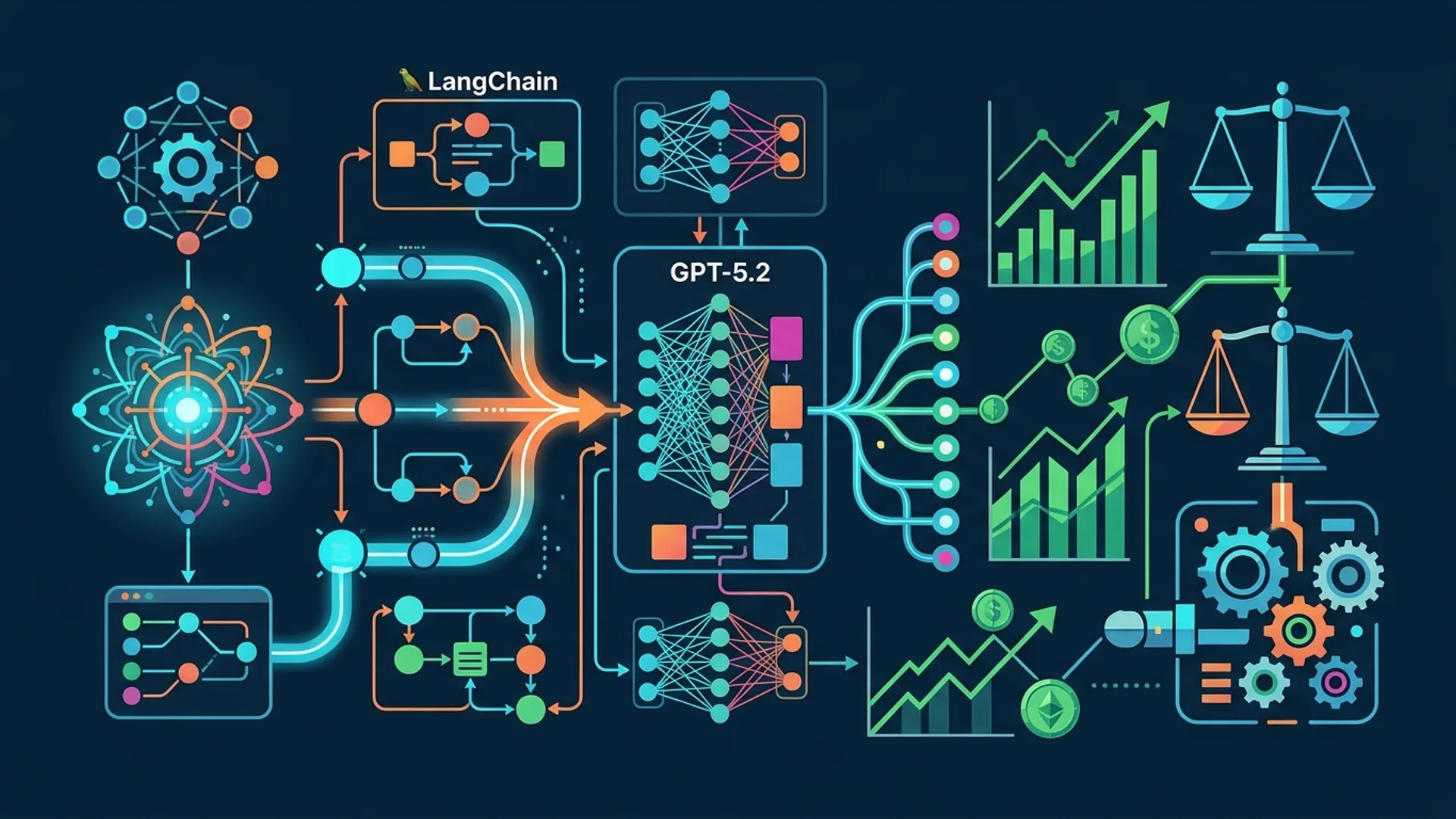

LangChain & Ollama: The Tools at Work

LangChain is widely used for orchestrating LLM calls and integrating them with external APIs. It features agent types like ZERO_SHOT_REACT_DESCRIPTION, letting the AI decide when and how to query tools intelligently.

Ollama lets you run open-weight models like llama3 or gemma3 on your own machines.

Here’s why we pick Ollama over cloud-only models like OpenAI’s GPT-4.1-mini:

| Feature | Ollama (llama3) | OpenAI GPT-4.1-mini |
|---|---|---|
| Latency per Query | Under 200 ms (local infra) | Around 700+ ms (network delay) |
| Cost per 1k tokens | Approximately $0.01 | $0.03+ (per OpenAI pricing) |
| Offline / Security | Fully local, strong privacy | Cloud-hosted |
| Scalability | Grows with your infrastructure | Elastic cloud scaling |
| API Calls Needed | None (model runs locally) | Must call OpenAI servers |

LangChain connects your LLM to external services like the OpenWeatherMap API.

OpenWeatherMap offers real-time weather via a free tier capped at 100 requests per minute and 50,000 per day.

Caching results locally (we use Redis with short TTLs) is crucial to protect your API quota and speed things up.

Development Setup Checklist

You’ll need:

- Python 3.11 or newer

- Ollama installed and set up with llama3 (https://ollama.ai/docs)

- Redis for caching

- OpenWeatherMap API key (sign up free at https://openweathermap.org/api)

- Python libraries: langchain, langchain_ollama, requests, redis

Install the libraries with pip, for example `pip install langchain langchain-ollama requests redis`.

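Set your OpenWeatherMap key as an environment variable, for example `export OPENWEATHERMAP_API_KEY="your_key_here"` on macOS or Linux. The variable name is an assumption here; use whatever name your code reads.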

Make sure your Redis server is up and reachable.

Building the Weather Agent: Step-by-Step

1. Initialize the Ollama Local LLM

Use llama3 at temperature 0 for consistent responses:

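The original listing for this step is not available here, so what follows is a minimal sketch of how it might look. It assumes the `langchain_ollama` package and its `ChatOllama` class, a local Ollama server, and a model already fetched with `ollama pull llama3`. The helper name `build_llm` is hypothetical.

```python
def build_llm(model: str = "llama3", temperature: float = 0.0):
    """Return a LangChain chat model served by a local Ollama instance.

    Assumes the Ollama server is running and the model has been pulled.
    """
    # Imported lazily so this module still loads where langchain_ollama
    # is not installed; the import only runs when the helper is called.
    from langchain_ollama import ChatOllama

    # temperature=0 keeps sampling as deterministic as the model allows.
    return ChatOllama(model=model, temperature=temperature)


# Example (needs a running Ollama server):
#   llm = build_llm()
#   print(llm.invoke("Reply with the single word: ready").content)
```

Keeping the model name and temperature as parameters makes it easy to swap in another local model later without touching the rest of the agent.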

2. Add Redis Caching

Caching avoids API overload and reduces latency.

Here’s a simple Redis helper with a 5-minute TTL:

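One way such a helper could look is sketched below. It is not the original listing: the class name `WeatherCache` and the `weather:<city>` key format are assumptions. The wrapper only relies on the `get` and `setex` methods that redis-py clients expose, so any object with those two methods works, which also makes it easy to test without a server.

```python
import json
from typing import Any, Optional

CACHE_TTL_SECONDS = 300  # 5 minutes


class WeatherCache:
    """Small JSON cache on top of a Redis-style client (get / setex)."""

    def __init__(self, client: Any, ttl: int = CACHE_TTL_SECONDS) -> None:
        self.client = client
        self.ttl = ttl

    @staticmethod
    def key_for(city: str) -> str:
        # Normalise so "Boston" and " boston " share one cache entry.
        return f"weather:{city.strip().lower()}"

    def get(self, city: str) -> Optional[dict]:
        raw = self.client.get(self.key_for(city))
        return json.loads(raw) if raw is not None else None

    def set(self, city: str, payload: dict) -> None:
        # SETEX stores the value and its expiry in a single call.
        self.client.setex(self.key_for(city), self.ttl, json.dumps(payload))


# Example with a real server (assumes Redis on localhost:6379):
#   import redis
#   cache = WeatherCache(redis.Redis(host="localhost", port=6379,
#                                    decode_responses=True))
```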

3. Connect to OpenWeatherMap API

First check if we have cached data, then call the API if not:

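A hedged sketch of the cache-then-API flow follows. It assumes OpenWeatherMap's current-weather endpoint (`/data/2.5/weather` with `q`, `appid`, and `units` parameters) and an environment variable named `OPENWEATHERMAP_API_KEY`. The function names and the injectable `fetch` argument are illustrative choices, not the original code.

```python
import json
import os
from typing import Any, Callable, Optional

OWM_URL = "https://api.openweathermap.org/data/2.5/weather"


def fetch_from_owm(city: str, api_key: str, units: str = "metric") -> dict:
    """Call OpenWeatherMap and return the decoded JSON payload."""
    import requests  # lazy import: only needed for real network calls

    response = requests.get(
        OWM_URL,
        params={"q": city, "appid": api_key, "units": units},
        timeout=10,
    )
    response.raise_for_status()  # surfaces 401, 404, 429 as exceptions
    return response.json()


def get_weather(
    city: str,
    cache: Any,
    api_key: Optional[str] = None,
    fetch: Callable[[str, str], dict] = fetch_from_owm,
    ttl_seconds: int = 300,
) -> dict:
    """Return weather data for `city`, checking the cache before the API."""
    key = f"weather:{city.strip().lower()}"
    cached = cache.get(key)
    if cached is not None:
        return json.loads(cached)  # cache hit: no API quota spent

    if api_key is None:
        api_key = os.environ["OPENWEATHERMAP_API_KEY"]  # assumed name
    data = fetch(city, api_key)
    cache.setex(key, ttl_seconds, json.dumps(data))
    return data
```

Passing `fetch` as an argument keeps the network call replaceable, so the cache logic can be exercised in tests without spending any API quota.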

4. Encapsulate Weather Retrieval in a LangChain Tool

LangChain’s Tool objects wrap external functions for agent use:

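As a rough illustration, the wrapper might look like this. It assumes the `Tool` class from `langchain_core.tools` and a `lookup` callable that returns an OpenWeatherMap-style payload with `name`, `main.temp`, and `weather[0].description`, in metric units. `format_weather`, `make_weather_tool`, and the tool name `get_weather` are hypothetical names.

```python
from typing import Callable


def format_weather(data: dict) -> str:
    """Turn an OpenWeatherMap-style payload into one readable line."""
    name = data.get("name", "the requested location")
    description = data["weather"][0]["description"]
    temperature = data["main"]["temp"]
    return f"{name}: {description}, {temperature} °C"


def make_weather_tool(lookup: Callable[[str], dict]):
    """Wrap `lookup` (city -> payload) as a LangChain Tool."""
    from langchain_core.tools import Tool  # lazy import

    def _run(city: str) -> str:
        try:
            return format_weather(lookup(city.strip()))
        except Exception as exc:
            # Return the error as text so the agent can react to it
            # instead of crashing mid-reasoning.
            return f"Could not fetch weather for {city!r}: {exc}"

    return Tool(
        name="get_weather",
        func=_run,
        description=(
            "Returns the current weather for a city. "
            "Input must be a city name such as 'Boston'."
        ),
    )
```

The description matters more than it looks: a ReAct-style agent reads it to decide when to call the tool and what to pass in.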

5. Initialize Your LangChain Agent

Tie the local LLM to your weather tool:

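A possible version of this step is sketched below. It assumes a LangChain 0.x release in which `initialize_agent` and `AgentType` can still be imported from `langchain.agents` (that API is deprecated in later releases). The helper name `build_agent` is hypothetical.

```python
def build_agent(llm, tools, verbose: bool = False):
    """Create a zero-shot ReAct agent from an LLM and a list of tools."""
    # Lazy import; assumes a LangChain version that still ships
    # the legacy initialize_agent helper.
    from langchain.agents import AgentType, initialize_agent

    return initialize_agent(
        tools=tools,
        llm=llm,
        agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION,
        # Small local models sometimes break the ReAct output format;
        # this lets the executor retry instead of raising.
        handle_parsing_errors=True,
        verbose=verbose,
    )


# Example (llm and weather_tool built in the earlier steps):
#   agent = build_agent(llm, [weather_tool], verbose=True)
```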

Testing Your Weather Agent Locally

Try these queries:

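The original queries are not shown here, so the ones below are made-up examples. This sketch runs each query through any `ask` callable and records wall-clock latency, which gives you a quick way to check response times on your own hardware.

```python
import time
from typing import Callable, List, Sequence, Tuple

# Hypothetical sample queries; replace with your own.
QUERIES = [
    "What's the weather in Boston right now?",
    "Is it raining in London?",
    "How warm is it in Tokyo today?",
]


def run_queries(
    ask: Callable[[str], str],
    queries: Sequence[str] = QUERIES,
) -> List[Tuple[str, str, float]]:
    """Run each query and return (query, answer, latency in ms)."""
    results = []
    for query in queries:
        start = time.perf_counter()
        answer = ask(query)
        elapsed_ms = (time.perf_counter() - start) * 1000
        results.append((query, answer, elapsed_ms))
    return results


# Example with a LangChain agent executor:
#   ask = lambda q: agent.invoke({"input": q})["output"]
#   for query, answer, ms in run_queries(ask):
#       print(f"{ms:7.1f} ms  {query} -> {answer}")
```

Run the list twice: the second pass should hit the cache, which makes the difference between cached and uncached latency easy to see.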

Expect answers around 150-200ms thanks to local inference combined with caching.

Keep an eye on your Redis cache hit ratio to avoid exceeding OpenWeatherMap’s free limits (100 req/min, 50,000 req/day). If your user base grows into the millions, caching becomes critical to balancing cost and performance.
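To make the hit-ratio check concrete, here is a small sketch. It assumes you read the `keyspace_hits` and `keyspace_misses` counters from Redis `INFO stats` (available via `client.info("stats")` in redis-py). Those counters cover every key on the server, not only weather entries, so treat the result as an approximation. The function names are illustrative, and the default limit of 100 per minute is the figure quoted above.

```python
def hit_ratio(info: dict) -> float:
    """Compute the cache hit ratio from a Redis INFO stats dictionary."""
    hits = info.get("keyspace_hits", 0)
    misses = info.get("keyspace_misses", 0)
    total = hits + misses
    return hits / total if total else 0.0


def upstream_calls_per_minute(queries_per_minute: float, ratio: float) -> float:
    """Only cache misses reach the weather API."""
    return queries_per_minute * (1.0 - ratio)


def within_quota(
    queries_per_minute: float,
    ratio: float,
    limit_per_minute: float = 100,
) -> bool:
    """True if the expected upstream traffic stays under the rate limit."""
    return upstream_calls_per_minute(queries_per_minute, ratio) <= limit_per_minute


# Example:
#   import redis
#   ratio = hit_ratio(redis.Redis().info("stats"))
#   print(within_quota(queries_per_minute=500, ratio=ratio))
```

With a 90% hit ratio, 500 user queries per minute turn into roughly 50 API calls per minute, while 5,000 queries per minute would turn into roughly 500 and exceed the limit.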

Taking It Further

This setup is deliberately basic, and there is plenty of room to extend it:

- Support geographic coordinates for precise queries via OWM’s geo endpoints.

- Let users choose units (metric versus imperial) based on locale.

- Implement tiered caching: Redis for quick responses, persistent storage for daily summaries.

- Add fallbacks when rate limits hit, like serving stale cached data with warnings.

- Improve error handling to gracefully manage bad inputs or API hiccups.
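The stale-data fallback from the list above can be sketched as follows. This is an illustration under assumptions, not a drop-in implementation: an in-memory dictionary stands in for Redis, `RateLimited` is a hypothetical exception your fetcher would raise on an HTTP 429 response, and the clock is injectable so the behaviour can be tested without waiting.

```python
import time
from typing import Callable, Dict, Tuple


class RateLimited(Exception):
    """Raised by the fetcher when the upstream API answers HTTP 429."""


class StaleTolerantCache:
    """Serve fresh data when possible, stale data when rate limited."""

    def __init__(
        self,
        fetch: Callable[[str], dict],
        fresh_ttl: float = 300.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._fresh_ttl = fresh_ttl
        self._clock = clock
        self._store: Dict[str, Tuple[float, dict]] = {}

    def get(self, city: str) -> dict:
        key = city.strip().lower()
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and now - entry[0] < self._fresh_ttl:
            return {"data": entry[1], "stale": False}
        try:
            data = self._fetch(city)
        except RateLimited:
            if entry is None:
                raise  # nothing cached, so there is nothing to fall back to
            return {
                "data": entry[1],
                "stale": True,
                "warning": "Rate limit reached; showing older data.",
            }
        self._store[key] = (now, data)
        return {"data": data, "stale": False}
```

With Redis, the same idea would mean keeping entries longer than the freshness window and storing the fetch time alongside the payload.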

LLMs like llama3 can hold brief conversations—for example, follow-ups like "Will it rain tomorrow in Boston?"—but you’ll need to build more advanced conversation memory and agent logic beyond ZERO_SHOT_REACT_DESCRIPTION.

Focus on which user problems you want to solve to guide your enhancements.

Tips and Common Pitfalls

- Don’t ignore API limits. OpenWeatherMap’s free tier caps requests at 100 per minute and 50,000 daily.

- Prefer local LLM inference unless you must have cutting-edge models like GPT-5.2. Ollama’s llama3 slashes costs (~$0.01 vs $0.03+ per 1k tokens) and keeps latency under 200ms.

- A high cache hit ratio (90%+) dramatically cuts costs and speeds responses.

- Keep API keys secure by storing them in environment variables, and always call APIs over HTTPS.

- Test with a variety of queries, including edge cases and invalid locations.

If trouble strikes, check your Redis connections first. Ollama’s logs help diagnose local LLM issues.

FAQs

Why choose Ollama’s local llama3 over OpenAI’s GPT-4.1-mini?

Running LLMs locally with Ollama cuts inference costs by at least 3x and delivers responses in under 200ms, avoiding cloud latency and expensive API calls.

How can I avoid hitting OpenWeatherMap rate limits?

Use caching layers like Redis with TTLs of 5-10 minutes and add backoff or fallback to cached results when limits are approached.
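For the backoff part, a minimal sketch could look like the following. `RateLimited` is a hypothetical exception raised by your own fetch function when the API returns HTTP 429, and the delay values are arbitrary starting points rather than recommendations from OpenWeatherMap.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimited(Exception):
    """Raised by the caller's fetch function on an HTTP 429 response."""


def call_with_backoff(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
    jitter: Callable[[], float] = random.random,
) -> T:
    """Retry `fn` with exponential backoff while it raises RateLimited."""
    for attempt in range(max_attempts):
        try:
            return fn()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: let the caller fall back to cache
            # Delay doubles each attempt: 0.5 s, 1 s, 2 s, ... plus jitter
            # so many clients do not retry at the same instant.
            delay = base_delay * (2 ** attempt) + jitter() * base_delay
            sleep(delay)
    raise RuntimeError("max_attempts must be at least 1")


# Example:
#   data = call_with_backoff(lambda: fetch_city("Boston"))
```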

Can LangChain agents handle multi-turn weather conversations?

Yes, but building proper conversation memory and more advanced agent setups beyond ZERO_SHOT_REACT_DESCRIPTION is necessary for smooth multi-turn dialogues.

What does scale cost look like for this architecture?

Ollama inference costs ~$0.01 per 1,000 tokens versus over $0.03 on cloud. Caching drastically reduces external API calls, keeping total costs sustainable.
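To turn those per-token figures into a budget, a back-of-the-envelope calculation helps. The prices below are the ones quoted in this article; the workload of one million queries at 500 tokens each is a hypothetical example, not a measurement.

```python
def inference_cost(total_tokens: int, price_per_1k_tokens: float) -> float:
    """Cost in dollars for a token volume at a per-1,000-token price."""
    return total_tokens / 1000 * price_per_1k_tokens


# Hypothetical workload: 1,000,000 queries at 500 tokens each.
QUERIES = 1_000_000
TOKENS_PER_QUERY = 500
total = QUERIES * TOKENS_PER_QUERY

local_cost = inference_cost(total, 0.01)  # article's local figure
cloud_cost = inference_cost(total, 0.03)  # article's cloud figure
print(f"local: ${local_cost:,.0f}  cloud: ${cloud_cost:,.0f}")
```

At these rates the gap scales linearly with traffic, so the 3x ratio holds at any volume; what changes is whether the absolute difference is large enough to justify running your own hardware.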


Sources:

- OpenWeatherMap API docs (https://openweathermap.org/api)

- AI 4U Labs internal benchmarks (2026 data)

- Ollama official docs (https://ollama.ai/docs)

Full Code Example

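The complete listing is not available here, so the following is a compact sketch that ties the steps together rather than the original code. It assumes a LangChain 0.x release with the legacy `initialize_agent` helper, the `langchain_ollama` and `redis` packages, Redis on `localhost:6379`, and an API key in an environment variable named `OPENWEATHERMAP_API_KEY`. All function names are illustrative.

```python
import json
import os

OWM_URL = "https://api.openweathermap.org/data/2.5/weather"
CACHE_TTL_SECONDS = 300  # 5-minute TTL


def fetch_from_owm(city):
    """Fetch current weather for `city` (metric units) from OpenWeatherMap."""
    import requests  # lazy import

    response = requests.get(
        OWM_URL,
        params={
            "q": city,
            "appid": os.environ["OPENWEATHERMAP_API_KEY"],  # assumed name
            "units": "metric",
        },
        timeout=10,
    )
    response.raise_for_status()
    return response.json()


def get_weather(city, cache, fetch=fetch_from_owm, ttl=CACHE_TTL_SECONDS):
    """Return a one-line weather summary, checking the cache first."""
    key = f"weather:{city.strip().lower()}"
    cached = cache.get(key)
    if cached is not None:
        data = json.loads(cached)
    else:
        data = fetch(city.strip())
        cache.setex(key, ttl, json.dumps(data))
    description = data["weather"][0]["description"]
    return f"{data.get('name', city)}: {description}, {data['main']['temp']} °C"


def build_agent():
    """Wire the local LLM, the Redis cache, and the weather tool together."""
    import redis
    from langchain.agents import AgentType, initialize_agent
    from langchain_core.tools import Tool
    from langchain_ollama import ChatOllama

    cache = redis.Redis(host="localhost", port=6379, decode_responses=True)
    weather_tool = Tool(
        name="get_weather",
        func=lambda city: get_weather(city, cache),
        description="Current weather for a city. Input: a city name, e.g. 'Boston'.",
    )
    llm = ChatOllama(model="llama3", temperature=0)
    return initialize_agent(
        tools=[weather_tool],
        llm=llm,
        agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION,
        handle_parsing_errors=True,
    )


def main():
    agent = build_agent()
    result = agent.invoke({"input": "What's the weather in Boston right now?"})
    print(result["output"])


# Call main() once Ollama, Redis, and the API key are in place.
```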

Get this running locally, then build out better caching strategies, error handling, and front-end integration. You’ve just created a weather search agent ready for production-scale use.

Good luck!