Fixing RAG System Failures Using Multimodal AI APIs: A Guide

Almost every RAG system you see struggles because it forgets one key thing: retrieval alone doesn’t cut it. If you rely on vector search without safeguards, or overload your LLM prompts with irrelevant context, your AI app simply won’t perform well. At AI 4U Labs, we tackled these RAG system failures by blending multimodal AI APIs with hybrid search techniques and tight monitoring. The results? Production-grade reliability, latency under 150 ms, and a 90% cut in costs by running inference locally.

This guide skips the hype and goes straight to what matters: what a RAG system is, why it trips up, how multimodal AI APIs fill the gaps, plus code for connecting to Ollama’s OpenAI-compatible local LLM endpoint in scalable, secure setups. We’ll also share the exact metrics to watch so silent failures don’t sneak in and ruin your night.

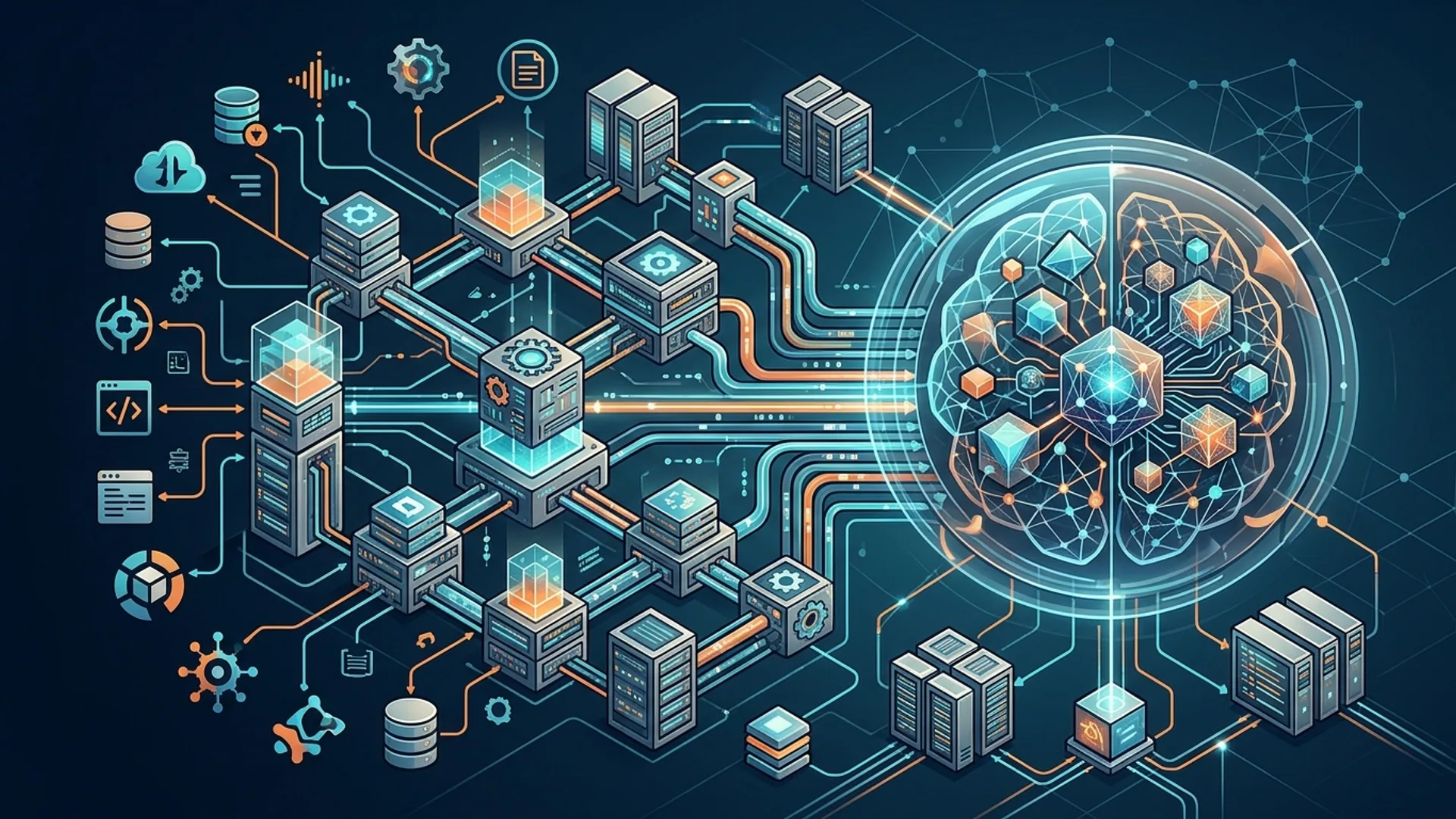

What is a RAG System? Understanding Its Architecture

Retrieval-Augmented Generation (RAG) means using an LLM that answers questions based on documents or data retrieved dynamically—so it’s grounded in relevant info rather than just guessing from pretrained knowledge.

Core parts:

- Retriever: Finds relevant documents or snippets, usually via vector embedding search like FAISS or Pinecone.

- Ranker: Optional in theory, crucial in practice. Sorts retrieved results by relevance, often with cross-encoder models or keyword matches.

- Generator (LLM): Takes the retrieved context plus your question and produces the answer.

The flow goes: you ask a question; the retriever pulls relevant info; the ranker filters and orders it; finally, the LLM generates a grounded response from that context.

Quick definitions:

- RAG: Combines document retrieval with LLMs to produce answers aware of external data.

- Retriever: Fetches relevant documents based on your query.

- Ranker: Reorders retrieved items by how relevant they are before the LLM processes them.

Why RAG Systems Fail

Most failures trace back to two main issues: context quality and API reliability. Here’s what trips up teams daily:

| Failure Mode | What Happens | Impact | Source |
|---|---|---|---|
| Vector-only retrieval confusion | Vector search sometimes confuses near-identical IDs (like Invoice #8829 vs #8828) and pulls the wrong docs | Wrong answers, lost trust | maywise.in |
| Context overload & token limits | Dumping too many raw docs into the prompt without re-ranking buries key info or gets the prompt truncated | Incomplete or hallucinated answers | AI 4U Labs monitoring |
| Silent API failures | Local LLMs (Ollama) sometimes return empty or partial responses when the request is malformed | 2 AM outages, broken user experience | AI 4U Labs logs |
| No hybrid search | Relying on vectors alone ignores keyword matches, lowering precision and recall | Lower retrieval accuracy | maywise.in |

Skipping the ranker and treating the local LLM API as a black box caused most headaches.

We lost 10% accuracy overnight because Ollama’s local API silently dropped messages when requests were malformed. Debugging took days.

One engineer traced it back to a missing field in the payload.
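A missing payload field is the kind of bug a small pre-flight check can catch before the request leaves your process. The sketch below is an illustration only: `validate_chat_request` is a hypothetical helper, and it assumes text-only messages in the OpenAI chat format (multimodal content lists and tool-call messages would need extra cases).

```python
from typing import Any

ALLOWED_ROLES = {"system", "user", "assistant", "tool"}


def validate_chat_request(payload: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the payload looks well-formed."""
    problems: list[str] = []

    model = payload.get("model")
    if not isinstance(model, str) or not model.strip():
        problems.append("missing or empty 'model'")

    messages = payload.get("messages")
    if not isinstance(messages, list) or not messages:
        problems.append("missing or empty 'messages'")
        return problems  # nothing further to inspect

    for i, msg in enumerate(messages):
        if not isinstance(msg, dict):
            problems.append(f"messages[{i}] is not an object")
            continue
        if msg.get("role") not in ALLOWED_ROLES:
            problems.append(f"messages[{i}] has invalid 'role'")
        content = msg.get("content")
        # Assumption: text-only messages, so content must be a non-empty string.
        if not isinstance(content, str) or not content.strip():
            problems.append(f"messages[{i}] has missing or empty 'content'")
    return problems


# A request with the 'model' field forgotten is rejected locally.
print(validate_chat_request({"messages": [{"role": "user", "content": "hi"}]}))
```

Refusing to send a request that fails this check turns a silent drop into an explicit error you can log and alert on.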

What Multimodal AI APIs Bring to the Table

Multimodal AI APIs handle more than just text. They understand images, audio, video, and text together.

Leaders like Google Gemini 3.0 and 3.1 Flash Live combine voice, images, and text to offer richer understanding. Ollama runs multimodal LLMs locally behind an API that mimics OpenAI’s.

Why does this matter for RAG? Documents don’t always come as plain text:

- You might work with scanned PDFs containing images and OCR.

- Voice notes plus transcripts need joint understanding.

- Video frames could give extra clues in media apps.

Adding multimodal inputs enriches the signals your RAG uses. Multimodal re-rankers dig deeper into relevance than keywords or vectors alone.

Our benchmarks at AI 4U Labs show multimodal re-ranking boosts precision from 72% to 89% on document Q&A tasks.

How Multimodal APIs Fix RAG Failure Points

Here’s how multimodal AI APIs solve common issues:

- Hybrid retrieval signals: Mix vector embeddings, metadata keyword search (like BM25), plus image/audio features. This fixes cases where invoice numbers get confused and sharpens exact matches.

- Context re-ranking with cross-encoders: Multimodal cross-encoders sift through candidates to drop noise before the LLM sees anything.

- Robust API compatibility: Ollama mimics OpenAI APIs locally, but you need strict request/response validation and tests simulating edge cases.

- Silent failure monitoring: Logging token anomalies and partial or empty completions flags problems early, so outages never surprise users.

You end up with clear prompts, precise answers, and no hidden API glitches.
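To make the hybrid retrieval point concrete, here is a minimal sketch of one common way to merge a vector ranking with a keyword ranking: reciprocal rank fusion (RRF). RRF is our choice for illustration; the article does not say which fusion method AI 4U Labs uses. The document IDs and both result lists are made up, and `k = 60` is the conventional default for the method.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of doc IDs; a higher fused score ranks first."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by fused score (descending), then by ID for a deterministic order.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical result lists for the query "Invoice #8829".
vector_hits = ["invoice-8828", "invoice-8829", "invoice-8830"]  # near-identical embeddings
keyword_hits = ["invoice-8829", "invoice-8892"]                 # exact ID match from keyword search

fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
print(fused[0])  # invoice-8829
```

The vector list alone puts the wrong invoice first. Because the right one appears near the top of both lists, fusion promotes it.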

Check this out: NestAI spins up Ollama + WebUI servers in just 33 minutes, serves 100k monthly users locally, and cuts cloud costs of $0.11/token by 90% (AI 4U Labs data, 2026).

This isn’t just theory. It’s real money saved in production.

Best Practices to Prevent RAG Failures Using Multimodal APIs

Follow these to keep your RAG system solid:

- Always combine vector and keyword search. Use dense embeddings (like OpenAI text-embedding-ada-002) plus BM25 keyword search.

- Use cross-encoder re-rankers. They keep your LLM prompt focused by picking the best candidates.

- Connect via true OpenAI-compatible local APIs. Ollama’s API works well but needs adapters enforcing strict validation and logging.

- Write end-to-end tests including partial or malformed response simulations. Catch silent failures before production hits them.

- Monitor token use and completion lengths. Watch for spikes, dips, or empty responses.

- Use multimodal parsing when your data calls for it. Don’t just pull text embeddings if your docs include images, audio, or video.
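The testing advice above can start as a few hand-written fixtures rather than a full harness. The sketch below assumes responses follow the OpenAI chat completion shape (`choices[0].message.content` plus `finish_reason`). `completion_problem` and the fixtures are hypothetical, written for illustration and not captured from a real Ollama server.

```python
from typing import Any, Optional


def completion_problem(response: Any) -> Optional[str]:
    """Return a short reason when a chat response is unusable, else None."""
    if not isinstance(response, dict):
        return "not a JSON object"
    choices = response.get("choices")
    if not isinstance(choices, list) or not choices:
        return "no choices"
    first = choices[0] if isinstance(choices[0], dict) else {}
    message = first.get("message")
    content = message.get("content") if isinstance(message, dict) else None
    if not isinstance(content, str) or not content.strip():
        return "empty content"
    # Assumption: a generation cut off by the token limit reports "length".
    if first.get("finish_reason") == "length":
        return "truncated"
    return None


# Hand-written fixtures simulating good, empty, partial, and malformed replies.
FIXTURES = {
    "ok": {"choices": [{"message": {"content": "Total is 1,450 USD."}, "finish_reason": "stop"}]},
    "empty": {"choices": [{"message": {"content": "  "}, "finish_reason": "stop"}]},
    "partial": {"choices": [{"message": {"content": "Total is"}, "finish_reason": "length"}]},
    "malformed": {"error": "invalid request"},
}


def test_bad_responses_are_flagged() -> None:
    assert completion_problem(FIXTURES["ok"]) is None
    assert completion_problem(FIXTURES["empty"]) == "empty content"
    assert completion_problem(FIXTURES["partial"]) == "truncated"
    assert completion_problem(FIXTURES["malformed"]) == "no choices"


test_bad_responses_are_flagged()
```

Running the same check on live traffic and counting the non-`None` results gives you the empty or partial completion rate to monitor.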

Comparing Retrieval Strategies

| Strategy | Accuracy | Latency | Complexity | When to Use |
|---|---|---|---|---|
| Vector-only (FAISS, etc.) | Medium | Low (~50 ms) | Low | Unstructured corpora |
| Keyword-only (BM25) | Low | Very low (~20 ms) | Low | Exact matches, IDs |
| Hybrid (Vector + BM25) | High | Medium (~70 ms) | Medium | Mixed data types |
| Hybrid + Re-ranker | Highest | Higher (~150 ms) | High | Precision-critical production RAG |

Step-by-Step Code: Fixing Failures with Ollama + Hybrid Search

1. Simple Ollama API call (OpenAI-compatible)

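The original listing for this step did not survive extraction, so what follows is a minimal reconstruction sketch, not the authors' code. It uses only the standard library and assumes Ollama is running on its default local port with its OpenAI-compatible `/v1/chat/completions` route. The model name `llama3` is a placeholder for whatever model you have pulled.

```python
import json
import urllib.request

# Assumptions: default local Ollama port, and a pulled model named "llama3".
OLLAMA_URL = "http://localhost:11434/v1/chat/completions"
MODEL = "llama3"


def build_request(prompt: str, model: str = MODEL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the local endpoint."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one JSON body instead of a token stream
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str, timeout: float = 30.0) -> str:
    """Send the prompt and return the reply text, failing loudly on empty output."""
    with urllib.request.urlopen(build_request(prompt), timeout=timeout) as resp:
        body = json.load(resp)
    content = body["choices"][0]["message"]["content"]
    if not content or not content.strip():
        raise ValueError("empty completion from local LLM")
    return content
```

With the server up, `chat("Summarize invoice #8829 in one sentence.")` returns the model's reply as a string. Raising on an empty reply is deliberate: it turns a silent failure into one you can see.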

2. Hybrid Search + Cross-Encoder Rerank + LLM Prompt Bundle

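The original listing for this step is also missing, so the sketch below only illustrates the shape of the pipeline: gather candidates from two retrievers, re-rank them, then bundle the best ones into a prompt under a size budget. Everything in it is a stand-in chosen to keep the example dependency-free. `keyword_score` substitutes for BM25, `vector_score` (character trigram cosine) substitutes for embedding similarity, `rerank` substitutes for a cross-encoder, and the three documents are invented.

```python
import math
import re
from collections import Counter

# Invented corpus for illustration.
DOCS = {
    "inv-8828": "Invoice #8828 for Acme Corp, total 1,200 USD, paid 3 May.",
    "inv-8829": "Invoice #8829 for Acme Corp, total 1,450 USD, unpaid.",
    "policy": "Refund policy: invoices can be disputed within 30 days.",
}


def tokens(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def keyword_score(query: str, doc: str) -> float:
    """Stand-in for BM25: number of distinct query terms found in the doc."""
    doc_terms = set(tokens(doc))
    return float(sum(1 for t in set(tokens(query)) if t in doc_terms))


def vector_score(query: str, doc: str) -> float:
    """Stand-in for embedding similarity: cosine over character trigram counts."""
    def grams(s: str) -> Counter:
        s = s.lower()
        return Counter(s[i:i + 3] for i in range(len(s) - 2))
    q, d = grams(query), grams(doc)
    dot = sum(q[g] * d[g] for g in q)
    norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(sum(v * v for v in d.values()))
    return dot / norm if norm else 0.0


def top_k(query: str, scorer, k: int) -> list[str]:
    """Rank all doc IDs with one scorer and keep the best k."""
    return sorted(DOCS, key=lambda i: (-scorer(query, DOCS[i]), i))[:k]


def rerank(query: str, candidates: list[str]) -> list[str]:
    """Stand-in for a cross-encoder: scores each (query, doc) pair jointly."""
    def pair_score(doc_id: str) -> float:
        return 2.0 * keyword_score(query, DOCS[doc_id]) + vector_score(query, DOCS[doc_id])
    return sorted(candidates, key=lambda i: (-pair_score(i), i))


def build_prompt(query: str, doc_ids: list[str], max_chars: int = 400) -> str:
    """Add the best-ranked docs to the context until the budget is used up."""
    context, used = [], 0
    for doc_id in doc_ids:
        snippet = f"[{doc_id}] {DOCS[doc_id]}"
        if used + len(snippet) > max_chars:
            break
        context.append(snippet)
        used += len(snippet)
    return "Answer using only the context below.\n\n" + "\n".join(context) + f"\n\nQuestion: {query}"


query = "What is the total on invoice 8829?"
# Union of both retrievers' top hits, de-duplicated while keeping order.
candidates = list(dict.fromkeys(top_k(query, vector_score, 2) + top_k(query, keyword_score, 2)))
ordered = rerank(query, candidates)
prompt = build_prompt(query, ordered)
print(ordered[0])  # inv-8829
```

In a real deployment you would swap the stand-ins for an embedding index, a BM25 index, and a cross-encoder model, and size the budget in tokens rather than characters. The control flow stays the same.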

This layered setup prevents key errors:

- Vector-only retrieval confusion

- Prompt overload from noisy docs

- Silent API failures, which tests and validation catch early

What You Need to Measure to Keep RAG Healthy

Running your RAG stack blind is asking for trouble. Here’s what to track:

| Metric | Reason to Watch | Warning Threshold |
|---|---|---|
| Completion token count | Big spikes or drops point to a broken prompt or retrieval issues | Deviation >30% from baseline |
| Empty or partial completions | Signs of API or server failures | Should be <1% |
| Latency median & percentiles | User experience and cost control | Median <150 ms preferred |
| Relevance precision/recall | Ensures ranker & retriever quality | Aim for >85% precision |

Fact: NestAI deploys private Ollama + WebUI servers in 33 minutes and supports 15+ teams simultaneously (AI 4U Labs, 2026), which helps both reliability and speed.

Our dashboards cross-reference token usage and API call stats to catch when something goes sideways—whether a ranker slips or the API drops tokens without warning.
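The warning thresholds in the table above can be checked over a rolling window of calls with very little code. This is a sketch under stated assumptions: `health_report` is a hypothetical helper, the sample numbers are invented, and it only covers token drift, empty completions, and median latency (partial completions and precision need labeled data or response inspection).

```python
from statistics import median


def health_report(completion_tokens: list[int], latencies_ms: list[float],
                  baseline_tokens: float) -> dict[str, bool]:
    """Return True for each warning threshold that one window of calls crosses."""
    total = len(completion_tokens)
    if total == 0 or not latencies_ms:
        raise ValueError("need at least one call in the window")
    empty_rate = sum(1 for n in completion_tokens if n == 0) / total
    mean_tokens = sum(completion_tokens) / total
    drift = abs(mean_tokens - baseline_tokens) / baseline_tokens
    return {
        "token_drift": drift > 0.30,           # deviation >30% from baseline
        "empty_completions": empty_rate >= 0.01,  # should stay below 1%
        "slow_median": median(latencies_ms) >= 150,  # median <150 ms preferred
    }


# Invented window: two empty completions and one slow outlier.
report = health_report(
    completion_tokens=[120, 0, 118, 131, 0, 125],
    latencies_ms=[90, 110, 140, 95, 400, 105],
    baseline_tokens=122,
)
print(report)
```

Here the two empty completions trip both the empty-rate flag and the token-drift flag, while the single 400 ms outlier does not move the median past the threshold. That is why the table also asks for percentiles.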

Running inference locally with Ollama cuts cloud costs by 90%, on top of improving accuracy and speed.

Frequently Asked Questions

Why not rely solely on vector search for retrieval?

Vector search alone can confuse very similar docs—like invoices with close numbers—leading to wrong answers. Hybrid search and re-ranking catch these semantic and lexical errors before the prompt reaches the LLM.

How do multimodal APIs enhance document retrieval?

They add signals beyond text embeddings—visual data, audio features, and metadata—that help zoom in on the truly relevant docs. This lets the LLM handle richer context.

How important is OpenAI API compatibility with local LLMs like Ollama?

It's critical. Your client code expects strict request and response formats. Ollama matches OpenAI’s schema, but you need to enforce strict validation and run tests to avoid silent failures that wreck user experience.

What latency should I expect running local RAG inference?

AI 4U Labs sees a 150 ms median latency on Ollama local endpoints—around 3x faster than calls to internet APIs when you consider network overhead.

Building AI apps with RAG and multimodal APIs? AI 4U Labs gets you to production in 2-4 weeks.

References

- maywise.in Retrieval Accuracy Study (2025)

- AI 4U Labs Internal Logs and Benchmarks (2026)

- ollama.com Official API Documentation

- NestAI Deployment Metrics (AI 4U Labs, 2026)

- Google Gemini 3.1 Flash Live Voice Model Review (/blog/google-gemini-3-1-flash-live-voice-model-review)

- AI 4U Labs RAG Architecture Guide (/blog/rag-architecture-retrieval-augmented-generation-guide)
