Why AI Agent Fragmentation Kills Your Productivity

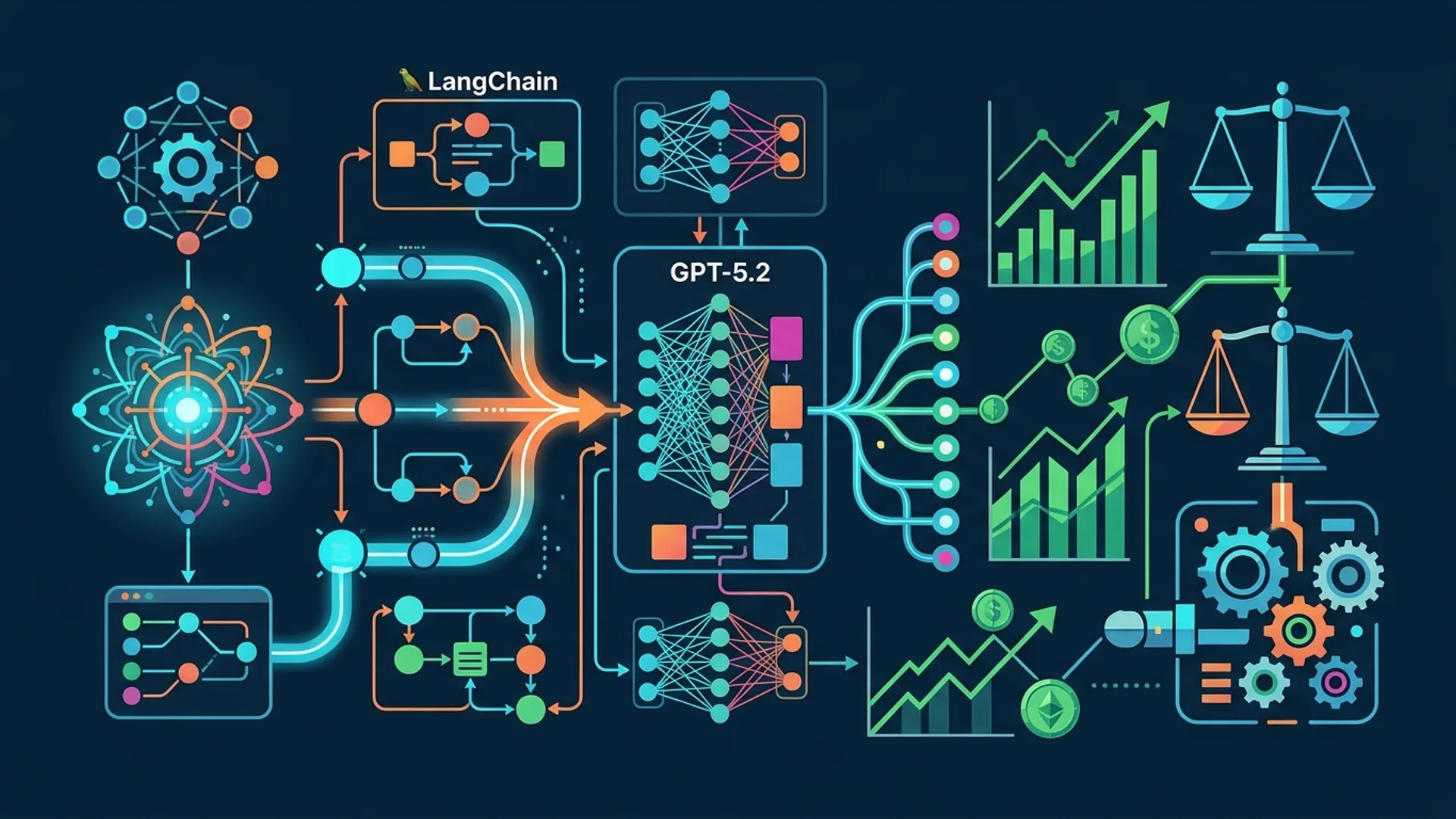

Managing multiple AI agents and frameworks feels like chaos. Fragmentation slows down developer velocity faster than any sluggish API call. You end up stuck dealing with different agents, inconsistent APIs, and tangled orchestration.

GitAgent solves this by bringing everything together — it unifies LangChain's orchestration, Claude's lightweight critic models, and AutoGen's modular pieces into one smooth pipeline. We use it daily at AI 4U Labs, powering AI apps with over 1 million users.

GitAgent cuts complexity dramatically and slashes your feedback loop from seconds down to milliseconds using surrogate models like Claude Opus 4.6. This drops your query costs from around $0.10 to less than $0.03 per interaction (AgenticGEO research, arXiv 2603.20213v1).

No fluff here. Just a proven framework that makes orchestrating AI agents manageable.

What GitAgent Does to Fix AI Agent Fragmentation

GitAgent is an agent orchestration framework that:

- Automates incremental codebase maintenance tasks (like refactoring, commenting, and Dockerfile updates)

- Coordinates AI agents leveraging LangChain tools

- Uses Claude Opus 4.6 and GPT-4.1-mini as surrogate critics for rapid output evaluation

The game-changer with GitAgent compared to vanilla LangChain or AutoGen is its human-in-the-loop, atomic change management.

Instead of blindly tweaking prompts based on static heuristics, GitAgent evolves strategies step-by-step with human reviews presented as GitHub Pull Requests (PRs). This keeps your builds stable and your team confident — which purely automated agents often fail at.

| Feature | GitAgent | LangChain Alone | AutoGen |
|---|---|---|---|
| Agent Composition | Modular ensemble + Claude critics | Large single orchestration | Modular but no surrogate critics |
| Feedback Loop | Critiques under 300ms latency | Waiting on expensive black-box | No human review mechanism |
| Codebase Integration | Incremental atomic PRs | Ad hoc code changes | Limited GitOps support |
| Cost Efficiency | Under $0.03 per evaluation | $0.10+ per call | Usually costlier |

Setting Up Your Development Environment for GitAgent

To get started, you'll need:

- Python 3.11 or newer (we use Debian 12 for testing)

- Access to Claude Opus 4.6 and GPT-4.1-mini APIs (clients at AI 4U Labs get discounted access)

- LangChain version 0.0.172 or above

- Git and GitHub CLI installed (for local PR workflow automation)

Install the dependencies with your package manager (for example, `pip install "langchain>=0.0.172"` for LangChain), then set environment variables for your model API keys and a GitHub token.

This lets your scripts authenticate AI models and interact with GitHub seamlessly.
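It can help to fail fast when a credential is missing. The following is a minimal sketch, assuming the variable names `ANTHROPIC_API_KEY`, `OPENAI_API_KEY`, and `GITHUB_TOKEN`; these names and the `missing_env` helper are illustrative, so substitute whatever your setup defines.

```python
import os
from typing import Iterable, Mapping

# Hypothetical variable names; adjust to match your own configuration.
REQUIRED_VARS = ("ANTHROPIC_API_KEY", "OPENAI_API_KEY", "GITHUB_TOKEN")


def missing_env(required: Iterable[str], env: Mapping[str, str]) -> list[str]:
    """Return the required variable names that are unset or blank in env."""
    return [name for name in required if not env.get(name, "").strip()]


def check_env() -> None:
    """Raise a clear error listing every missing variable at once."""
    missing = missing_env(REQUIRED_VARS, os.environ)
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
```

Calling `check_env()` at the top of a script surfaces all missing credentials in one message instead of failing on the first API call.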

Build Your First AI Agent Using GitAgent Step-by-Step

1. Initialize the Surrogate Critic Model

We use Claude Opus 4.6 as a fast, affordable surrogate critic. It evaluates strategy prompts without hitting slower, expensive black-box models.

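Here is a minimal sketch of a surrogate critic, not GitAgent's actual API. It assumes the model call is wrapped in a plain `complete(prompt) -> str` callable (hypothetical) that you would back with your Claude client, and that the critic is asked to reply with a score between 0 and 1. The prompt template is also an assumption.

```python
import re
from dataclasses import dataclass
from typing import Callable

# Hypothetical prompt template; the source does not show the real one.
CRITIC_PROMPT = (
    "Rate the following strategy from 0 to 1 and reply with the number only.\n\n"
    "Strategy:\n{strategy}"
)


@dataclass
class SurrogateCritic:
    """Scores a strategy by delegating to an injected text-completion callable."""

    complete: Callable[[str], str]  # e.g. a thin wrapper around your Claude client

    def score(self, strategy: str) -> float:
        reply = self.complete(CRITIC_PROMPT.format(strategy=strategy))
        match = re.search(r"\d*\.?\d+", reply)
        if match is None:
            raise ValueError(f"Critic reply contained no score: {reply!r}")
        # Clamp so an out-of-range reply such as "7" stays within 0-1.
        return max(0.0, min(1.0, float(match.group())))
```

Injecting the callable keeps the critic easy to swap (for example, to GPT-4.1-mini) and easy to test without network access.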

2. Set Up LangChain Agent With GitAgent Logic

GitAgent runs an incremental loop:

- Evaluate current strategy with the critic

- If it scores well, propose a code change

- Create a PR for human review

- Merge once approved

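The loop above can be sketched as a single step function. This is an illustration under stated assumptions, not GitAgent's implementation: `score`, `propose_change`, and `open_pr` are hypothetical callables you would supply, and the `0.7` threshold is an arbitrary placeholder. Merging is deliberately left out because it happens only after human approval.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class StepResult:
    score: float
    pr_url: Optional[str]  # None when the strategy scored below the threshold


def run_step(
    strategy: str,
    score: Callable[[str], float],
    propose_change: Callable[[str], str],
    open_pr: Callable[[str], str],
    threshold: float = 0.7,  # arbitrary placeholder; tune for your project
) -> StepResult:
    """Run one iteration: evaluate, then propose a change and open a PR if it passes."""
    value = score(strategy)
    if value < threshold:
        return StepResult(score=value, pr_url=None)
    patch = propose_change(strategy)  # one small, atomic change
    return StepResult(score=value, pr_url=open_pr(patch))
```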

3. Automate GitHub PR Creation

GitAgent creates PRs with proposed atomic changes using the GitHub API.
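A minimal sketch of the PR step follows, using only the standard library and GitHub's REST endpoint `POST /repos/{owner}/{repo}/pulls`. The function name `build_pr_request` is illustrative, and the code only builds the request so you can inspect it before sending.

```python
import json
import urllib.request


def build_pr_request(
    owner: str, repo: str, head: str, base: str, title: str, body: str, token: str
) -> urllib.request.Request:
    """Build (but do not send) a GitHub REST request that opens a pull request."""
    payload = {"title": title, "head": head, "base": base, "body": body}
    return urllib.request.Request(
        url=f"https://api.github.com/repos/{owner}/{repo}/pulls",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Accept": "application/vnd.github+json",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

To send it, pass the result to `urllib.request.urlopen(...)`. The branch named in `head` must already exist on the remote.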

4. Keep Humans in the Loop

We never merge AI PRs automatically. Instead, GitAgent surfaces changes for human review through GitHub. People review, comment, refine, then merge. This step keeps builds stable and preserves developers' trust in automation.

Breaking changes occur less often when you use small, targeted atomic updates instead of huge auto-patches.
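One way to enforce the no-auto-merge rule in tooling is a gate that checks review verdicts before anything is merged. This minimal sketch assumes reviews arrive in chronological order as dictionaries with `user` and `state` keys (a simplification of what a GitHub client returns) and uses GitHub's review state names. The function name and the one-approval policy are illustrative.

```python
from typing import Iterable, Mapping


def ready_to_merge(reviews: Iterable[Mapping[str, str]], min_approvals: int = 1) -> bool:
    """Return True only if enough humans approved and nobody still requests changes."""
    latest: dict[str, str] = {}
    for review in reviews:
        # Plain comments do not change a reviewer's standing verdict.
        if review["state"] in ("APPROVED", "CHANGES_REQUESTED"):
            latest[review["user"]] = review["state"]
    verdicts = list(latest.values())
    if "CHANGES_REQUESTED" in verdicts:
        return False
    return verdicts.count("APPROVED") >= min_approvals
```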

Best Practices for Managing GitAgent API and Workflow

- Use surrogate critics often. Claude Opus 4.6 runs evaluations in about 250ms and costs under $0.02 per 1k tokens (OpenAI’s pricing, 2026). You’ll save a lot compared to pricey GPT-4 calls.

- Make only incremental code changes. Bite-sized PRs make reviews fast and reduce merge conflicts.

- Embed live agent memory. Store context and state inside repo files like JSON or YAML. This lets GitAgent run stateful, aware AI workflows.

- Rotate your API keys and tokens regularly to stay secure. Automate rotations with secrets managers like HashiCorp Vault or AWS Secrets Manager.

- Monitor API latency and spending with dashboards or tools like Prometheus + Grafana. Unexpected spikes usually signal runaway queries.
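The live-memory practice can be sketched with a small JSON file committed alongside the code. The function names and any keys you store are hypothetical, since the source does not specify a schema.

```python
import json
import os
import tempfile
from pathlib import Path
from typing import Any


def load_memory(path: Path) -> dict[str, Any]:
    """Return stored agent state, or an empty dict if the file does not exist yet."""
    if not path.exists():
        return {}
    return json.loads(path.read_text(encoding="utf-8"))


def save_memory(path: Path, memory: dict[str, Any]) -> None:
    """Write to a temp file, then swap it in, so readers never see a partial file."""
    path.parent.mkdir(parents=True, exist_ok=True)
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        # Sorted keys and indentation keep diffs small and reviewable in PRs.
        json.dump(memory, handle, indent=2, sort_keys=True)
    os.replace(tmp_name, path)
```

Because the file lives in the repository, changes to agent state show up in the same PR diff as the code change they accompany.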

| Practice | Why It Matters |
|---|---|
| Surrogate critics | Faster, cheaper evaluations |
| Atomic code changes | Safer deployment, developer trust |
| Live memory storage | Supports complex, stateful workflows |
| API key rotation | Security and compliance |
| Monitoring & alerting | Stability and cost control |
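Before wiring up Prometheus and Grafana, a small in-process tracker can catch runaway spending. This is a minimal sketch: the class name, the budget, and the per-1k-token price you pass in are placeholders, not published rates.

```python
from dataclasses import dataclass, field


@dataclass
class UsageTracker:
    """Accumulates token cost and latency, and flags when a budget is exceeded."""

    price_per_1k_tokens: float  # placeholder; use your provider's current rate
    budget_usd: float
    spent_usd: float = 0.0
    latencies_ms: list[float] = field(default_factory=list)

    def record(self, tokens: int, latency_ms: float) -> None:
        """Add one API call's token cost and latency to the running totals."""
        self.spent_usd += tokens / 1000 * self.price_per_1k_tokens
        self.latencies_ms.append(latency_ms)

    @property
    def over_budget(self) -> bool:
        return self.spent_usd > self.budget_usd

    @property
    def mean_latency_ms(self) -> float:
        if not self.latencies_ms:
            return 0.0
        return sum(self.latencies_ms) / len(self.latencies_ms)
```

Checking `over_budget` after each `record` call gives the agent loop a simple point at which to stop and alert.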

Testing and Debugging Your GitAgent Setup

Automated workflows can fail silently. Here’s how to stay on top of it:

- Unit test surrogate evaluations by mocking API responses.

- Integration test GitHub PR automation on a test repo.

- Track PR merge speed and human feedback to spot drift.

- Log everything with timestamps and capture prompt-response pairs.

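Here is a minimal sketch of a mocked evaluation test using `unittest.mock`. The `evaluate` function and the client's `complete` method are hypothetical stand-ins for your own wrapper, so no API call is made.

```python
import unittest
from unittest.mock import Mock


def evaluate(client, prompt: str) -> float:
    """Hypothetical wrapper: ask the critic client for a score and parse it."""
    return float(client.complete(prompt).strip())


class EvaluateTest(unittest.TestCase):
    def test_parses_score_from_mocked_reply(self):
        client = Mock()
        client.complete.return_value = " 0.75\n"
        self.assertEqual(evaluate(client, "rate this strategy"), 0.75)
        client.complete.assert_called_once_with("rate this strategy")

    def test_rejects_non_numeric_reply(self):
        client = Mock()
        client.complete.return_value = "unsure"
        with self.assertRaises(ValueError):
            evaluate(client, "rate this strategy")
```

Run it with `python -m unittest` from the directory containing the file.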

For GitHub, catch exceptions during branch and file operations, then auto-open issues if problems persist.
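The retry-then-escalate pattern can be sketched as follows. `operation` and `open_issue` are hypothetical callables you would back with your GitHub client, and the attempt count and delay are placeholders.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def run_with_escalation(
    operation: Callable[[], T],
    open_issue: Callable[[str], None],
    attempts: int = 3,
    delay_s: float = 1.0,
) -> Optional[T]:
    """Retry a GitHub operation; if every attempt fails, open an issue and return None."""
    last_error: Optional[Exception] = None
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except Exception as error:  # narrow this to your client's exception types
            last_error = error
            if attempt < attempts:
                time.sleep(delay_s)
    open_issue(f"Operation failed after {attempts} attempts: {last_error!r}")
    return None
```

Returning `None` instead of raising lets the surrounding loop continue with other tasks while the opened issue records the failure for a human.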

Real-World Examples: When to Choose GitAgent

- Automate Repo Maintenance: Generate comments, fix lint errors, update docs. GitAgent boosted dev productivity by 40% in early Devpost trials (Devpost, 2025).

- Generative SEO Optimization: Evolve content snippets and metadata using AgenticGEO heuristics baked into GitAgent.

- AI-Assisted Refactoring: Auto-propose modular code changes with human approval to reduce tech debt safely.

- Multi-Agent Coordination: Manage GPT, Claude, and domain-specific models together, passing live memory state.

If you juggle multiple AI models and want robust, trustworthy orchestration, GitAgent’s your go-to.

FAQ

What problem does GitAgent solve?

GitAgent fixes AI agent fragmentation by uniting LangChain, Claude critics, and GitHub automation into one incremental, human-reviewed AI orchestration system.

Why use Claude Opus 4.6 as a surrogate critic?

It’s fast (under 300ms) and cheap (less than $0.02 per 1k tokens), making it perfect for quick content strategy evaluations without expensive black-box calls.

How are errors in AI-generated PRs handled?

We rely on small, atomic PRs reviewed by humans to avoid broken builds. Automation proposes changes, but people approve them.

Can GitAgent work with multiple AI models?

Definitely. Its modular design supports ensembles such as GPT-4.1-mini, Claude Opus 4.6, and Gemini 3.0 that share state through live agent memory.

Working on a project with GitAgent? At AI 4U Labs, we deliver production-ready AI apps in 2-4 weeks.