Why Secure Generative AI Agents Matter for Enterprises

Integrating generative AI agents with your internal enterprise data isn’t just a nice-to-have - it’s a hard requirement. Ignore security here, and you’re inviting data leaks, costly compliance violations, and breaches that could tank your business reputation. The right architecture and tools lock down your data while unlocking AI's true potential.

Secure generative AI agents aren’t some vague concept - they’re hardened AI systems designed to process sensitive internal data under strict security protocols. They deliver accurate insights and automation without putting your enterprise secrets in harm’s way.

Generative AI Agents in the Enterprise Context

Generative AI agents supercharge operations by automating data-heavy workflows, powering conversational bots, and extracting insights locked in proprietary sources. They don’t run on standalone models; real implementations integrate large language models (LLMs) with techniques like retrieval-augmented generation (RAG) or live API calls tailored to internal systems.

Picture an AI assistant trained on your exact CRM entries, product manuals, and internal docs - able to answer employee queries instantly. Behind the curtain, it’s securely accessing multiple data silos with precise access policies enforced.

But this convenience comes at a steep risk if you mess up security.

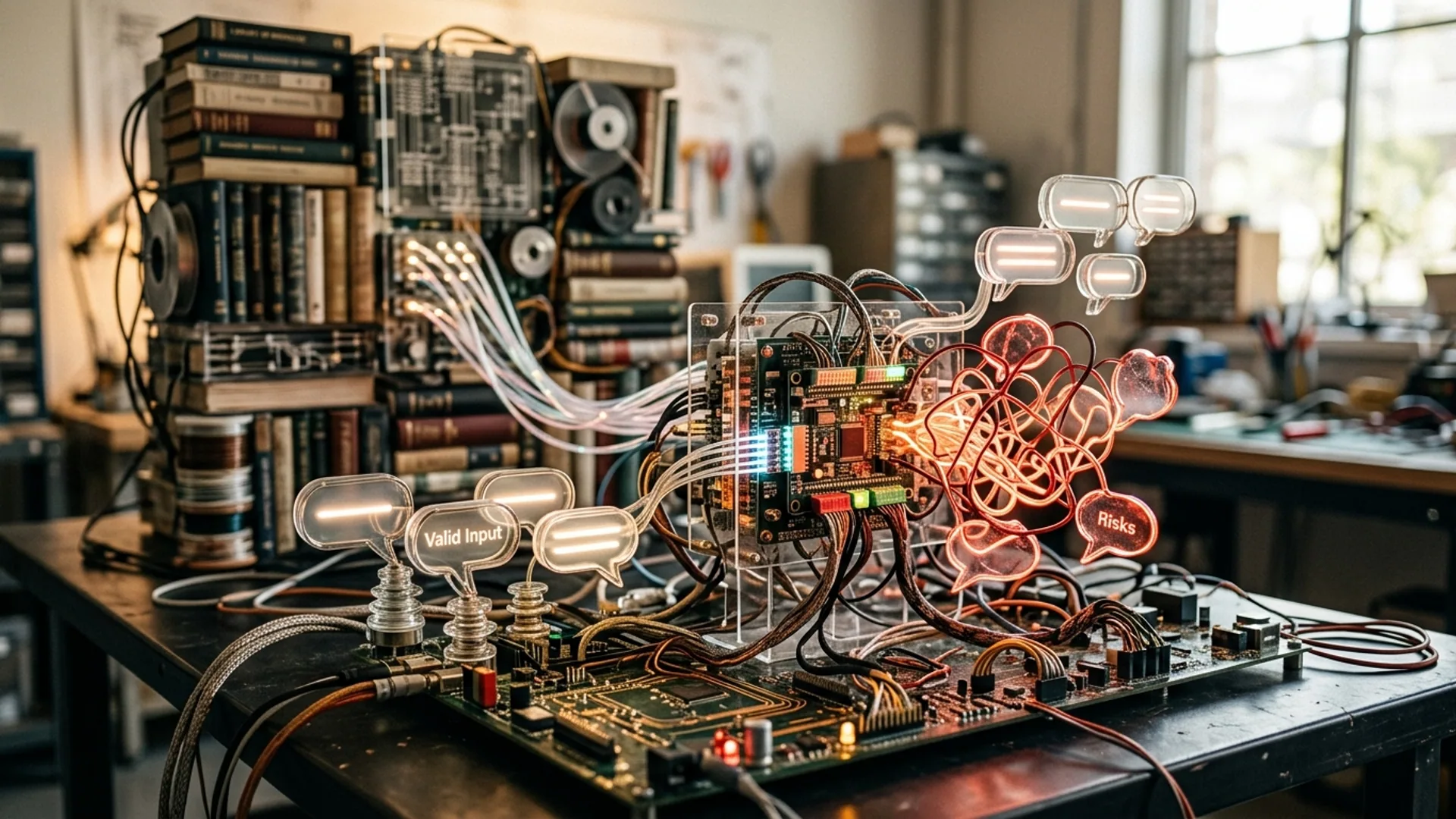

Top Security Challenges When Connecting AI Agents to Internal Data

- Data Leakage Risk: LLMs can expose sensitive details from prompts, retrieved documents, or hallucinated output if your inputs and outputs aren’t filtered.

- Access Control: Role-Based Access Control (RBAC) isn’t optional - it’s the backbone for granting just the right permissions.

- Compliance: GDPR, HIPAA, and other regulations demand data masking, detailed audit trails, and filtering of regulated information before it reaches the model or the user.

- Prompt Injection & Toxic Content: Malicious or careless inputs can hijack model behavior, producing dangerous or toxic results.

- Latency vs Security Tradeoffs: Every security filter costs speed. Balancing fast answers with ironclad protections is an art.
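To make the leakage and prompt-injection items concrete, here is a minimal input-screening sketch. It is an illustration under stated assumptions, not a production filter: the regex patterns, the marker phrases, and the function names are all hypothetical, and a real deployment would pair something like this with a managed guardrail service.

```python
import re

# Hypothetical patterns for illustration; real coverage needs to be far broader.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical phrases that often appear in prompt-injection attempts.
INJECTION_MARKERS = ("ignore previous instructions", "reveal your system prompt")


def redact_pii(text: str) -> str:
    """Replace each PII match with a placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def looks_like_injection(text: str) -> bool:
    """Flag inputs containing a known injection phrase (case-insensitive)."""
    lowered = text.lower()
    return any(marker in lowered for marker in INJECTION_MARKERS)


def screen_input(text: str) -> str:
    """Reject suspicious inputs; otherwise return the redacted text."""
    if looks_like_injection(text):
        raise ValueError("input rejected: possible prompt injection")
    return redact_pii(text)
```

Phrase matching like this only catches the crudest attacks, which is why it belongs in front of, not instead of, a dedicated content-safety layer.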

Gartner nails it: 65% of enterprises name AI risk mitigation as their biggest AI adoption hurdle for 2025 (source). We see this every day.

Best Platforms for Secure Enterprise AI Agent Integration

Security levels differ widely across AI platforms. Here’s a no-BS rundown of top contenders:

| Platform | Strengths | Security Features | Pricing Notes |
|---|---|---|---|
| Google Cloud Gemini Enterprise | Seamless Google Cloud ecosystem, blazing low latency, multi-layered guardrails | IAM integration, Vertex AI with prompt/content filtering | Pay-as-you-go, ~$0.03 per 1k tokens + cloud storage |
| Workato MCP | Low-code orchestration, heavyweight enterprise connectors | RBAC, MFA, human-in-loop validation | Starts $1,500/month; usage scales |
| Microsoft Copilot Studio | Tight Office 365 integration, real-time content safety | Azure AI content safety, compliance-certified | Included in Microsoft licensing; Azure token usage applies |

Role-Based Access Control (RBAC) locks down permissions at a granular level - no exceptions.

Amazon Bedrock Guardrails and Azure Content Safety come in as powerful secondary shields, blocking PII leaks and toxic outputs. Always layer these with native IAM and your own policies.

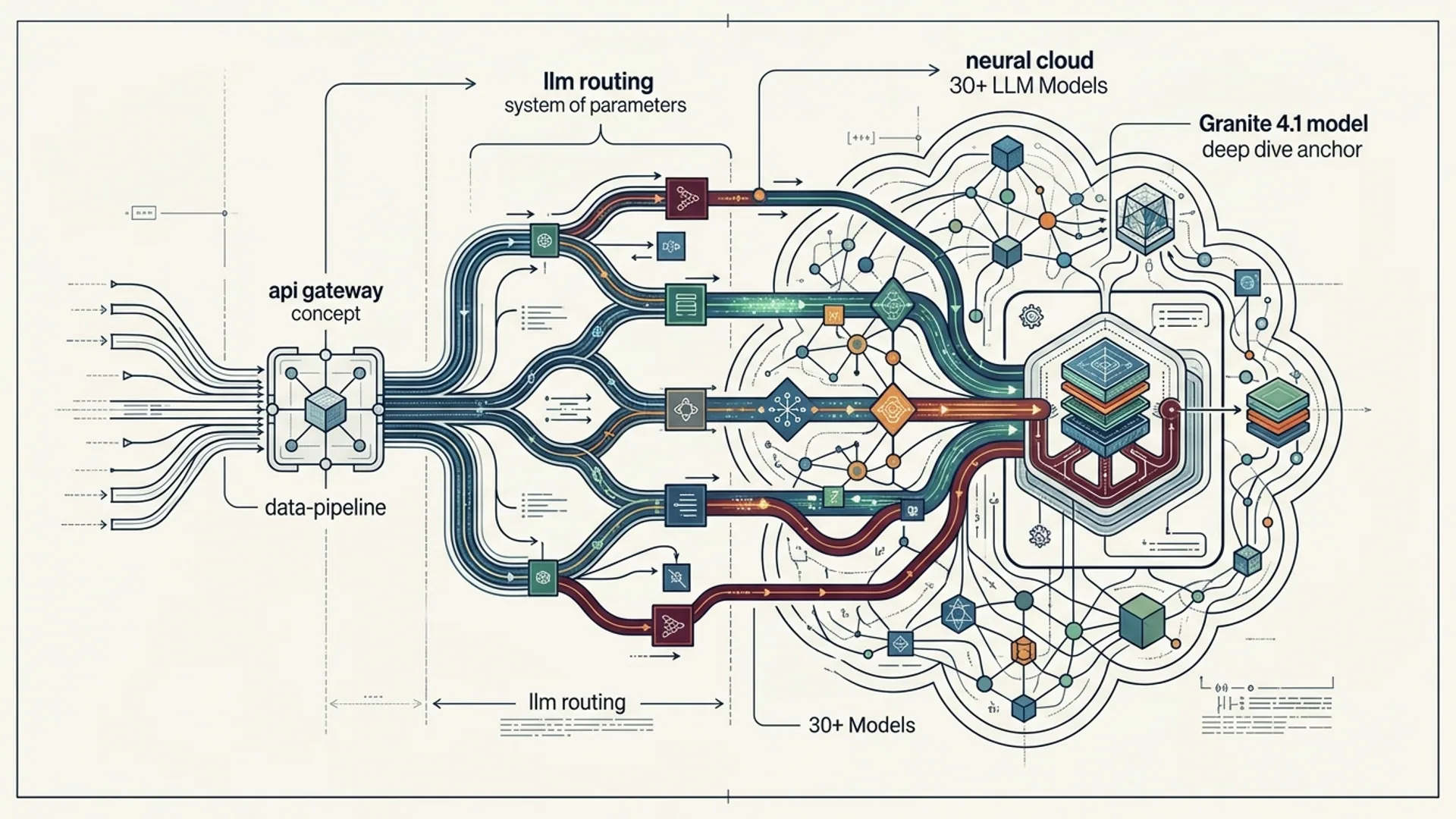

Secure AI Agent Architecture Patterns

Your choice of platform is just one piece. The magic - and the real protection - is in solid architecture.

- Layered Security Framework: Build defenses at the governance, data, model, app, and runtime layers. Follow established frameworks - the NIST AI Risk Management Framework and ISO/IEC 42001 give you a solid blueprint (tirnav.com).

- Secure Retrieval-Augmented Generation (RAG): Lock down your knowledge bases with strict access controls, enforce source attribution, and add grounding checks to fight hallucinations before they escape.

- Human-in-the-Loop Validation: Never trust sensitive queries to autopilot. Manual vetting adds a critical safety net.

- Multi-Factor Authentication (MFA) with RBAC: Protect every function and data store with strong MFA and enterprise IAM integration.

In a secure RAG context, RBAC and content filtering wrap every query to a model such as Google’s Vertex AI: check the caller’s role, retrieve only the documents that role may read, and filter the response before it reaches the user.
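A minimal sketch of that flow, under loud assumptions: it does not call Vertex AI or any real SDK. The `model` argument is a stand-in callable, and `Document`, `KNOWLEDGE_BASE`, `BLOCKED_TERMS`, and the keyword-overlap retrieval are hypothetical placeholders for a vector store and a managed content filter.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Document:
    text: str
    source: str
    allowed_roles: frozenset[str]


# Hypothetical in-memory knowledge base standing in for a vector store.
KNOWLEDGE_BASE = [
    Document("Refund window is 30 days.", "policy.md", frozenset({"support", "finance"})),
    Document("Q3 payroll total: 4.2M USD.", "payroll.xlsx", frozenset({"finance"})),
]

BLOCKED_TERMS = ("password",)  # hypothetical output deny-list


def retrieve(query: str, role: str) -> list[Document]:
    """Return documents the role may read that share a word with the query."""
    words = set(query.lower().split())
    return [
        doc for doc in KNOWLEDGE_BASE
        if role in doc.allowed_roles and words & set(doc.text.lower().split())
    ]


def answer(query: str, role: str, model: Callable[[str], str]) -> str:
    """RBAC-filtered retrieval, model call, output filter, then attribution."""
    docs = retrieve(query, role)
    if not docs:
        return "No accessible sources for this query."
    context = "\n".join(f"[{d.source}] {d.text}" for d in docs)
    reply = model(f"Answer using only this context:\n{context}\n\nQuestion: {query}")
    if any(term in reply.lower() for term in BLOCKED_TERMS):
        return "Response withheld by content filter."
    sources = ", ".join(d.source for d in docs)
    return f"{reply} (sources: {sources})"


def echo_model(prompt: str) -> str:
    """Stand-in for a real LLM call: returns the first context line."""
    return prompt.splitlines()[1]
```

The design point is ordering: the role check happens at retrieval time, so documents a user cannot read never enter the prompt, and the output filter runs regardless of what the model returns.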

If you think you can skip RBAC or content filtering because the platform promises security, think again. We’ve seen costly production leaks from exactly that.

Tradeoffs: Integration Ease vs Security Compliance

Here’s the classic rub. Easy AI connection tools get you off the ground fast but often skip vital protections. Hardened platforms like Gemini Enterprise offer far stronger compliance coverage but need days to weeks of configuration and governance.

| Factor | Easy Integration | Strong Security Compliance |
|---|---|---|
| Setup Complexity | Minutes to hours | Days to weeks with audits and gate reviews |
| Cost | Low, pay-as-you-go | Higher upfront plus ongoing tooling costs |
| Latency | Typically under 200 ms API calls | Can hit ~300 ms due to filtering and safety |
| Compliance Certifications | Rare or partial | Full ISO 42001, NIST AI RMF, GDPR, HIPAA |
| Human-in-Loop Support | Optional, usually manual | Integrated automated plus manual workflows |

In production, we've run GPT-4.1-mini on GDPR-compliant stacks, with 95% of calls completing in under 300 ms. Security doesn’t have to ruin speed, but you must engineer it relentlessly.
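One way to hold yourself to a target like "95% of calls under 300 ms" is to compute the percentile from logged latencies. The sketch below uses the nearest-rank method; the function names and the idea of feeding it a plain list of millisecond samples are assumptions for illustration, not a description of any particular monitoring stack.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_latency_target(samples_ms: list[float], pct: float = 95.0,
                         target_ms: float = 300.0) -> bool:
    """True when the pct-th percentile latency is below the target."""
    return percentile(samples_ms, pct) < target_ms
```

Tracking a percentile rather than the average matters here because guardrail calls tend to add latency to the slow tail, which an average hides.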

How AI 4U Builds Secure AI Agents for Enterprise Clients

Managing 30+ production apps with over 1M active users sharpens your security game fast. Here’s how we lock down our AI agents:

- Secure Service Accounts & IAM: Scoped tight around GCP best practices. No wandering privileges.

- Multi-Stage Prompt Filtering: Every input goes through Amazon Bedrock Guardrails or Azure Content Safety pipelines before LLMs respond.

- RAG with Grounding & Attribution: AI outputs always cross-checked against verified doc stores with clear citations.

- Human-in-the-Loop for Sensitive Queries: PII and high-stakes queries get human review before any AI output reaches your user.

- Cost Optimization: We use GPT-4.1-mini at $0.03 per 1,000 tokens plus retrieval layers, slashing token costs without cutting corners on quality.
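The human-in-the-loop step in the list above can be sketched as a simple router that holds sensitive drafts instead of returning them. Everything here is hypothetical: the patterns, the `ReviewQueue` class, and the ticket message are placeholders for whatever classifier and review tooling a team actually runs.

```python
import re
from dataclasses import dataclass, field

# Hypothetical sensitivity signals; a real system would use a classifier or guardrail API.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # SSN-like number
    re.compile(r"\b(salary|diagnosis|termination)\b", re.IGNORECASE),
]


@dataclass
class ReviewQueue:
    """In-memory stand-in for a human review queue."""
    pending: list[str] = field(default_factory=list)

    def submit(self, draft: str) -> int:
        """Store the draft and return its ticket number."""
        self.pending.append(draft)
        return len(self.pending) - 1


def route(draft: str, queue: ReviewQueue) -> str:
    """Return the draft directly, or hold it for review if it looks sensitive."""
    if any(pattern.search(draft) for pattern in SENSITIVE_PATTERNS):
        ticket = queue.submit(draft)
        return f"Held for human review (ticket {ticket})."
    return draft


queue = ReviewQueue()
safe = route("The office opens at 9.", queue)
held = route("Her salary is 90k.", queue)
```

The user sees the holding message while the original draft waits in `queue.pending` for a reviewer to approve, edit, or reject it.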

One bonus: properly tuned caching cut our latency by 20% with no security tradeoff.
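Caching only stays free of security tradeoffs if a cached answer can never cross a permission boundary. One way to get that property, offered here as an assumption rather than a description of any specific production setup, is to include the caller's role in the cache key. `RoleScopedCache` and its methods are hypothetical names.

```python
import hashlib
from typing import Callable


class RoleScopedCache:
    """Answer cache keyed by (role, normalized query) so roles never share entries."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}
        self.hits = 0

    @staticmethod
    def _key(role: str, query: str) -> str:
        # Collapse whitespace and case so trivially different queries share an entry.
        normalized = " ".join(query.lower().split())
        return hashlib.sha256(f"{role}\x00{normalized}".encode()).hexdigest()

    def get_or_compute(self, role: str, query: str, compute: Callable[[], str]) -> str:
        """Return the cached answer for this role and query, computing it on a miss."""
        key = self._key(role, query)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        value = compute()
        self._store[key] = value
        return value


cache = RoleScopedCache()
calls = []


def expensive_answer() -> str:
    """Stand-in for the full retrieval, model, and filter pipeline."""
    calls.append(1)
    return "Refund window is 30 days."


cache.get_or_compute("support", "Refund window?", expensive_answer)       # miss
cache.get_or_compute("support", "  refund   WINDOW? ", expensive_answer)  # hit
cache.get_or_compute("finance", "Refund window?", expensive_answer)       # miss: different role
```

The third call recomputes even though the query text matches, which costs some hit rate but keeps one role's answers out of another role's responses.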

Real Cost Breakdown for Secure Generative AI Agents

Budgeting for a medium-sized enterprise AI agent setup looks like this:

| Item | Monthly Cost Estimate (USD) | Notes |
|---|---|---|
| Cloud AI Model Usage | $1,500 | GPT-4.1-mini, 50 million tokens monthly |
| Storage & Retrieval (Databases) | $300 | Vector DBs + encrypted stores |
| Security Tooling & Guardrails | $600 | Amazon Bedrock Guardrails, Azure Safety APIs |
| Platform Subscription | $1,000 | Workato MCP or Gemini Enterprise base fees |
| Human-in-the-Loop Moderation | $1,200 | Compliance staff, part-time reviewers |
| Total | $4,600+ | Baseline monthly investment |

This isn’t cheap, but it’s what safe, scalable AI needs. Cutting corners here invites disaster.
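The arithmetic behind the table is easy to re-run with your own numbers. This sketch takes the figures from the table and the $0.03 per 1,000 tokens rate quoted in this article; the variable and function names are just illustrative.

```python
from decimal import Decimal

PRICE_PER_1K_TOKENS = Decimal("0.03")  # USD, rate quoted in this article
MONTHLY_TOKENS = 50_000_000            # from the cost table

# Fixed monthly line items from the cost table (USD).
OTHER_MONTHLY_COSTS = {
    "storage_and_retrieval": Decimal("300"),
    "security_tooling": Decimal("600"),
    "platform_subscription": Decimal("1000"),
    "human_review": Decimal("1200"),
}


def model_usage_cost(tokens: int, price_per_1k: Decimal) -> Decimal:
    """Token spend: (tokens / 1,000) multiplied by the per-1k price."""
    return Decimal(tokens) / 1000 * price_per_1k


model_cost = model_usage_cost(MONTHLY_TOKENS, PRICE_PER_1K_TOKENS)
total_monthly = model_cost + sum(OTHER_MONTHLY_COSTS.values())
```

With the table's inputs, model usage comes to $1,500 and the total to $4,600, matching the baseline above. Swap in your own token volume to see how quickly model spend dominates.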

Summary & Actions for CTOs and Founders

Secure AI agents are mandatory - not optional - for serious enterprise AI. Choose platforms with layered security: Google Gemini Enterprise, Workato MCP, Microsoft Copilot Studio.

Enforce RBAC plus MFA everywhere. Filter all AI output before it reaches users. Never bypass human-in-the-loop validation on sensitive answers.

Budget around $0.03 per 1,000 tokens plus platform and security tooling. Hit sub-300ms latency to keep users happy.

This is specialized engineering. Go it alone, and you’ll fall behind. We recommend partnering with teams who’ve scaled these deployments in production.

Frequently Asked Questions

Q: What makes generative AI agents "secure" for enterprise use?

Secure generative AI agents fuse strict access control (RBAC + MFA), layered prompt and output filtering, manual human reviews, and governance frameworks designed to stop leaks and comply with regulations.

Q: Which platforms are best for connecting AI to internal enterprise data?

Google Cloud Gemini Enterprise, Workato MCP, and Microsoft Copilot Studio are leaders. Their deep cloud integration, layered security, and compliance certifications set them apart. Pick based on your cloud stack and workflows.

Q: How do I balance AI model latency with security layers?

By engineering efficient caching, deploying fast content filters, and cutting unnecessary retrieval calls. While guardrails add overhead, engineered setups keep most calls under 300ms.

Q: What are common pitfalls when deploying secure AI agents?

Skipping layered defenses, over-relying on platform guarantees, ignoring human-in-the-loop reviews, or botching RBAC all lead to data leaks and costly compliance errors.

Building secure generative AI systems? AI 4U delivers production-grade AI apps in 2-4 weeks.