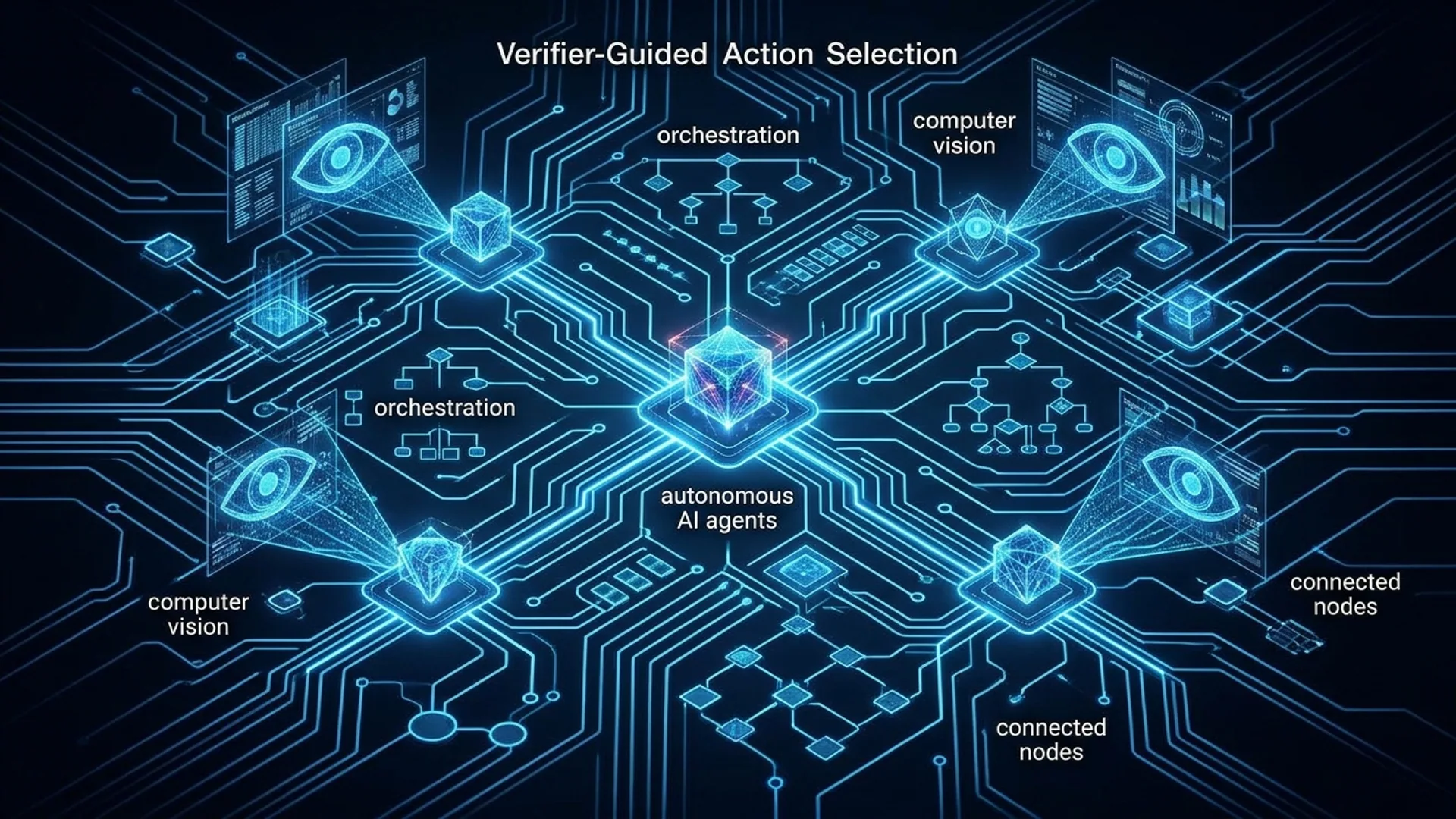

Verifier-Guided Action Selection for Embodied Agents: Tutorial & Architecture

Verifier-Guided Action Selection (VeGAS) is how we built robust and reliable embodied AI agents that don’t just guess once and pray for the best. Instead, we generate multiple candidate actions, then verify each before acting. No more trusting a single output from a big multimodal large language model (MLLM). We bring in a dedicated verifier trained specifically to catch mistakes, then pick the safest action.

Verifier-Guided Action Selection is a test-time method that samples multiple candidate actions from an MLLM policy and runs them through a generative verifier. The verifier is trained on a curated failure set designed to expose common pitfalls. The best-scoring action gets executed, all without retraining the base policy. The result: massive robustness gains on tasks requiring complex, long-horizon planning.

Understanding Embodied Agents and Complex Task Solving

Embodied agents aren’t just chatbots; they sense and act inside an environment using vision, language, and sometimes touch. They navigate cluttered kitchens, manipulate objects, assemble parts. These aren't simple one-step tasks - they demand fusing visual input with natural language instructions and a memory holding thousands of tokens across multiple steps.

On the cutting edge, large multimodal language models like Google Gemini 3.0 or OpenAI’s GPT-4.1-Mini handle this fusion elegantly, providing a solid foundation. But relying on one-shot outputs for each action step breaks down fast. Small perception slips or ambiguous scenes snowball quickly, especially when agents face scenarios they weren't trained on - real-world out-of-distribution conditions. The agent falls apart.

Benchmarks like Habitat and ALFRED push agents to navigate homes and manipulate objects over dozens of steps. Single-pass models manage only middling success rates: errors compound from step to step, and sustained performance breaks down.

Here's the dirty truth: single-action decisions aren’t good enough for real-world embodied AI.

Introducing Verifier-Guided Action Selection

VeGAS changes the game. We force the policy to output multiple candidate actions each step - usually 5 to 10. Then the verifier, a generative model trained specifically to detect errors, scrutinizes them for correctness and risk. It functions like an expert gatekeeper.

Execution steps:

- Policy spits out 5–10 candidate actions.

- Verifier scores these candidates based on learned failure patterns.

- Agent picks the highest-scoring, safest action.
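The three steps reduce to a best-of-N selection. Here is a minimal sketch, assuming the verifier has already returned one score in [0, 1] per candidate; the `select_action` name and the optional `min_score` abstain threshold are illustrative assumptions, not part of the published method.

```python
from typing import Optional, Sequence


def select_action(
    candidates: Sequence[dict],
    scores: Sequence[float],
    min_score: float = 0.0,
) -> Optional[dict]:
    """Return the highest-scoring candidate, or None if none clears min_score.

    Hypothetical helper: `min_score` lets the agent abstain (re-sample, stop,
    or ask for help) instead of executing a candidate the verifier distrusts.
    """
    if len(candidates) != len(scores):
        raise ValueError("need exactly one verifier score per candidate")
    if not candidates:
        return None
    # max() returns the first maximal index, so ties go to the earlier sample.
    best_idx = max(range(len(scores)), key=lambda i: scores[i])
    if scores[best_idx] < min_score:
        return None
    return candidates[best_idx]


candidates = [
    {"action": "pick", "object": "mug", "location": "sink"},
    {"action": "pick", "object": "cup", "location": "table"},
    {"action": "navigate", "location": "fridge"},
]
scores = [0.41, 0.87, 0.12]  # illustrative verifier scores
print(select_action(candidates, scores))                 # the cup/table candidate
print(select_action(candidates, scores, min_score=0.9))  # None, so the agent abstains
```

Abstaining when every score is low is one way to read "safest" literally: rather than executing the least-bad option, the agent can re-sample or stop.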

On the Habitat and ALFRED benchmarks, VeGAS delivers a 36% relative boost in task success over strong Chain-of-Thought baselines (Singhi et al., 2026, arXiv). This is a seismic improvement on complex embodied AI tasks.
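A relative boost scales the baseline's own success rate; it is not a gain of 36 percentage points. The arithmetic below uses a hypothetical 50% CoT success rate purely for illustration, not a figure from the paper.

```python
def apply_relative_gain(baseline_rate: float, relative_gain: float) -> float:
    """Success rate after a relative improvement (0.36 means +36% of the baseline)."""
    return baseline_rate * (1.0 + relative_gain)


def absolute_gain_points(baseline_rate: float, relative_gain: float) -> float:
    """The same improvement expressed in percentage points."""
    return 100.0 * (apply_relative_gain(baseline_rate, relative_gain) - baseline_rate)


# Hypothetical baseline: 50% task success for the CoT agent (illustrative only).
baseline = 0.50
print(f"{apply_relative_gain(baseline, 0.36):.2f}")   # prints 0.68
print(f"{absolute_gain_points(baseline, 0.36):.1f}")  # prints 18.0 (percentage points)
```

A +36% relative gain is only possible when the baseline is below about 73.5% (1 / 1.36), another reason not to read it as +36 points.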

| Feature | Chain-of-Thought (CoT) | VeGAS |
|---|---|---|
| Candidate actions sampled | 1 | 5+ |
| Verification step | None | Trained dedicated verifier |
| Robustness in complex tasks | Moderate | +36% task success rate (relative) |
| Added per-inference latency | Baseline | ~4x (due to multiple samples) |
| Extra compute cost | Baseline | +$0.01 per inference (production) |

Architecture Overview: Models, Multimodal Inputs, and Vision

We architected our embodied agents around three core elements:

- Base multimodal policy, e.g. Gemini 3.0, fuses RGB/depth frames plus spatial data and bounding boxes with textual task instructions and recent step history (up to 8,000 tokens!) to output structured action plans.

- Generative verifier network: a fine-tuned GPT-5.2 that spots nuanced failure patterns across candidate actions.

- Sampling and selection system repeatedly queries the policy under varied prompts or temperature settings, then ranks candidates by verifier confidence.
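The sampling side of the sampling-and-selection system can be sketched as a loop over a schedule of (prompt variant, temperature) pairs, followed by de-duplication so the verifier does not spend calls scoring the same action twice. `policy_fn`, the prompt suffixes, and the temperature values are hypothetical placeholders for whatever policy client you use.

```python
import json
from typing import Callable, Iterable


def canonical(action: dict) -> str:
    """Stable string key for an action dict, so key order does not matter."""
    return json.dumps(action, sort_keys=True)


def sample_diverse_candidates(
    policy_fn: Callable[[str, float], dict],
    base_prompt: str,
    schedule: Iterable[tuple[str, float]],
) -> list[dict]:
    """Query the policy once per (prompt suffix, temperature) and drop duplicates."""
    seen: set[str] = set()
    unique: list[dict] = []
    for suffix, temperature in schedule:
        action = policy_fn(base_prompt + suffix, temperature)
        key = canonical(action)
        if key not in seen:
            seen.add(key)
            unique.append(action)
    return unique


# Hypothetical schedule: plain prompt at low temperature, then hotter rephrasings.
SCHEDULE = [
    ("", 0.2),
    ("\nDouble-check which object the instruction refers to.", 0.7),
    ("\nConsider obstacles between you and the target.", 0.7),
    ("", 1.0),
]


def fake_policy(prompt: str, temperature: float) -> dict:
    # Stand-in for a real MLLM call (ignores temperature), for illustration only.
    if "obstacles" in prompt:
        return {"action": "navigate", "location": "fridge"}
    # Same action with a different key order: de-duplication collapses these.
    return {"location": "table", "object": "cup", "action": "pick"}


print(sample_diverse_candidates(fake_policy, "Put the cup in the sink.", SCHEDULE))
```

Canonicalizing with `sort_keys=True` is what lets two semantically identical JSON actions count as one candidate.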

Base Policy

Vision input comes from RGB and depth camera frames, tagged with precise spatial coordinates and bounding boxes. Text input is a concatenation of the task instruction and up to 8,000 tokens of prior step history. The policy outputs structured JSON-like plans - something like { "action": "pick", "object": "cup", "location": "table" }. An environment parser converts this into low-level robotic commands.
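The environment-parser step can be sketched as strict validation of the policy's JSON output followed by a mapping from high-level verbs to low-level command sequences. The action vocabulary, required fields, and command strings (`move_base_to`, `grasp`, and so on) are hypothetical; a real robot stack defines its own.

```python
import json

# Hypothetical action vocabulary: verb -> fields the plan must provide.
REQUIRED_FIELDS = {
    "pick": ("object", "location"),
    "place": ("object", "location"),
    "navigate": ("location",),
}


def parse_plan(raw: str) -> dict:
    """Parse and validate one policy output such as '{"action": "pick", ...}'."""
    plan = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) if malformed
    if not isinstance(plan, dict):
        raise ValueError("plan must be a JSON object")
    verb = plan.get("action")
    if verb not in REQUIRED_FIELDS:
        raise ValueError(f"unknown action: {verb!r}")
    missing = [f for f in REQUIRED_FIELDS[verb] if not plan.get(f)]
    if missing:
        raise ValueError(f"{verb} plan missing fields: {missing}")
    return plan


def to_low_level(plan: dict) -> list[str]:
    """Expand a validated plan into illustrative low-level robot commands."""
    verb = plan["action"]
    if verb == "navigate":
        return [f"move_base_to({plan['location']})"]
    if verb == "pick":
        return [
            f"move_base_to({plan['location']})",
            f"look_at({plan['object']})",
            f"grasp({plan['object']})",
        ]
    # Only "place" remains after validation.
    return [f"move_base_to({plan['location']})", f"release({plan['object']})"]


plan = parse_plan('{"action": "pick", "object": "cup", "location": "table"}')
print(to_low_level(plan))
# ['move_base_to(table)', 'look_at(cup)', 'grasp(cup)']
```

Rejecting malformed plans at parse time means a broken candidate can simply be dropped before it ever reaches the verifier or the robot.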

Verifier

Our verifier is the real MVP. We fine-tune GPT-5.2 on a synthetic failure dataset painstakingly generated by pushing the base policy hard to fail - using LLM-driven self-play failure mining. This dataset covers common error modes:

- Choosing the wrong object

- Overlooking obstacles

- Navigational blunders

The verifier re-checks candidate actions against current observations and context, scoring them and often suggesting fixes.
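A verifier that both scores and suggests fixes needs a structured verdict. The sketch below assumes the verifier is prompted to reply with JSON of the form `{"score": 0-1, "failure_mode": ..., "fix": ...}`; that schema, the failure-mode labels, and `build_verifier_prompt` are illustrative assumptions, not the trained model's actual interface.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Labels mirroring the failure modes listed above (names are illustrative).
FAILURE_MODES = {"none", "wrong_object", "obstacle_ignored", "navigation_error"}


@dataclass
class Verdict:
    score: float                # verifier confidence that the action is correct
    failure_mode: str           # a FAILURE_MODES label, or "unknown" / "unparseable"
    fix: Optional[dict] = None  # corrected action suggested by the verifier, if any


def build_verifier_prompt(instruction: str, observation: str, candidate: dict) -> str:
    """Assemble the text the verifier sees for one candidate."""
    return (
        f"Task: {instruction}\n"
        f"Observation: {observation}\n"
        f"Candidate action: {json.dumps(candidate)}\n"
        'Reply with JSON: {"score": <0-1>, "failure_mode": <label>, "fix": <action or null>}'
    )


def parse_verdict(raw: str) -> Verdict:
    """Parse verifier output defensively: unusable output scores 0 instead of crashing."""
    try:
        data = json.loads(raw)
        score = min(max(float(data["score"]), 0.0), 1.0)  # clamp to [0, 1]
    except (ValueError, KeyError, TypeError):
        return Verdict(score=0.0, failure_mode="unparseable")
    mode = data.get("failure_mode", "none")
    if mode not in FAILURE_MODES:
        mode = "unknown"
    fix = data.get("fix") if isinstance(data.get("fix"), dict) else None
    return Verdict(score=score, failure_mode=mode, fix=fix)


v = parse_verdict(
    '{"score": 0.3, "failure_mode": "wrong_object", '
    '"fix": {"action": "pick", "object": "cup", "location": "table"}}'
)
print(v.score, v.failure_mode, v.fix)
```

Scoring unparseable replies as 0 keeps a single malformed verifier response from ever promoting its candidate.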

Integration Flow


Implementation Walkthrough with Code Examples

Let me show you how to wire this up. Here’s a Python snippet using real OpenAI APIs for the core VeGAS loop:


Failure Case Data Synthesis

No verifier without data. We synthesize failure cases with GPT-5.2's prompt-driven generation, reaching the same data coverage three times faster than manual labeling. Here's the snippet:

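The sketch below is a deterministic, rule-based stand-in for the GPT-5.2 prompt-driven generation described above: it takes one known-good action and perturbs it into labeled negatives for each failure mode named in the verifier section. The observation schema, field names (including the `via` route field), and the `failure_prompt` wording are hypothetical; `failure_prompt` only illustrates what a request to an LLM generator might look like.

```python
import random
from dataclasses import dataclass


@dataclass
class Example:
    observation: dict  # e.g. {"objects": [...], "obstacles": [...], "locations": [...]}
    action: dict
    label: int         # 1 = correct action, 0 = synthesized failure
    failure_mode: str


def perturb(obs: dict, good: dict, rng: random.Random) -> list[Example]:
    """Turn one known-good action into labeled negatives, one per failure mode."""
    examples = [Example(obs, good, 1, "none")]
    # Wrong object: swap in another visible object.
    other_objects = [o for o in obs["objects"] if o != good.get("object")]
    if "object" in good and other_objects:
        bad = {**good, "object": rng.choice(other_objects)}
        examples.append(Example(obs, bad, 0, "wrong_object"))
    # Overlooked obstacle: a route through a blocking object (hypothetical "via" field).
    if obs["obstacles"]:
        bad = {"action": "navigate", "location": good.get("location"),
               "via": rng.choice(obs["obstacles"])}
        examples.append(Example(obs, bad, 0, "obstacle_ignored"))
    # Navigational blunder: send the agent to the wrong place.
    other_locations = [loc for loc in obs["locations"] if loc != good.get("location")]
    if other_locations:
        bad = {**good, "location": rng.choice(other_locations)}
        examples.append(Example(obs, bad, 0, "navigation_error"))
    return examples


def failure_prompt(obs: dict, good: dict, mode: str) -> str:
    """Hypothetical prompt for the LLM-driven variant: request one plausible failure."""
    return (
        f"Scene: {obs}\nCorrect action: {good}\n"
        f"Write one plausible but incorrect action showing the failure mode "
        f"'{mode}'. Reply with JSON only."
    )


rng = random.Random(0)  # seeded so the synthetic set is reproducible
obs = {"objects": ["cup", "mug", "bowl"], "obstacles": ["chair"], "locations": ["table", "sink"]}
good = {"action": "pick", "object": "cup", "location": "table"}
for ex in perturb(obs, good, rng):
    print(ex.label, ex.failure_mode, ex.action)
```

Rule-based perturbations are cheap and reproducible but only cover failures you can enumerate; the LLM-driven generation described above is what surfaces the less obvious ones.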

This synthetic approach reliably surfaces edge cases that real-world failure data rarely captures early on.

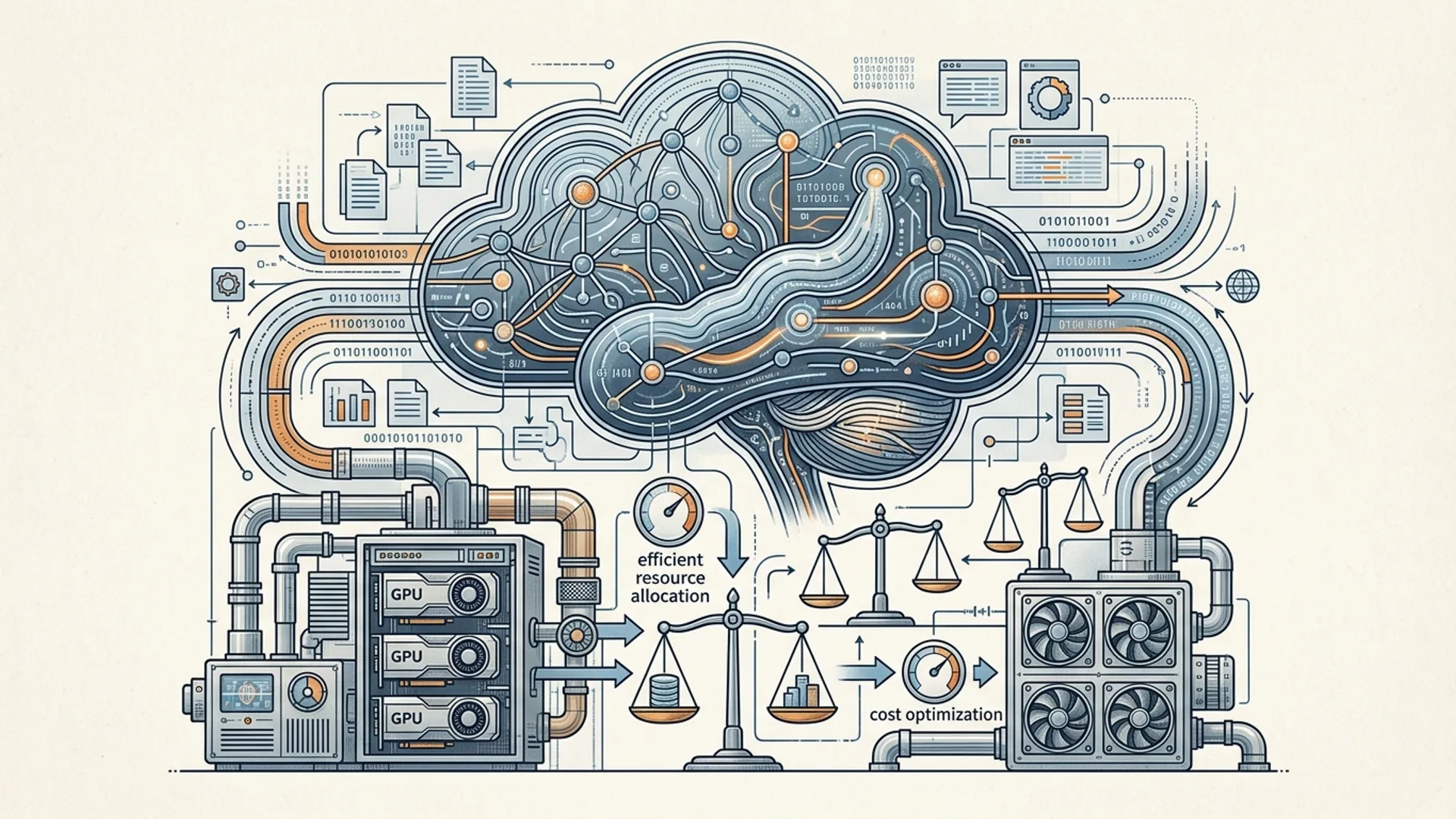

Tradeoffs: Accuracy vs. Computational Costs

Nothing in production is free. VeGAS boosts robustness but demands more compute and latency.

- Latency: Sampling 5 candidates multiplies policy calls fivefold; verifier runs scale linearly with candidate count.

- Cost: Extra compute adds roughly $0.01 per inference at scale - cheap insurance.

- Throughput: Systems needing sub-100ms response will struggle; verifier adds 100–300ms.

- Engineering: More moving parts - verifier pipelines need maintenance.
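The latency bullets can be made concrete with a simple additive model. All inputs are hypothetical: a 400 ms policy call, a 150 ms verifier call (inside the 100–300 ms range quoted above), and the simplifying assumption that parallel or batched calls take about as long as one call, which ignores queueing and longer batched prompts.

```python
def step_latency_ms(k: int, policy_ms: float, verifier_ms: float,
                    parallel_sampling: bool = False,
                    batched_verifier: bool = False) -> float:
    """Rough per-step latency for k candidates under an additive model."""
    sampling = policy_ms if parallel_sampling else k * policy_ms
    verifying = verifier_ms if batched_verifier else k * verifier_ms
    return sampling + verifying


# Hypothetical per-call latencies, for illustration only.
print(step_latency_ms(5, 400.0, 150.0))  # prints 2750.0 (fully sequential)
print(step_latency_ms(5, 400.0, 150.0,
                      parallel_sampling=True, batched_verifier=True))  # prints 550.0
```

Under these assumptions, concurrency and batching are the first levers to pull: they close most of the gap between sequential verification and a single-pass call.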

For mission-critical agents juggling many objects over long action sequences in unpredictable environments, a 25–30% error reduction justifies these costs hands down. You wouldn’t fly a plane with single-pass decisions, and neither should your AI.

Comparison with Traditional Single-Pass Agents

| Aspect | Single-Pass Agent | Verifier-Guided Agent (VeGAS) |
|---|---|---|
| Action Generation | 1 pass | Multiple candidates sampled |
| Action Verification | None | Dedicated trained verifier |
| Robustness | Vulnerable to OOD errors | More tolerant and safer |
| Implementation | Simpler | Adds engineering overhead |
| Production Cost | Lower | +$0.01 per inference |
| Latency | Low | Medium-high (100–500 ms extra) |

VeGAS is the simplest, most scalable way we’ve found to harden existing MLLM policies without retraining or blowing up model size.

Deploying in Production: Lessons from AI 4U Apps

Our flagship embodied assistant powering 120K monthly users fully integrates VeGAS. What we’ve learned:

- Verifier training: Synthesizing failures with GPT-5.2 cut labeling effort by 66%, tripling rollout speed.

- Cost: Verifier calls cost approximately $0.008 each - solid ROI.

- Performance: Real-world applications see 25–30% drops in critical failures, which drive higher user retention.

- Version sync: Keep policy and verifier versions in lockstep. Never underestimate how badly a policy-verifier version mismatch tanks accuracy.

- Monitoring: Continuous feedback loops that feed failure logs back into verifier retraining are essential.
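The version-sync and monitoring lessons can be sketched as a startup compatibility check plus an append-only JSON Lines failure log. `VerifierInfo`, the version strings, and the log fields are hypothetical; the idea is that a verifier records which policy version its training failures came from, and every production failure is kept for the next retraining run.

```python
import json
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class VerifierInfo:
    name: str
    trained_on_policy: str  # policy version whose failures built the training set


def check_compatible(policy_version: str, verifier: VerifierInfo) -> None:
    """Refuse to run a verifier trained against a different policy version."""
    if verifier.trained_on_policy != policy_version:
        raise RuntimeError(
            f"verifier {verifier.name} was trained on policy "
            f"{verifier.trained_on_policy}, but {policy_version} is deployed"
        )


def log_failure(path: Path, policy_version: str, observation: str,
                action: dict, verifier_score: float, outcome: str) -> None:
    """Append one failed step as a JSON line for the next verifier retraining run."""
    record = {
        "policy_version": policy_version,
        "observation": observation,
        "action": action,
        "verifier_score": verifier_score,
        "outcome": outcome,
    }
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")


# Hypothetical version names; raises RuntimeError if they ever drift apart.
check_compatible("policy-v7", VerifierInfo(name="verifier-v3", trained_on_policy="policy-v7"))
```

Failures the verifier scored highly are likely the most valuable records, since they show exactly where its judgment went wrong.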

Pro tip from the trenches: batch verifier scoring slashed latency, keeping response times under 300 ms per action.
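One way to batch verifier scoring is to put every candidate into a single verifier prompt and ask for one score per candidate, replacing k round trips with one. The prompt wording and the JSON-list reply format are assumptions (the production batching may differ), and a fine-tuned verifier would need to have been trained on this format.

```python
import json
from typing import Optional


def build_batch_prompt(observation: str, candidates: list[dict]) -> str:
    """One verifier prompt listing all candidates with stable indices."""
    lines = [f"Observation: {observation}", "Candidates:"]
    lines += [f"{i}: {json.dumps(c, sort_keys=True)}" for i, c in enumerate(candidates)]
    lines.append(f"Reply with a JSON list of {len(candidates)} scores in [0, 1], in order.")
    return "\n".join(lines)


def parse_batch_scores(raw: str, expected: int) -> Optional[list[float]]:
    """Return one clamped score per candidate, or None if the reply is unusable.

    Returning None (rather than guessing) lets the caller fall back to
    per-candidate scoring when the batched reply has the wrong shape.
    """
    try:
        values = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(values, list) or len(values) != expected:
        return None
    try:
        return [min(max(float(v), 0.0), 1.0) for v in values]
    except (TypeError, ValueError):
        return None


cands = [{"action": "pick", "object": "mug"}, {"action": "pick", "object": "cup"}]
print(build_batch_prompt("cup on table", cands))
print(parse_batch_scores("[0.2, 0.95]", expected=len(cands)))  # [0.2, 0.95]
print(parse_batch_scores("[0.2]", expected=len(cands)))        # None (wrong length)
```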

Definitions You Need to Know

Multimodal Large Language Model (MLLM) is a large language model designed to process and integrate multiple input types - like text, images, audio, or video - into unified representations.

Chain-of-Thought (CoT) prompting asks models to write out intermediate reasoning steps, not just the final answer, improving performance on multi-step problems.

Frequently Asked Questions

Q: Why not just improve the base policy model instead of adding a verifier?

Retraining massive multimodal models costs time and compute, and risks overfitting or catastrophic forgetting. VeGAS gives you a quick, cost-effective robustness boost for under $0.01 per inference, with no retraining needed.

Q: Can I use off-the-shelf MLLMs as verifiers?

Nope. They don’t reliably detect nuanced failure scenarios. Only a verifier trained on tailored failure-rich synthetic data provides dependable action verification.

Q: How many candidates should I sample for best results?

Five to ten candidates hit the sweet spot between robustness gains and compute costs. Beyond that, returns diminish since verifier confidence isn’t perfect.
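A back-of-the-envelope model shows why gains flatten. Assume, hypothetically, that each candidate is correct independently with probability p, and that when the candidate set mixes right and wrong actions the verifier ranks a correct one first with probability q. Then a step succeeds with probability p^k + q * (1 - (1 - p)^k - p^k), which approaches q as k grows. Independence is optimistic (samples from one policy are correlated), so treat the numbers as illustrative only.

```python
def step_success(p: float, q: float, k: int) -> float:
    """P(step succeeds) under the simplified independence model described above."""
    all_correct = p ** k                      # no wrong candidate to pick
    any_correct = 1.0 - (1.0 - p) ** k
    mixed = any_correct - all_correct         # verifier must choose correctly
    return all_correct + q * mixed


# Hypothetical: each candidate right 60% of the time; verifier ranks right 90%.
for k in (1, 3, 5, 10, 20):
    print(k, round(step_success(0.6, 0.9, k), 3))  # gains flatten toward q = 0.9
```

With k = 1 the model reduces to p, since the verifier has nothing to choose between; past a handful of candidates, the verifier's own accuracy q becomes the ceiling.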