How to Use NVIDIA PivotRL Framework for Efficient AI Agents

PivotRL cuts compute costs and improves accuracy for multi-step AI tasks that require strategic thinking. NVIDIA’s Nemotron-3 paired with PivotRL delivers a 4.17% boost in in-domain accuracy compared to standard supervised fine-tuning (SFT), and it gets there with roughly one-quarter of the rollout turns that end-to-end reinforcement learning needs. If your AI agents handle complex workflows, such as autonomous code generation or multi-step web browsing, this approach deserves your attention. PivotRL is not a routine fine-tuning method; it is a hybrid training strategy built for efficiency and reliability.

At AI 4U Labs, we use PivotRL to unlock the full potential of GPT-5.2 agents, slash operational costs, and serve hundreds of thousands of users with smooth latencies under 200 ms.

Understanding Long-Horizon Agentic Tasks

Long-horizon agentic tasks push AI models to plan and carry out a chain of interdependent actions—not just quick one-off completions. Examples include:

- Multi-turn assistants that dynamically adjust to context changes

- Autonomous coding workflows tackling complex projects step-by-step

- AI agents interacting sequentially with external tools like CRMs or developer platforms

Errors tend to snowball in these setups. Simply fine-tuning GPT-4.1-mini or GPT-5.2 on labeled data leaves agents fragile once the task lengthens or the environment shifts.
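To make the snowball effect concrete, here is a rough sketch. It assumes every step succeeds independently with the same probability, which is a simplification (real agent steps are correlated). The function name and the sample numbers are illustrative and do not come from NVIDIA’s results.

```python
def chain_success_probability(per_step_success: float, num_steps: int) -> float:
    """Probability that every step in a chain succeeds, assuming independent steps."""
    if not 0.0 <= per_step_success <= 1.0:
        raise ValueError("per_step_success must be between 0 and 1")
    if num_steps < 0:
        raise ValueError("num_steps must be non-negative")
    return per_step_success ** num_steps


if __name__ == "__main__":
    # Illustrative numbers only: a 95%-reliable step repeated over longer horizons.
    for steps in (1, 5, 20, 50):
        print(steps, round(chain_success_probability(0.95, steps), 3))
```

Under this assumption, a step that succeeds 95% of the time completes a 20-step task only about 36% of the time, which is why small per-step error rates matter so much on long horizons.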

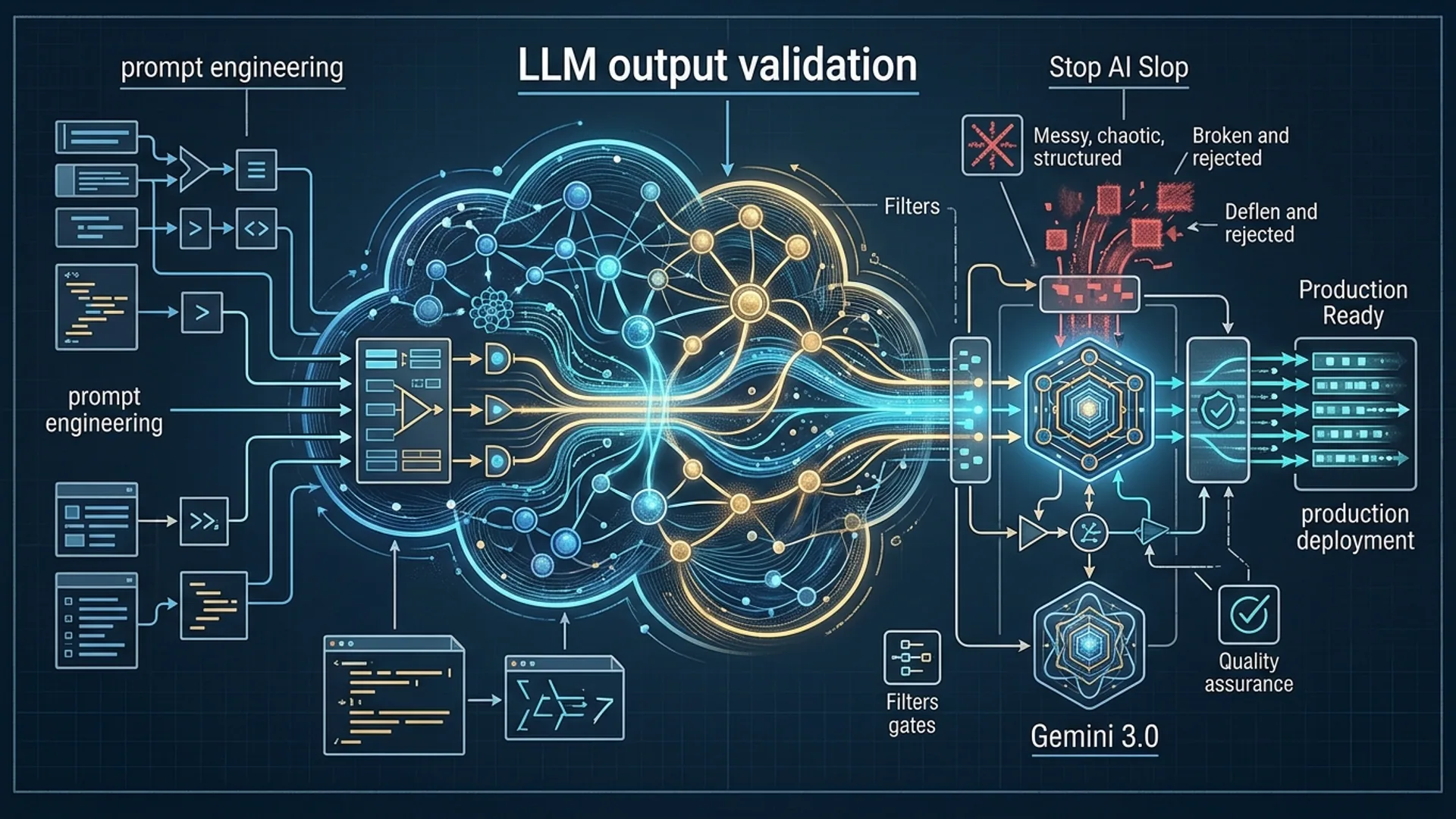

PivotRL overcomes this challenge by blending supervised fine-tuning with reinforcement learning (RL), precisely targeting the drop-off that standard fine-tuning struggles with.

What Sets PivotRL Apart?

PivotRL is NVIDIA’s post-training framework bridging supervised fine-tuning and reinforcement learning to unlock better performance for agentic AI.

Here’s what we see with PivotRL:

- A 4.17% higher in-domain accuracy than pure SFT across four agentic domains (NVIDIA, arxiv.org, 2026)

- A 10.04% improvement in out-of-domain accuracy, meaning your agents generalize better

- 4x fewer rollout turns than standard RL on agentic coding tasks, which cuts compute costs substantially

| Feature | Standard SFT | End-to-End RL | PivotRL Framework |
|---|---|---|---|
| Accuracy Gain | Baseline | Variable, often unstable | +4.17% (in-domain) |
| Out-of-Domain Robustness | Limited | Moderate | +10.04% |
| Rollout Turns Needed | - | Very high | 4x fewer than full RL |
| Compute Efficiency | Moderate | Very expensive | High |
| Training Stability | High | Low | High |

PivotRL’s hybrid nature combines the strengths of supervised fine-tuning and RL, letting AI systems plan and act through multiple sequential decisions—what we call agentic AI. A rollout turn means a single step where the agent interacts with its environment or executes an action during RL training.
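The notion of a rollout turn can be illustrated with a toy loop. This is a minimal sketch under stated assumptions: `ToyEnv`, `run_rollout`, and the random policy are hypothetical stand-ins, not part of PivotRL or any NVIDIA API. Each pass through the loop is one turn, and the turn budget caps how many the episode may use.

```python
import random
from dataclasses import dataclass


@dataclass
class ToyEnv:
    """Hypothetical environment: the episode ends once `goal` correct actions are taken."""
    goal: int = 3
    progress: int = 0

    def step(self, action: int) -> tuple[float, bool]:
        # Action 1 counts as "correct" in this toy setup; anything else makes no progress.
        if action == 1:
            self.progress += 1
        done = self.progress >= self.goal
        reward = 1.0 if done else 0.0
        return reward, done


def run_rollout(env: ToyEnv, policy, max_turns: int) -> tuple[int, float]:
    """Run one episode and return (turns_used, total_reward)."""
    total_reward = 0.0
    for turn in range(1, max_turns + 1):
        reward, done = env.step(policy())  # one rollout turn = one agent-environment step
        total_reward += reward
        if done:
            return turn, total_reward
    return max_turns, total_reward


if __name__ == "__main__":
    rng = random.Random(0)
    turns, reward = run_rollout(ToyEnv(), lambda: rng.randint(0, 1), max_turns=25)
    print(f"turns used: {turns}, reward: {reward}")
```

Counting turns this way is what makes a "4x fewer rollout turns" comparison meaningful: the cost of RL training scales with how many of these steps you execute.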

Setting Up Your Environment for PivotRL

Before you get your hands dirty, here’s what you need:

- A GPU-enabled setup with NVIDIA hardware supporting CUDA 13+.

- PyTorch 2.1+ with NVIDIA Apex for mixed precision training.

- LLVM tools if your task involves code execution.

- A base model ready to fine-tune—we worked with GPT-5.2 checkpoints.
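Before starting, it can help to verify the prerequisites above programmatically. The following is a small sketch, not an official setup script: the helper names are made up for illustration, and it only checks the PyTorch version and CUDA visibility (it does not verify the CUDA toolkit version, Apex, or LLVM).

```python
import importlib.util
import re


def parse_version(version: str) -> tuple[int, ...]:
    """Turn a string such as '2.1.0+cu121' into (2, 1, 0), ignoring build suffixes."""
    numbers = []
    for piece in version.split("+")[0].split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        numbers.append(int(match.group()))
    return tuple(numbers)


def meets_minimum(version: str, minimum: str) -> bool:
    """Compare dotted versions numerically, so '2.10' counts as newer than '2.9'."""
    return parse_version(version) >= parse_version(minimum)


def check_environment() -> dict[str, bool]:
    """Report whether PyTorch is importable, new enough, and can see a CUDA device."""
    report = {"torch_installed": False, "torch_2_1_plus": False, "cuda_available": False}
    if importlib.util.find_spec("torch") is None:
        return report
    import torch  # imported lazily so the check still runs on machines without PyTorch

    report["torch_installed"] = True
    report["torch_2_1_plus"] = meets_minimum(torch.__version__, "2.1")
    report["cuda_available"] = torch.cuda.is_available()
    return report


if __name__ == "__main__":
    print(check_environment())
```

Comparing versions as integer tuples avoids the classic string-comparison trap where "2.10" sorts before "2.9".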

Workspace Setup

For serious experiments, aim for a cluster with at least 8x NVIDIA A100 80GB GPUs to match NVIDIA’s Nemotron-3-Super-120B-A12B training scale.

Step-by-Step: Training Your AI Agent with PivotRL

PivotRL follows a three-phase training pipeline:

- Supervised Fine-Tuning to give your agent a solid foundation.

- The Pivot Phase where you apply reinforcement learning with fewer rollouts to sharpen policy efficiently.

- Policy Distillation to squeeze the improved policy back into your base model size.
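The three phases above can be sketched as an ordered pipeline. Everything in this snippet is hypothetical: `PipelineConfig`, `build_pipeline`, `run_pipeline`, and the field names are illustrative placeholders, not PivotRL’s actual interface, and the phase runner is a stub you would replace with real training code.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PipelineConfig:
    """Hypothetical settings; the field names are not taken from PivotRL itself."""
    sft_epochs: int = 1
    pivot_rollout_turns: int = 25  # the RL-phase budget quoted in this article
    distill_epochs: int = 1


def build_pipeline(config: PipelineConfig) -> list[tuple[str, dict[str, int]]]:
    """Return the three phases in execution order together with their settings."""
    return [
        ("supervised_fine_tuning", {"epochs": config.sft_epochs}),
        ("pivot_rl", {"rollout_turns": config.pivot_rollout_turns}),
        ("policy_distillation", {"epochs": config.distill_epochs}),
    ]


def run_pipeline(config: PipelineConfig,
                 run_phase: Callable[[str, dict[str, int]], None]) -> list[str]:
    """Call `run_phase` for each phase in order and return the names that completed."""
    completed = []
    for name, settings in build_pipeline(config):
        run_phase(name, settings)
        completed.append(name)
    return completed


if __name__ == "__main__":
    # Stub runner that only prints; real training code would go here.
    run_pipeline(PipelineConfig(), lambda name, settings: print(name, settings))
```

Keeping the phase order in one place makes it harder to run the RL phase on a model that has not been through supervised fine-tuning first.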

Sample: Fine-Tuning GPT-5.2 for Agentic Code Generation

This streamlined approach uses only 25 rollout turns during the RL phase, far fewer than standard RL training (around 100 turns in NVIDIA’s benchmarks), and it improves reasoning and generalization without inflating compute costs.

Efficiency Gains at a Glance

NVIDIA’s benchmarks on Nemotron-3-Super-120B-A12B show:

- PivotRL needs just 25 rollout turns to match or beat the accuracy of full RL, which uses around 100 turns.

- GPU hours drop by roughly 70-75%, freeing up resources for larger experiments or faster deployments.

- Agents trained this way can serve real-time requests with latencies below 200 ms.

For a 30 million token training run, end-to-end RL might cost around $15K. PivotRL gets better results for under $5K, cutting operational expenses drastically.
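The savings figures in this section are easy to sanity-check. The helper below is a plain arithmetic sketch; the inputs are the numbers quoted above, and `percent_reduction` is just an illustrative name.

```python
def percent_reduction(baseline: float, reduced: float) -> float:
    """Percentage saved when going from `baseline` to `reduced`."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (baseline - reduced) / baseline


if __name__ == "__main__":
    # Rollout turns: about 100 for full RL versus 25 for PivotRL.
    print(f"rollout turns saved: {percent_reduction(100, 25):.1f}%")
    # Cost estimate: about $15K for end-to-end RL versus $5K as the PivotRL upper bound.
    print(f"cost saved: {percent_reduction(15_000, 5_000):.1f}%")
```

Going from 100 turns to 25 is a 75% reduction, which sits at the top of the 70-75% GPU-hour range, while the dollar estimates imply savings of at least about 67%.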

Real-World Uses: Coding, Browsing, and Tool Integration

Autonomous Code Generation

We build GPT-5.2 assistants that generate bug fixes, refactor legacy code, and manage multi-file projects. PivotRL’s tailored reward models emphasize code correctness and maintainability, leading to the 4.17% in-domain accuracy gain reported by NVIDIA (NVIDIA, 2026).

Web Browsing and Research

Agents handling complex browsing tasks, API queries, and data summarization benefit from PivotRL’s improved out-of-domain robustness. The 10.04% improvement means better adaptability to rapidly changing websites and data sources.

Tool Use and Integration

PivotRL pairs well with MCP servers (check our GitAgent Tutorials) to train agents cost-effectively. This empowers agents to orchestrate tool calls securely and efficiently.

Performance Benchmarks and Tips

| Metric | Result | Source |
|---|---|---|
| In-domain accuracy gain | +4.17% | NVIDIA, arxiv.org, 2026 |
| Out-of-domain accuracy gain | +10.04% | NVIDIA, arxiv.org, 2026 |
| Rollout turns vs full RL | 25 vs 100 (4x fewer) | NVIDIA, arxiv.org, 2026 |
| Production latency per query | Sub-200 ms | AI 4U Labs, 2026 |

Tips for Success

- Adjust rollout turns based on task complexity but keep them low to manage compute load.

- Customize reward functions to your specific domain—for codegen, AI 4U uses rewards focused on code quality.

- Integrate PivotRL with MCP servers to connect agents to live external data smoothly.
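As an illustration of a domain-specific reward for code generation, here is a minimal sketch. It is an assumption about what such a reward could look like, not the reward AI 4U Labs or NVIDIA uses: `code_reward` is a hypothetical helper that scores only correctness (parsing plus unit-test pass rate) and ignores maintainability.

```python
import ast
from typing import Any, Sequence


def code_reward(source: str, func_name: str,
                test_cases: Sequence[tuple[tuple[Any, ...], Any]]) -> float:
    """Score generated code in [0, 1]: 0 if it fails to parse, else the share of tests passed."""
    try:
        ast.parse(source)
    except SyntaxError:
        return 0.0

    namespace: dict[str, Any] = {}
    try:
        # WARNING: exec runs untrusted code; a real setup needs a proper sandbox.
        exec(source, namespace)
    except Exception:
        return 0.0

    func = namespace.get(func_name)
    if not callable(func) or not test_cases:
        return 0.0

    passed = 0
    for args, expected in test_cases:
        try:
            if func(*args) == expected:
                passed += 1
        except Exception:
            pass  # a crashing test case simply earns no credit
    return passed / len(test_cases)


if __name__ == "__main__":
    candidate = "def add(a, b):\n    return a + b\n"
    print(code_reward(candidate, "add", [((1, 2), 3), ((0, 0), 0)]))
```

A graded score like this gives the policy partial credit for partially correct code, which tends to be a more useful training signal than a single pass/fail bit.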

Troubleshooting

Pivot phase isn’t improving accuracy?

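- Revisit your reward function. A reward that is misaligned with the task goal pushes the policy toward the wrong behavior.

- Check the rollout turn count: too few turns give the policy little signal, while too many erode the compute savings.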

- Confirm your dataset includes enough variety to avoid overfitting.

RL training feels unstable or noisy?

- Check your mixed precision setup (NVIDIA Apex): enable loss scaling, and fall back to full precision for numerically sensitive steps if losses overflow.

- Regularize policy entropy to maintain a good exploration-exploitation balance.

- Apply early stopping once validation metrics plateau.
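Entropy regularization, mentioned in the list above, is commonly implemented as an entropy bonus subtracted from the policy loss. The sketch below shows that standard idea in plain Python; the function names and the `entropy_coef` default are illustrative assumptions, not values from PivotRL.

```python
import math
from typing import Sequence


def softmax(logits: Sequence[float]) -> list[float]:
    """Numerically stable softmax over action logits."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy in nats; zero-probability actions contribute nothing."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def regularized_loss(policy_loss: float, logits: Sequence[float],
                     entropy_coef: float = 0.01) -> float:
    """Subtract an entropy bonus so that more exploratory policies get a lower loss."""
    return policy_loss - entropy_coef * entropy(softmax(logits))


if __name__ == "__main__":
    print(regularized_loss(1.0, [0.0, 0.0, 0.0]))   # uniform policy, largest bonus
    print(regularized_loss(1.0, [10.0, 0.0, 0.0]))  # near-deterministic policy, small bonus
```

A larger coefficient keeps the policy exploring for longer; if training collapses to a single action early, raising it is a common first adjustment.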

Deployment latency spikes?

- Profile and tweak batch sizes.

- Use MCP server’s JSON-RPC to parallelize external tool calls efficiently.
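Issuing tool calls concurrently rather than one after another is one way to tame latency spikes. The sketch below uses `asyncio.gather` with a simulated round trip; `fake_tool_call` is a stand-in for a real client, and the `tools/call` method name and parameter layout are assumptions about the server you would talk to.

```python
import asyncio
import json
import time


def make_request(request_id: int, tool: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request body for a tool call."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",  # assumed method name
        "params": {"name": tool, "arguments": arguments},
    })


async def fake_tool_call(payload: str, delay: float = 0.05) -> dict:
    """Stand-in for a network round trip; a real client would send `payload` to the server."""
    request = json.loads(payload)
    await asyncio.sleep(delay)
    return {"jsonrpc": "2.0", "id": request["id"],
            "result": {"tool": request["params"]["name"]}}


async def call_tools_concurrently(calls: list[tuple[str, dict]]) -> list[dict]:
    """Send all requests at once; gather returns responses in the order of the inputs."""
    payloads = [make_request(i, tool, args) for i, (tool, args) in enumerate(calls, start=1)]
    return await asyncio.gather(*(fake_tool_call(p) for p in payloads))


if __name__ == "__main__":
    start = time.perf_counter()
    responses = asyncio.run(call_tools_concurrently(
        [("search", {"q": "a"}), ("fetch", {"url": "b"}), ("crm_lookup", {"id": 7})]
    ))
    print(responses)
    print(f"elapsed: {time.perf_counter() - start:.2f}s")
```

With concurrent dispatch, total wait time is roughly that of the slowest call instead of the sum of all calls, as long as the tools do not depend on each other’s results.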

Conclusion: Why PivotRL Should Be Your Go-To for Agentic AI

PivotRL hits the sweet spot between standard fine-tuning and full-scale reinforcement learning. It improves accuracy, boosts generalization, and uses 4x fewer rollout turns than pure RL training.

At AI 4U Labs, this framework lets us build powerful, efficient GPT-5.2 agents handling millions of queries monthly without draining budgets.

If your agents tackle complex, multi-step workflows—from coding to browsing to tool orchestration—PivotRL will streamline your post-training pipeline and get you better results faster.

FAQ

What makes PivotRL better than standard supervised fine-tuning?

It merges supervised fine-tuning with reinforcement learning to handle long-horizon decision-making. You avoid the massive rollout counts pure RL requires.

How does PivotRL save compute?

By slashing rollout turns from around 100 to just 25, it reduces GPU hours by 70-75%, making large-scale training affordable.

Which tasks benefit the most?

Agentic tasks that involve sequential decisions—like autonomous coding, complex API usage, and context-aware web agents—see the biggest gains.

Can I use PivotRL beyond NVIDIA’s Nemotron?

Absolutely. Though it shines on big transformers like GPT-5.2 or Claude Opus 4.6, it’s model-agnostic and works well with custom reward models.
